第十一章 三头同盟时代的战争

布伦迪西翁的和约已经达成,安东尼也迎娶了奥克塔维娅。现在,三头同盟可以继续为士兵们分配土地,并且运用手中的权力来提升名望、壮大势力了。东方正在召唤着安东尼。他带着新婚妻子奥克塔维娅去了雅典,然后开始准备大举东征帕提亚人。安东尼给自己定下的目标是为公元前53年战败被杀的克拉苏报仇,同时完成尤里乌斯·恺撒生前的东征计划。而此时的屋大维也有紧迫的事情要处理。首先,他需要完成分配土地的承诺。其次,伟大的庞培之子塞克斯图斯·庞培仍然是一个问题。虽然三头同盟已经和塞克斯图斯·庞培达成了和解,但他仍然拥兵自重,在西西里岛割据一方,这种和平脆弱无比。

剿灭海盗之王

差不多是在他的父亲被恺撒击败以后,塞克斯图斯·庞培开始了他的军事生涯。此时,他盘踞在西西里岛,并且由此建立了海上霸权,控制着西地中海的重要航道。以前,庞培为安东尼提供了海军支援,还协助安东尼的家人完成了撤离,让安东尼欠下了人情债。于是,安东尼帮助庞培与三头同盟签订了和约,但李必达因此吃了一些亏。[280]这份和约还准许了那些被流放的贵族返回罗马。尽管和解还未达成,但至少这些已然失败的贵族得以摆脱流放生活。

屋大维和庞培之间的和平打从一开始就不可能持续多久。他们的关系本就很紧张,同时,没有什么因素能够促使他们化敌为友。屋大维控制了意大利的丰富资源,这就意味着从长远来看,他们的竞争必定会以屋大维的胜利告终。庞培及其追随者应该也能看清这一点。此外,屋大维肯定一方面知道夺取西西里的田地能够带来多么巨大的好处,另一方面也深知庞培的舰队不容小觑。维持一段时间的和平既可以让屋大维有机会来发展自己的海军,也可以削弱庞培的势力,因为舰队的建造和维持都需要耗费大量的人力、物力。随着时间的流逝,庞培的资源会越来越紧张,其追随者也会相应地减少。

屋大维提出的宣战理由是庞培的海盗行径。不过,最终战争的到来还是因为庞培麾下的一名比较重要的舰队统帅米诺多洛斯(Menodoros)投靠了屋大维。现在,屋大维有了一支足以与庞培一战的舰队。公元前38年,战争爆发了。第一场大规模战斗发生于意大利南部库马伊(Cumae)附近的海域,屋大维的舰队告负。海战本身其实并没有造成特别大的损伤,但是屋大维这边的舰队统帅经验相对较少,遇上了风暴,许多船只都是在这个时候被毁的。屋大维就这样失去了在海上与庞培对抗的能力,而庞培的制海权令其可以自由地劫掠意大利的沿海地区,这让接下来一年时间里的屋大维深感无奈。[281]

到了公元前36年,屋大维再次有了可堪一用的舰队。安东尼造访了意大利,给屋大维带来了一百二十艘船只。而屋大维需要相应地给他提供两万名士兵,支援他对抗东方的帕提亚人。李必达则得到了指示,让他自7月1日开始从北非发起协同攻击。这一次,屋大维的部队又遇上了糟糕的天气,李必达的攻势也不顺利。[282]但是,庞培的处境还是不可避免地恶化了。李必达的十二个军团最终成功地登陆了西西里,并且围住了位于岛上西端的城市利吕拜翁[Lilybaeum,今天的马尔萨拉(Marsala)]。现在,庞培必须在西西里岛上直面一支实力强劲的军队。而且,屋大维的部队也即将到来。

阿格里帕是屋大维的密友。从屋大维踏入政坛开始,他就一直站在屋大维这边。在佩鲁西亚战争期间,阿格里帕也有出众的表现。此时,他已经在意大利本土西面的埃奥利群岛(Aeolian Islands)上建立了前沿基地。接着,他率军大致朝着正南方航行,在西西里岛东北部的缪莱(Mylae)附近和庞培的舰队相遇。此次交手是屋大维的部队第一次在海战中占上风。虽然这场战斗本身并没有产生重大的战果,但阿格里帕的胜利让他得以登陆西西里,把第二支三头同盟的军队带到了岛上。而且,就在庞培专注于北部的战斗之时,屋大维乘机开始从东部的陶洛米尼翁[Tauromenium,今天的陶尔米纳(Taormina)]登陆西西里。

然而,屋大维所部的登陆行动受到了阻碍。庞培的海陆军队撤离缪莱以后沿着海岸经过梅萨纳(Messana)[今天的墨西拿(Messina)],往南遇上了正在登陆的屋大维。他们早已料到屋大维会尝试登陆。既然已经无法阻止阿格里帕的行动,那么他们就果断地放弃,转而试图出其不意地进攻屋大维。于是,屋大维麾下人数较少的这支部队被打乱了,陷入了非常危险的境地。不过,他最后还是稳住了阵脚。其原因大概有两个:首先,夜幕已经降临;其次,庞培的部队消耗了过多的体力。当天夜里,屋大维抓紧时间巩固了营地的防御工事。第二天,双方开始对峙。[283]

不过,屋大维的这个营地毕竟独木难支。所以,他召集海军登上了小型船只,试图突破庞培的阻拦,转移到别处去。虽然庞培击败了屋大维的舰队,但屋大维本人成功地逃到了意大利的海岸上。如果庞培能够在这场战斗里抓住屋大维,那么战争的走向也许会发生剧变。不过,最终找到屋大维的是一些一直在山丘上围观战斗的当地居民。他们帮助了屋大维,令其得以顺利地与部下会合,并且开始组织后援部队。

与此同时,屋大维留在陶洛米尼翁的军团受到了不小的压力。营地的位置不是很理想。而且,驻扎的部队有一万九千人,但他们的补给却不多,尤其缺少饮用水。负责指挥的将领是科尔尼非奇乌斯。他试图与对方展开战斗,但庞培等人深知己方的优势,拒绝出战。因此,科尔尼非奇乌斯只好率领全军出去寻找饮用水和援军。[284]他顶着敌方的骚扰,向西西里岛的内部挺进。他们途中需要经过埃特纳火山(Etna)附近的熔岩平原,这是一块崎岖、干燥的荒芜之地,当地居民通常只会在夜里来此。对于军队而言,在这种地方行进是非常困难的。而且,庞培的人还在不停地干扰科尔尼非奇乌斯的行动。他们一面阻挡在某些路线上,一面派出部队来进行骚扰。庞培的骑兵熟练地保持着距离投射火力,致使许多人负伤,进而拖慢行军的速度。路上的每一个庞培派据点都阻碍着科尔尼非奇乌斯的行军,让士兵们越发渴望水源,同时还增加了伤员的数量。就这样,在这仲夏之时,科尔尼非奇乌斯等人既没有据点也没有充足的饮用水,只能步履维艰地在西西里岛的熔岩平原上缓慢前行。这支部队可以说是朝不保夕。

终于,他们发现了一处泉水,但是旁边还有不少的庞培派军队。如果他们无法快速地突破敌方的防线获得水源,那么这支部队就很可能会因疲劳和干渴而崩溃。就在他们前进的时候,又有一支相向而来的军队出现在他们的视野里。但是,由于双方距离过远,他们无法分清敌我。如果这是庞培方的援军,那么科尔尼非奇乌斯等人就彻底失去了得救的希望。不过,位于泉水处的庞培方部队距离这支军队较近,他们应该已经通过斥候探得了情报。这支靠近的部队属于阿格里帕,他正在寻找科尔尼非奇乌斯的下落。于是,庞培的部队离开了,科尔尼非奇乌斯等人得救了。[285]

屋大维带来的军团和阿格里帕会师以后,庞培的处境就很艰难了。三头同盟的强大陆军业已在西西里岛上展开了行动。庞培的最大优势原本是他的舰队,但现在,他已经无法完全掌控海洋。当然了,他还可以守住一些城镇,但已然无力阻止敌方自由行动。李必达正在逐步地控制西西里岛的西部地区,阿格里帕和屋大维的部队则正在东部虎视眈眈。庞培现在的最佳策略就是寻求海上决战。如果他能摧毁敌方的海军,那么就可以进一步切断敌方和意大利的联系,给敌方的补给造成巨大的压力。公元前36年9月3日,庞培的舰队出航了。对此,屋大维和阿格里帕卓有自信地接受了挑战,双方舰队会战于缪莱以西的瑙洛库斯[Naulochus,大概在今天的斯帕达福拉(Spadafora)]。

罗马人的海战有一套标准的模式。在古老的三层划桨战船时代,海战的目标是用己方的船只迅猛地撞击对手,以期击毁敌方船只。相比之下,罗马人的海战更为稳健,也更加缓慢。他们更看重近距离的缠斗和接舷战。[286]因此,罗马人倾向于建造特别高大的船只。船上还会有高高的塔楼以供士兵们在塔楼上向对手投射火力。同时,他们还会准备厚重的防护板,用以阻挡敌方的火力。在这种设计思路下建造起来的船只都是笨重的庞然大物,载着大量的士兵和装备。这些宏伟的海上堡垒还会整齐地排列为一条线,以免让对手抓住侧翼的破绽甚至找到落单的船只。在这个阶段,船员的目标就是让友方船只尽可能紧密地靠在一起,不给对手插队的机会,但同时又必须保持足够的距离,以免不同船只的桨碰撞在一起。战斗打响以后,双方的船只就会开始缠斗,互相投射火力。撞击船会反复地进出,以期逃离敌方的攻击或者发起下一轮冲锋,从而保持己方的阵形或者摧毁敌方的阵形。船上的士兵必须在瞄准对手的同时闪躲敌方的火力。他们还会注意抓钩,避免让自己的船被旁边下沉的船只拖下水。他们也会挑选看起来比较弱的对手,避开强敌。等到合适的时机来临,他们就会纵身扑向对手,开启接舷战。总而言之,海战是漫长的消耗战。

在瑙洛库斯海战当中,阿格里帕运用了一种新设计的抓手。随着双方船只的靠近,他们把这种抓手伸到了庞培的舰队的甲板上,用来拨走敌方士兵或者毁坏甲板上的防御工事或者火力投射装置的引擎。也许,这种武器的主要效果其实只是引发敌人的骚乱。因为这是一种前所未见的新武器,庞培的士兵们都没有准备长杆子来进行反制。三头同盟的舰队大概由此而获得了些许的优势,并且将优势逐步扩大为胜利。

此时,庞培和他的陆军正在营地里观看战局。渐渐地,他们的舰队难以支撑下去了。在这一天的战事结束的时候,三头同盟的海军唱起了胜利的颂歌,屋大维的军团也在岸上附和。庞培派陷入了绝望。庞培本人当即离开,回到了梅萨纳的基地。据说,他在基地里一言不发,没有下达任何命令。他的军团看不到未来,选择了投降。[287]

庞培本人还有一些挣扎的余地,但他已经无力回天了。他一边召集部队,一边逃往东方,然后请求安东尼看在他之前曾经为其效劳的分儿上出手相助。与此同时,庞培还派出使者前往帕提亚,想要再寻一条后路。安东尼接见了庞培的信使,承诺只要他怀着善意前来就会认真地考虑他的请求。然而,此时的庞培毕竟已经丧失了谈判的资本,安东尼没有理由与他交涉。所以,当庞培落脚于小亚细亚以后,安东尼就开始调遣海陆部队过来包围庞培。而庞培派往帕提亚的使者也被拦截了下来,他只好尝试着作战。他取得了一些战果,但他已经深陷重围。最终,他烧毁了剩下的舰船,准备沿陆路逃往帕提亚。他的势力彻底失败了。他的部下们也都明白了这一点,纷纷离他而去。最后,庞培被俘,并且在不久以后遭到了处决。[288]

西西里局势的走向有些奇怪。庞培的残部并没有投靠屋大维,反而投奔了李必达,令其顺势宣布自己才是西西里的主人,由此提出了提高自身在三头同盟当中的地位的要求。于是,他们二人的部队与其展开了对峙。然而,此时的广大士兵仍然像公元前40年那样不愿自相残杀。至于庞培的军团,他们都是败军,早已没了士气,当然也不会愿意为了李必达而战死沙场。屋大维带人来到李必达的营地旁边,宣布对方士兵主动来降可以免罪。然后,双方爆发了小规模的打斗。屋大维的一位朋友在打斗中受了伤,于是,他匆忙下令撤退。不过,李必达的一部分士兵已经听到了屋大维的话,他们的战意越来越少。接着,李必达的部下开始三三两两地跑到屋大维那边去。此情此景,李必达非常熟悉,当初面对安东尼的时候他就经历过这一幕。于是,他换下了戎装,亲自去会见屋大维。他鞠躬以示谦卑,请求屋大维饶过自己的性命。屋大维则转身离去,让部下去欢迎李必达加入己方的阵营。就和当年一样,他的对手展示了自己的仁慈,留下了他的性命。屋大维或许想要以此向所有的恺撒派人士表明,无论发生了什么事,往日的交情始终是存在的。同时,屋大维的举动还可以说明他根本不认为李必达会威胁到自己。之后,李必达被剥夺了军权,只保住了最高祭司这个最重要的神职。归根结底,李必达的那点政治影响力实在是不值得让人动手杀他。[289]

不过,战争还没有完全结束,屋大维就遇到了兵变。士兵们要求得到腓立比之战以后那种规格的奖赏,屋大维不太情愿,并且试着坚持己见。这种态度激怒了与会的士兵,他们开始反叛。一个名叫奥非里乌斯(Ofellius)的军官成了他们的领导者,屋大维被迫撤回了自己的军帐。第二天,他较有信心地回到了士兵们的面前,表示愿意做出一些让步,并且承诺要带领大家前去讨伐伊利里亚人(Illyrians)。然而,奥非里乌斯失踪了。据说,屋大维的人在夜里悄悄地杀死了他。不管怎样,士兵们意识到自己的代表不见了。于是,屋大维在做出让步的同时加以威胁,他宣布不服从者再也没有参军的资格。屋大维这是在强调自己的权威,逼迫士兵们做出选择:要么永远地离开三头同盟的关系网络,再也没有分得奖赏的机会;要么服从这张关系网络的规矩。最终,屋大维恢复了自己的权威。或许,他确实依赖于这些军人,但这些军人无疑也依赖于屋大维。[290]

这是屋大维的光辉时刻。他成功地取得了对西西里和阿非利加的控制权,保证了罗马城能够拥有稳定的粮食供应。他不仅为三头同盟击败了割据一方的庞培,还能凭着在粮食供应方面立下的功绩赢得广大平民的感激。贵族的观点或许有所不同。一些人应该是刚刚从庞培那里返回,还有一些也许仍然忠诚于布鲁图斯和卡西乌斯的理念。但是,屋大维这次取得的胜利再一次说明了这些人根本无关紧要。

屋大维毫不客气地利用了这份战功。平民会议表决让屋大维有权坐在保民官的座位上(很可能是永久性的),同时也给了他等同于保民官的神圣不可侵犯(sacrosanctitas)的权利。从此,伤害屋大维就是在犯罪。当然,这个权利只是一份荣誉。真正的刺客肯定不会在意。不过,值得注意的是,这两项长期有效的特权都把屋大维和保民官联系在了一起。[291]由此,屋大维将自己塑造为罗马人民的保护者,宣布自己对人民负有义务,同时强调了人民也依赖于他。

屋大维还在罗马城的正中心建造了一座独特的纪念柱,用以长期纪念这次的胜利,并且警告那些妄图与自己作对之人。按照罗马的古老传统,海战胜利的纪念物就是敌舰的船首和撞击锤。屋大维命人截下了敌船的撞击锤,然后将其添加到罗马城广场中心新建的纪念柱上。这座建筑物(未能保存至今)占据了罗马城内最为重要的政治场所,吸引着无数人的目光。纪念柱的顶端还有屋大维的金色雕像。

一般来说,只有神灵才会享有贵金属制成的雕像,屋大维这是在尝试为自己塑造合适的公共形象。他是手握重权的人民守护者,位居罗马新秩序的中心,享受着军人的拥戴和平民的感激。现在,他足以比肩神明。

唯一有可能挑战其地位之人远在海外—位于亚历山大的安东尼和克莱奥帕特拉。不过,在屋大维庆祝胜利之时,三头同盟的制度看起来前所未有地强大而稳固。毕竟,真正终结了庞培的是安东尼,他之前还曾给屋大维提供作战所需的舰船。而且,无论他和克莱奥帕特拉之间到底有着怎样的关系,安东尼仍然是奥克塔维娅的丈夫、屋大维的姐夫。当然,安东尼和屋大维应该都不怎么想念李必达与他们分享权力的日子。战后,屋大维归还了安东尼借给他的舰队。其中的一部分船只已经毁于战火,屋大维对此做了相应的补偿。[292]此外,安东尼在这场战争中做出的贡献也得到了认可:罗马城中举办了庆祝安东尼杀死庞培的赛事;罗马城广场的演讲台附近立起了安东尼乘坐战车的雕像;和谐女神庙(Temple of Concord)里也有了安东尼的雕塑;新铸的货币上同时描绘着屋大维和安东尼两人的头像(如图3);安东尼本人和他的妻子(奥克塔维娅)、儿女都获得了在神庙里举办宴会的权利。[293]总之,屋大维认可了安东尼的重要地位,他们二人团结一致。心存怀疑的元老都不妨去看一看广场上的安东尼像,深刻地感受一下屋大维和安东尼之间互相依赖、合则两利的关系。此时此刻,他们二人想要做的不是自相残杀,而是自古以来罗马人最为擅长的一件事情:开疆拓土。

安东尼的东方霸业

自公元前53年罗马人在卡莱遭遇大败[指卡莱战役。在这次战争中,罗马执政官马尔库斯·克拉苏被杀,七个罗马军团全军覆没,罗马军队的军旗被夺。这是罗马与帕提亚帝国之间的一场重要战役,也是罗马的一次重大失败]以来,帕提亚人就成了罗马人的心腹大患。当时被击败的罗马远征军由七个军团和相应的辅助部队组成,其统帅是马尔库斯·克拉苏。他们被帕提亚人找到了行踪,然后在对方的骑兵手下吃了大亏。罗马的重步兵难以应对帕提亚的重甲骑兵和轻装弓骑兵。一般说来,罗马人在遇到这种失败的时候只会迎难而上,继续投入兵力。然而,当时西部的政治局面动荡不断,令罗马人暂时无法把资源调集至东方。后来,公元前44年,恺撒在大致平定西部乱局以后就开始准备复仇之战。不过,他在奔赴东方之前就遇刺身亡了。时至今日,罗马的国内秩序终于再度恢复,帕提亚当然就成了安东尼用以建功立业的对象。

在腓立比之战结束以后,安东尼就致力于平定东部的领土。除了埃及以外,东部的地方势力都曾经与布鲁图斯和卡西乌斯为伍。在这个过程当中,安东尼和克莱奥帕特拉的政治联盟以及他们二人之间的私人关系发挥了不小的作用。克莱奥帕特拉的对手遭到了清洗。安东尼先从希腊各地收取了资金,然后到埃及的亚历山大建立了自己的政治基地。[294]

就在这个时候,帕提亚人出兵了。也许,他们意识到了罗马人在政局稳定以后就会发动入侵。帕提亚军队的统帅是王子帕科鲁斯(Pacorus)和流亡至帕提亚的罗马人拉比恩努斯,他当初是恺撒在高卢的得力助手,但在内战期间投靠了庞培。后来他成为布鲁图斯和卡西乌斯派往帕提亚的使者。当腓立比之战的结果传到帕提亚之时,他选择了留在帕提亚。在民族主义兴起之前,这种改换门庭的行径虽然并不多见,但还不是什么难以设想的事情。

在拉比恩努斯的劝说下,帕提亚人决定主动出击。东部的罗马驻军大部分还是布鲁图斯和卡西乌斯留下的,他们不会坚定地效忠于安东尼,这让帕提亚军队有了可乘之机。他们在叙利亚地区长驱直入,阿帕梅亚(Apamea)和安条克(Antioch)相继沦陷。然后,拉比恩努斯西进至奇里乞亚,帕科鲁斯则南下犹地亚,控制了耶路撒冷。就这样,帕提亚人迅速地占领了黎凡特地区(Levant)的大块罗马领土。[295]然而,安东尼还没来得及发起反击就被迫带兵返回了意大利。

公元前39年,安东尼得以抽身回来处理帕提亚人的问题。他派出了共同经历过穆提纳战役的老朋友文提迪乌斯,命他率领一支大军先行。安东尼自己则途经希腊,落后一些。对此,我们的各位史家秉持一贯的态度,将其归因于安东尼的性格缺陷—懈怠。然而,安东尼的作战计划还是一如既往地取得了成功。

拉比恩努斯在和文提迪乌斯交上手之前都没有得知对方靠近的消息。随他进入小亚细亚的只有他自己的罗马士兵,而这些部队是无法抵挡文提迪乌斯的。安东尼或许就是知道了这个情报才做出了这样的安排,拉比恩努斯只好向叙利亚撤退。但是文提迪乌斯留下了重装部队,只带着骑兵和轻装部队快速行军,拦下了拉比恩努斯。接着,双方都开始等待援军抵达。

帕提亚的骑兵和罗马的步兵同时到达了。文提迪乌斯的军营在地势较高处。拉比恩努斯和帕提亚人前来叫阵,但文提迪乌斯并不想让己方部队暴露在帕提亚骑兵面前。[296]为了吸引对方出战,帕提亚人越来越靠近罗马军队的营地。最终,他们朝着文提迪乌斯设置在上坡的工事发起了冲锋。于是,文提迪乌斯下令出击。遭受突袭的帕提亚骑兵连忙转身逃跑,以求尽快远离罗马步兵。在一般情况下,这其实是正确的做法。但这一回,帕提亚骑兵直接撞上了文提迪乌斯安排好的骑兵。接着,罗马步兵也赶到了,帕提亚人被打得溃不成军。拉比恩努斯带着残部向西边转移,但无济于事。为了逃跑,他把部队化整为零,试图由此避开文提迪乌斯的部队。然而,还是有许多人未能逃出生天。拉比恩努斯本人一度乔装成平民,藏匿于奇里乞亚。但这种花招并不能拯救他,最后,拉比恩努斯被找了出来,遭到处决。[297]

帕提亚人没有做好防御的准备,他们动员起来的部队已经被击溃,还有大量的骑兵战死沙场。现在,他们既不能与罗马人展开野战,也没有足够的驻军来打守城战。黎凡特地区的诸位国王既然可以接受帕提亚人占领他们的土地,那么就同样可以接受罗马人的再次进驻。一旦罗马军队进入了叙利亚,帕科鲁斯就几乎只能选择撤退了。[298]

在安东尼收复失地的过程中遇害的最著名的人物是统治着犹地亚的国王安提柯(Antigonus)。他曾经与帕提亚人为伍,后来又试图抵御罗马人的入侵。在罗马人围攻拿下耶路撒冷以后,安提柯被绑在了十字架上遭受鞭打。他或是立刻或是没过多久就被处死了。在他以后统治犹地亚的是出身于其他家族的希律,也被称为大希律王(Herod the Great)。犹地亚社会的传统精英阶层是神职人员,在这些人眼里,希律算是一个异类。但是,对罗马人来说,希律几乎是一个完美的附庸国王。他有从军的履历,不惜动用严酷的手段来维持公共秩序,但他的地位归根结底来自罗马人的扶植。[299]

公元前38年,帕提亚人主动进攻了。文提迪乌斯如法炮制,再次引得帕提亚重装骑兵靠近了他的据点,然后先用火力扰乱敌方的军阵,接着出动部队进攻。这一次,帕科鲁斯被杀死了。帕提亚骑兵奋勇作战,夺回了他的尸体,但他们的败局已定。罗马军队成功地将其击退,文提迪乌斯又取得了一场大胜。[300]

战事暂停了,安东尼需要巩固罗马人对叙利亚的统治。同时,他还在分心关注屋大维和塞克斯图斯·庞培的战争。至于帕提亚人,他们现在不仅无力再次发动攻势,还又一次深陷于同室操戈的泥沼。大概在公元前37年,老国王奥罗迪斯(Orodes)去世了。在帕科鲁斯也已亡故的情况下,王位传到了另一位王子普拉提斯(Phraates)的手中,而这位王子还有不少的竞争对手需要解决。他杀死了科马基尼(Commagene)国王安条克[即科马基尼王国国王安条克一世(Antiochus Ⅰ)]的女儿和奥罗迪斯所生的儿子。安条克对此表示抗议。于是,他把安条克国王也给杀了。接着,至少有一部分帕提亚贵族举起了反旗,比如莫奈西斯(Monaeses)。[301]

现在,安东尼成了主动进攻的一方。他让奥皮乌斯·斯塔提阿努斯(Oppius Statianus)指挥大多数的士兵和辎重车队,自己则带着一股规模较小的部队朝着普拉斯帕(Praaspa)进发。这座城市位于今天伊朗的西北部,在当时是米底王国的一座重要城市。安东尼的计划其实是不差的,因为帕提亚人正在自相残杀,他想要趁此良机推动帕提亚帝国解体。但是,就在罗马人学习如何对抗帕提亚人及其盟友的同时,东方的诸位国王也逐渐掌握了与罗马人战斗的技巧。米底国王阿尔塔瓦斯迪斯(Artavasdes)深知一旦被罗马人围困在城里就必败无疑。所以,他在普拉斯帕留下了一些驻军,然后就主动出击。他避开了安东尼,找到了正在行军的斯塔提阿努斯,趁其不备,将其一举击溃。

安东尼的后援部队和辎重车队都被歼灭了,但他仍然拒绝撤退。接着,阿尔塔瓦斯迪斯开始骚扰安东尼的补给线。于是,安东尼尝试着在普拉斯帕附近就地收集补给,但他派出去的人手遭到了米底人的抵抗。渐渐地,安东尼的补给越来越少,他只好无奈地选择战略转移。途中,米底人趁机对其发起了攻击。安东尼带着一部分军队来到了亚美尼亚,但另有一部分人先是偏离了寻常的道路,然后就失去了方向。有一次,面对米底弓箭手的攻击,罗马步兵摆出了龟甲阵(testudo),把盾牌紧密地靠在一起,抵挡住来自所有方向的火力。米底人从未见过这种战术,他们以为自己的箭雨已经奏效,便贸然发起了冲锋,被重整阵形的罗马人打得大败。接着,罗马军队得以继续撤退。[302]

安东尼回到了埃及过冬,并且向屋大维和克莱奥帕特拉求援。屋大维做了一个不同寻常的决策—派奥克塔维娅和援军一起去安东尼处。但安东尼并不打算让两位妻子一起陪伴自己,他让奥克塔维娅回到了罗马。尽管各位史家依然有些偏颇地强调了安东尼在公元前36年的行动是比较大的失败,但身为当事人的米底国王阿尔塔瓦斯迪斯并不觉得自己有望赢得这场战争。大约在公元前35年底之前,米底人加入了罗马人的阵营。[303]第二年上半年,安东尼入侵了亚美尼亚,俘虏了亚美尼亚国王,并且击退了发起反攻的帕提亚人。[304]至此,安东尼已经赢得了战争:他驱逐了侵占叙利亚的帕提亚人,杀死了帕提亚的王位继承人帕科鲁斯,迫使米底王国依附于罗马,占领了亚美尼亚。后世的传统观点对安东尼怀有偏见,认为这场战争失败了,还以此来证明安东尼是一位沉溺于温柔乡的不称职的将军。然而,安东尼毫无疑问地取得了一连串的政治、军事胜利,将一大片广阔的土地纳入了罗马的势力范围,同时还削弱了这一地区其他政权的实力。安东尼或许的确没能实现他原先设立的远大目标,但他仍然完全有资格宣布自己已经取得了胜利。之后,他返回了亚历山大去庆祝自己建立的功业。

胜利返回亚历山大标志着安东尼在东方的霸业登上了巅峰。就在安东尼征服东方之时,屋大维也在忙着东征西讨。在塞克斯图斯·庞培死后,屋大维最先想到的是去安抚原本属于李必达的阿非利加行省。他抵达了西西里,但接着就改变了主意。达尔马提亚出现了一些问题,给了屋大维扩张领土的机会。于是,他召集了部队,准备作战。或许就是因为他自己也需要用兵,屋大维派往东方的援军数量没有完全符合安东尼的要求。[305]

公元前35年,达尔马提亚的战事开始了。罗马人早就在这个地区拥有了很强的影响力,亚得里亚海北部的制海权也掌握在他们手中。但是,越往内陆推进,他们遇到的阻力就越大。屋大维本人也在一次围城战中受了伤。不过,到了那一年的战事大体结束之时,屋大维似乎觉得战争已经结束了。[306]他离开了达尔马提亚,转而赶往高卢。据说,他想要入侵不列颠。[307]但是达尔马提亚人还没有认输,战火再次引燃。于是,屋大维就回到了达尔马提亚,再次负了伤,又带领罗马军队取得了胜利。在接下来的几十年里,这个地区仍然在不屈不挠地反抗着罗马人的统治。不管怎样,屋大维也算是取得了胜利。基本已经被驯服的元老院为他举办了凯旋仪式,表达了感谢之情。[308]

时至公元前33年,屋大维和安东尼面前都已经没有亟待解决的军事问题了。当然了,只要有心,他们肯定还能找到可以扩张势力范围的地方和相应的出军理由。然而,屋大维和安东尼的关系正在不停地恶化,其原因尚不明确。在勉强维持了十年的合作关系以后,他们自己以及他们身边的人都开始相互攻讦。这种现象的根源不是很明显。也许,他们其实未必走向决裂。此时的罗马毕竟已经大大不同,没有人像卢比孔河畔的恺撒那样骑虎难下。不过,我们也不能把问题简化成安东尼和克莱奥帕特拉的私人关系。罗马的确又一次不可避免地坠入了内战的深渊。等到尘埃落定,安东尼和屋大维的双头统治就将蜕变为奥古斯都一人执掌大权的君主制。

[280] Appian, Civil Wars, 5.71-75.

[281] Appian, Civil Wars, 5.78-92; Dio, 48.46-47.

[282] Appian, Civil Wars, 5.96-100; Dio, 49.1.

[283] Appian, Civil Wars, 5.98-110; Dio, 49.3-5.

[284] Appian, Civil Wars, 5.111-112.

[285] Appian, Civil Wars, 5.113-114; Dio, 49.6-7.

[286] 关于罗马人的战船,读者可以参考Michael Pitassi, Roman Warships(Woodbridge: Boydell and Brewer, 2011)。

[287] Appian, Civil Wars, 5.117-121; Dio, 49.9-10.

[288] Appian, Civil Wars, 5.133-144; Dio, 49.11; 49.18.

[289] Appian, Civil Wars, 5.123-126; Dio, 49.12.

[290] Appian, Civil Wars, 5.128; Dio, 49.13-14.

[291] 后来,奥古斯都以非保民官之身取得了保民官的权力,对罗马宪法做出了创新,发展出元首制。此时的这两项特权或许可以被视作元首制的先声。

[292] Dio, 49.14-15.

[293] Dio, 49.18.6-7.

[294] Dio, 48.24.

[295] Dio, 48.25-26.

[296] Dio, 48.39-40.

[297] Dio, 48.40.

[298] Dio, 48.41.

[299] Dio, 49.22.

[300] Dio, 49.19-21.

[301] Dio, 49.23.

[302] Dio, 49.27-30.

[303] Dio, 49.33.

[304] Dio, 49.39-40.

[305] Dio, 49.34.

[306] Dio, 49.35.

[307] Dio, 49.37.

[308] Dio, 49.38.

第十二章 安东尼和克莱奥帕特拉:爱情和与爱为敌之人

罗马革命已经改变了罗马政治的本质。在公元前40年9月的布伦迪西翁,屋大维和安东尼避免了交战,让三头同盟得以稳固地统治着罗马。无论还有多少共和国的制度、机关、传统保存了下来,罗马人都已经置身于完全不同的时代,国家的最高权力现在由三头同盟来掌握。而且,安东尼和屋大维都不太可能会主动放弃这种权力。我们或许可以把布伦迪西翁和约的签订视作罗马共和国的谢幕。不过,一般说来,我们会立刻把目光转移到历史舞台上的下一个剧目—安东尼和克莱奥帕特拉之间引人入胜的故事。

后人或许会凭着后见之明认为阿克提翁海战是安东尼和屋大维之间势必发生的总清算。他们本来就不是什么亲密的好朋友,还曾经在战场上兵戎相见。阿克提翁海战就是公元前44年至公元前40年以来的种种恩怨爆发的结果,同时也是向君主制演变的一个必要环节。但是,在公元前40年,几乎没有人能够断言三头同盟必将消亡。他们恐怕也难以想到罗马的社会、经济问题最终会被奥古斯都时代的那种君主制给画上一个句号。从公元前43年至公元前32年,三头同盟的政权一共经历了佩鲁西亚战争、屋大维和塞克斯图斯·庞培之间的战争、安东尼在东方遇到的波折、屋大维后来发动的战争以及李必达的失势。这个时间跨度甚至已经超过了很多现代的政权。在此期间,屋大维和安东尼还有他们身边的其他参政者之间难免会不断地产生各种各样的摩擦。但这并没有伤及大局,没有哪一方看起来早就准备着与另一方开战。当然,双方之间肯定充满了防备之意,两位领导者有可能只是在互相虚与委蛇。但是屋大维的确为安东尼在帕提亚的战事而派出了一些援军,安东尼也给屋大维提供了对抗庞培所需的舰队。我们固然不能说三头同盟毫无问题,但是这个政权的稳定性确实也没有那么弱。所以,我们有必要探寻屋大维和安东尼最后究竟是怎样走向决裂的。

安东尼和克莱奥帕特拉的故事有着非常丰富的内容,却未免有些可疑。这大体上要归因于后人。在当代的我们和这对古代的爱侣之间隔着两千多年来积累下来的形形色色的电影、戏剧、画作、小说等等。而且,即使我们排除了这些东西的影响,恐怕也不能触及“真相”,因为就连最早的相关文本也都有着一种虚构的色彩。看来,很可能在他们二人还统治着亚历山大的时候就已经有了许多神奇的事在人们口中流传。[309]关于安东尼和克莱奥帕特拉,我们主要参考的是普鲁塔克和狄奥的文本。但普鲁塔克生活的年代距离他们二人有一百多年的时间差。也就是说,各种谣言有了一百多年的时间来产生、演变。他的《安东尼传》是后来莎士比亚编写戏剧《安东尼与克莱奥帕特拉》的根据。至于狄奥,他著述的时间比普鲁塔克还要晚一百年。此外,我们还需要注意的是,普鲁塔克编写《安东尼传》的主要目的既不是还原历史的真相,也不是探究政治形势。他的主旨是以史为鉴,用历史上的教训来提升大家的道德水平。所以,有关安东尼和克莱奥帕特拉的各种谣言其实正好有助于达成他的编写意图,贴近于历史真相的评价反而没有那么重要。

除了普鲁塔克以外,还有很多人也把安东尼和克莱奥帕特拉的故事当作道德说教的案例。因此,有大量的相关文本都把叙述的重心放在了他们二人之间的亲密关系上。换言之,人们关注的重点不是权力斗争,而是个人的道德品质。这在一定程度上是因为每个人都需要思考如何完善自我,但只有个别人才会去考虑如何治国理政。此外,安东尼和克莱奥帕特拉是可以被树立为反面典型的,而这种反面人物更能引发大家的兴趣。身处两百五十年后的史家狄奥就把各种古代文献的内容精炼为一句话:“克莱奥帕特拉的妖术让安东尼成了欲望的奴隶。”[310]

总之,安东尼和克莱奥帕特拉被塑造为爱情故事的主角,而爱情基本上与政治无关。我们通常把这种情感划入非理性的领域,同时认为政治生活必然需要理性,而且一般由男性主宰。早在莎士比亚写出相关的戏剧之前,安东尼和克莱奥帕特拉就已经是广为人知的悲剧角色了。不过,现代人一般认为这段关系的主导者是邪恶的克莱奥帕特拉,她凭着东方女子的魅力迷住了安东尼。但是在古代,人们往往认为安东尼的道德缺陷才是引发这场悲剧的关键。随着安东尼和克莱奥帕特拉成为痴迷于爱情的典型人物,伟大的屋大维等人自然就成了爱情的敌人。在非黑即白的思想影响下,罗马的历史就被简化为爱情对帝国、情感对理性的道德对抗史。不过,这种通俗易懂、简洁明了的对比确实让很多后人引以为然。

但是,如果审视一下安东尼的具体行动,我们会发现他似乎不是一个合格的爱情的奴隶。从公元前40年起,在绝大部分时间里他都置身于意大利、小亚细亚、叙利亚、亚美尼亚和米底。亚历山大或许确实是安东尼的过冬之所,但总体说来,这对据说沉溺于爱情的夫妻有相当长的时间分居异地。那么,我们或许不应该把接下来的这场战争归因于克莱奥帕特拉的妖术或者安东尼的欲望。此外,我们也不太能相信屋大维等人竟然在九年以后才察觉安东尼和克莱奥帕特拉之间有着异乎寻常的亲密关系。安东尼本人似乎也认为他和克莱奥帕特拉的关系不会影响到他和奥克塔维娅的婚姻。这也难怪,毕竟他的这种状态维持了将近八年都没有引发什么值得一提的政治问题。

在现代社会里,家庭私事通常与政治无关。但是在罗马人看来,这不是泾渭分明的两类事情。婚姻是政治化的。当然,性生活和婚姻不同。性生活是私事,但婚姻是公事,或者说政治关系。不过,公元1世纪的罗马人似乎越来越反对女性干政,这大概是因为尤里乌斯-克劳狄乌斯王朝[包括提比略(Tiberius)、卡里古拉(Caligula)、克劳狄(Claudius)和尼禄(Nero)]的宫廷政治给人们留下了教训。后来,这种视婚姻为公事的观念还进一步延伸到了性生活上,很多人都关心罗马皇帝的性生活对象是谁。然而,在共和国时代,我们似乎很难说真的有人会这么在意谁和谁上了床(除了当事人和他们身边的亲朋好友)。在战争来临之前,安东尼就自己和克莱奥帕特拉的关系给屋大维写了一封信。[311]他的这些文字同样反映了前文所提的论断:

你怎么了?就因为我和女王上了床吗?她是我的妻子。我不是九年以前就和她这样了吗?你难道只和德鲁茜拉(Drusilla)一个人上床吗?提尔图拉(Tertulla)、提兰提拉(Terentilla)、鲁菲拉(Rufilla)、萨尔维娅·提提森尼娅(Salvia Titisenia)或者别的什么女人,你难道没和她们上过床?在什么地方和谁一起享受欢愉有什么关系呢?

鉴于苏埃托尼乌斯引用的部分很简短,我们得审慎地考虑一个问题:屋大维对他抱怨的到底是什么?按照安东尼的说法,屋大维抱怨的是他和多个女性保持性关系。然而,从后来的史料来看,屋大维抱怨的很可能是安东尼被克莱奥帕特拉“支配”了。毕竟,这才是他的开战理由。不管怎样,安东尼都是在佯作不知。他的意思是,利用他人的性生活来发起政治攻击是前所未有的。但这本来就是罗马人的惯用手段,虽然后面的这些才是比较常见的名目:同性恋、通奸、溺爱情人或者因私生活(尤其是和地位较低的女性)而玩忽职守。

况且,克莱奥帕特拉绝非寻常女子。安东尼自己也在信件的开头就提到了—她是女王。安东尼和克莱奥帕特拉还组建了家庭,让问题变得更加复杂。罗马人采用一夫一妻制。不过,就像其他的许多奴隶制社会一样,一夫一妻制并不意味着罗马人只能有一个性生活的对象。而且,在罗马人看来,性生活不是一种罪,而是很正常的一种活动。需要用制度来严格约束的只是传宗接代的事情—每个罗马男性只能拥有一位妻子来为他生育合法的后代。

此外,妻子也是家庭的核心人物。一方面,她的地位和人脉可以为其丈夫所用。安东尼以实际行动向所有的罗马人证明了他是有能力和一位女王组成家庭的男人。另一方面,对于安东尼这样的大人物而言,妻子还是他的政治伙伴。一般说来,人们都认为夫妻二人是会互相支持的。[312]在晚期罗马共和国,虽然男性往往是家庭的支配者,但是女性显然也能发挥不小的政治影响力,像奥克塔维娅和安东尼那样的政治联姻就是基于这样的考虑而出现的。

这种婚姻关系的缔结基础是政治考虑,而不是男女双方的感情。爱情甚至往往不在考虑范围之内。或许正是如此,至少精英阶层的罗马人还比较能够容忍婚外情。他们并不是不知爱情为何物,事实恰恰相反,他们很明白个中三昧。在罗马革命时期就诞生了某些异常热烈的情诗。但是,罗马人一般不会指望着在婚姻中找到这种浪漫的感情。

显然,奥克塔维娅和安东尼之间的联姻最终未能保住屋大维和安东尼的盟友关系。但是,这段婚姻关系的终止是他们二人之间政治关系破裂的结果,而非其原因。虽然乍一看可能有些奇怪,但是安东尼真的在很长的一段时间里同时维持住了他和克莱奥帕特拉组成的王室家庭以及他和奥克塔维娅组成的罗马家庭。

安东尼在信里列举了他觉得有可能和屋大维上过床的女性。她们都不是地位低下的女子(没有人会在意屋大维是否和那种女性发生过婚外性关系),而是屋大维身边最亲密的朋友的妻子。安东尼的意思是,就连屋大维侵害他人家庭的通奸行径都不足为奇,他自己的行为就更不应该受到指责了,因为他的两个性生活对象都是他的合法妻子。

根据罗马的法律,每个罗马男性都只能和一名罗马女子维持一段婚姻关系,组建一个家庭。不过,身为三头之一,安东尼掌握着莫大的权力。克莱奥帕特拉也是地位超然的女王,他们不太可能会拘泥于寻常的法律规定。后三头的权力让他们甚至可以不顾应有的法律流程,随意地杀死任何一位罗马公民。这样的权力肯定也能解决婚姻法的问题,让安东尼如愿以偿地和克莱奥帕特拉拥有合法的婚姻关系。因此,安东尼完全可以有底气地宣称克莱奥帕特拉就是他的合法妻子,他们的孩子也是合乎法律的,他和奥克塔维娅的关系也是如此(就算这是重婚)。

然而,让安东尼得以摆脱传统束缚的这份权力同时也改变了他的家人的政治地位。成家确实是一件从头到尾都很实际的事情。例如,在三头同盟时代的早期,富尔维娅凭着安东尼的权力而享有了罕见的强大政治影响力,因为人们通常认为掌权的男性会听取妻子的意见,并且在此基础上做出决策;妻子则会热心地支持丈夫的事业,同时对外代表着丈夫的意志。在共和国时代,家庭内部的这种决策过程不会导致什么问题,因为罗马官员的所有重要决策都还需要进一步和同僚们展开讨论。但是,在三头同盟时代,安东尼和屋大维的家人就拥有了前所未有的政治地位。

所以,安东尼和克莱奥帕特拉的关系遭到诟病不是因为人们忽然想要把男女私情夸大为严肃的政治问题,而是因为安东尼和克莱奥帕特拉组建家庭本身就是影响深远的政治行为,他们二人的政治未来由此被绑在了一起。至少从理论上来说,双方都从中受益了。对于这段亲密的私人关系,他们并不介意对外声张,他们甚至还在货币上描绘了彼此之间非常相像的形象(如图5)。通过与安东尼联姻,埃及的女王克莱奥帕特拉获得了罗马世界的权力。所以,罗马世界的政治人物势必要对安东尼的这段关系加以审视。屋大维的通奸行为确实如安东尼所说,不是什么严重的问题,因为没有人会相信这些情人能够影响屋大维的决策。但克莱奥帕特拉完全不同。她原本就有着不小的权势,罗马有可能会为她所掌控。当然,要除掉威胁着罗马人的克莱奥帕特拉就意味着要攻击安东尼。

我们在前文强调了爱情并不是这场战争的主要原因,但是从安东尼在信件里所写的愤怒、简短而明确的文字来看,我们也无法完全忽视埃及女王的异域魅力以及他们之间的亲密关系。安东尼最后还提出了这个问题:“和谁一起享受欢愉有什么关系呢?”然而,无论他有多么讨厌人们把他的风流韵事摆上台面来加以严肃的政治讨论,无论这种事情在罗马的历史上有多么罕见,对这个问题的回答都是肯定的—安东尼和谁一起享受欢愉真的是个大问题。而且,安东尼自己也一定是明白这一点的。

亚历山大的封赏仪式

公元前34年,为了庆祝他对帕提亚人取得的胜利,安东尼在亚历山大举办了游行仪式。身为战俘,亚美尼亚国王被戴上了银质的镣铐,然后跟着安东尼的游行队伍在城里走了一趟。安东尼本人则乘着战车,一边前进,一边向民众致意。埃及人民簇拥着一个银色的高台,其上有一个金色的宝座,他们的女王就坐在这里。此次庆功仪式的高潮就是把俘虏和战利品都献给克莱奥帕特拉。[313]接着,安东尼为亚历山大城内的军民举办了一场盛大的宴会,然后对大家发表了讲话,宣布克莱奥帕特拉为统治诸王的女王,克莱奥帕特拉和尤里乌斯·恺撒的儿子恺撒里昂为王中之王。这种波斯风格的头衔让他们至少在名义上成了东方的主人。安东尼和克莱奥帕特拉所生的子女也得到了封赏:托勒密得到了叙利亚;克莱奥帕特拉·塞勒涅拿到了昔兰尼加(Cyrenaica,埃及以西的土地);亚历山大则获得了亚美尼亚以及东至印度的土地。[314]

亚历山大的封赏仪式并不符合罗马的传统。当然,游行以及在市中心献出战俘还算是效仿了传统的凯旋仪式。但是,这次活动的举办地点和克莱奥帕特拉扮演的角色都是全新的设计。这场封赏仪式足以说明公元前34年的罗马政治已经与之前的时代大不相同。此外,这种公开的仪式是执政者在人民面前塑造政权形象的一种手段,并且因此具备了意识形态方面的意义:执政者可以通过这种仪式向特定的受众宣传某种世界观。显然,安东尼以及克莱奥帕特拉都不可能在民众面前把自己包装成之前统治埃及的各位将军或者法老,埃及女王和罗马三头之一组成的王室是史无前例的,无论罗马人还是埃及人都能明显地感受到这一点。因此,安东尼和克莱奥帕特拉不得不去探寻可用的政治象征,然后对其加以改造,进而发明出新的仪式。例如,他们以神话为基础,把克莱奥帕特拉塑造为伊西丝(Isis),把安东尼描绘成奥西里斯(Osiris)或狄俄尼索斯。[315]

不过,这种尝试具有一定的政治风险。象征其实是在给人们展示一种看待现实的方式,同时也会让人们有机会去展开独立的思考。如果权力的运行方式已经不能顺畅地融入既有的政治文化,那么执政者就需要对政治文化进行创新。他们可以在公开的仪式上向民众展示新的象征,获取民众的认可,从而成功地树立新的政治文化。但是,对于执政者提出的新的解释世界的方式,民众或许会表示怀疑乃至否定。

一般说来,罗马的将军取胜以后会向元老院提议在战败者的土地上建立起殖民地。然而,这一次,安东尼不仅没有采用罗马人的传统做法,还公然任命自己的家人去统治这些土地。这是足以让罗马人深感震惊的新颖事物(虽然熟悉希腊王族传统者大概立刻就能明白安东尼在做什么打算)。而且,这些封赏的意义也不明确,克莱奥帕特拉和她的儿女们看起来不会去实际地统治这些地方。克莱奥帕特拉本人的角色显然是很被动的。在封赏仪式上发表讲话的是安东尼,预先做出这些安排的也是安东尼,这次封赏事件体现的完全是安东尼的权力。亚历山大的封赏仪式是一场政治秀。安东尼等人采用了罗马的凯旋仪式里的一部分内容,但也吸收了埃及和近东王权的一部分传统,因为这种形式更能引起当地居民的文化共鸣。包括安东尼和最为著名的亚历山大大帝在内,东方的征服者们往往会以当地居民认可的传统方式来宣传自己的王权。安东尼这次的宣传受众既有罗马人也有东方诸国的人民。这些群体有着截然不同的文化背景,但是他们之间的交流已经有了比较长的历史。所以,无论哪一方都不会对安东尼的封赏仪式感到陌生,他们都能够明白其寓意。

安东尼当然不是法老,也没有把自己宣传为法老。他也不同于托勒密王朝的希腊国王,克莱奥帕特拉没有尝试着把安东尼塑造为埃及的君主,他的形象没有和克莱奥帕特拉一起出现在埃及的神庙上。克莱奥帕特拉更倾向于宣传自己和恺撒里昂一同出现的样子,安东尼则仍然是外来的罗马人。不过,这次的封赏仪式毕竟体现了安东尼享有永久的统治权,克莱奥帕特拉及其子女在仪式上获得的头衔都彰显着安东尼的权威。封赏仪式还宣布安东尼和克莱奥帕特拉的子女后代都可以统治东方的土地,这种声明全然违背了共和国的旧制度。不过安东尼却仍非国王。他既没有给自己加冕,也没有采用新的头衔。[316]

总之,安东尼改造了既有的宣传权力的方式,从而将其化为己用。无独有偶,屋大维也做了类似的事情。如前文所述,屋大维击败庞培以后在罗马城广场上立起了自己的金色雕像。由于恺撒已经被尊奉为神明,屋大维还命人铸造了称其为“神子”的货币。我们很难说他们二人此刻的所作所为有很大的区别,安东尼涉嫌效仿希腊化时代的东方君主;屋大维则自比于神明,在罗马城内竖立起自己的金色雕像。如果在罗马的共和制度坚如磐石的年代,他们二人的举动显然都会遭受猛烈的抨击。安东尼和屋大维都是在以全新的方式宣传自己的权力,试图让自己在理论上也摆脱元老和罗马传统的束缚。他们的做法无疑都反映了公元前1世纪晚期罗马政治的新形势。不过,安东尼在亚历山大举办的封赏仪式还反映了他和屋大维之间的关键差异。这场仪式显然说明了安东尼和克莱奥帕特拉已经在私人和政治层面上合二为一,他们的权力中心则位于亚历山大。身处东方的安东尼仍然可以在一定程度上维持罗马人对他的支持,并且对这些支持者予以奖赏。然而,在当时,埃及的亚历山大距离罗马有两三个月的路程,克莱奥帕特拉女王和意大利政界的联系也不是很密切,这样一个以亚历山大为中心的私人关系网络难免不能及时而广泛地照顾到位于意大利的支持者。而如果关系网络不能满足其成员的需要,这些人或许就会择木而栖。更何况,屋大维的关系网仍然立足于意大利。

安东尼的个人及政治命运都已经和克莱奥帕特拉维系在一起。她为安东尼生下了三个孩子,就算安东尼在宣传自己的权力之时未曾把克莱奥帕特拉当作核心人物,他也不可能简简单单地把克莱奥帕特拉给抛在一边。况且,没有任何证据能够证明安东尼觉得自己有必要做这种事情。此时,这段婚姻给他带来了一位美丽动人的女王和无与伦比的财富与地位。克莱奥帕特拉是安东尼政权里的关键角色。

三头同盟的终结

时至公元前33年,安东尼和屋大维的关系已经相当紧张了。具体的细节我们已经无法获悉,但他们显然在很多问题上都发生了争执。安东尼要求屋大维派出援军;屋大维则要求分享一半的战利品,同时还抱怨安东尼擅自举行亚历山大的封赏仪式。安东尼接着质问了屋大维取消李必达的权位,并且入主西西里和阿非利加的事情。虽然看起来就很难取信于人,但屋大维还是对安东尼声称他原本打算饶恕塞克斯图斯·庞培,进而指责安东尼杀死庞培。他也对克莱奥帕特拉和恺撒里昂表示了不满,暗指安东尼在亚美尼亚的战争有违道德。[317]他们基本上都在围绕着旧闻展开争论。

双方的交流大体上处于保密状态。当然,某些贵族也许曾经不慎泄露过机密。在此期间,信使们来来往往,为这两个人传递着令彼此都埋怨不已的消息。或许,罗马的贵族们已经在不停地讨论着安东尼和屋大维的关系。但是,双方依然没有大打出手,他们还没有遇到什么不得不立刻解决的法律问题或政治问题。虽然后三头的权力即将在公元前33年末抵达法定期限,但这两个人看起来都不会心甘情愿地回归“正常”的政治地位。

公元前32年,安东尼的密友格奈乌斯·多米提乌斯和盖乌斯·索西乌斯成了执政官。在1月1日的传统就职典礼上,多米提乌斯借机赞颂了安东尼,并且对屋大维加以批评。屋大维本人并不在现场,但他很快就做出了回应。他召集了元老院会议,然后带兵与会。两位执政官只得静静地听着屋大维驳斥他们所做的批评。接着,会议就结束了。

于是,这两位执政官连夜逃离了罗马。[318]三头同盟的正式终结和屋大维此次动用军队的行为或许的确让很多人深感不安,担心之后还会有更大规模的暴力冲突。但就算如此,战争也未必不可避免,此时还远远没有真正达到足以引发战争事端的地步。

不过,在某些人看来,站队的时机已到。一部分人离开了罗马去投奔安东尼,另一部分人则从亚历山大来到了屋大维这边。抵达罗马的这些人充分表达了他们对克莱奥帕特拉的不满,还暗指安东尼想要把罗马献给克莱奥帕特拉。除此以外,或许还有很多人期待着有同时忠于双方的人能够从中斡旋,再一次化解两边的矛盾。然而,逃至罗马的变节者让屋大维得知了安东尼的遗嘱就被保管在维斯塔贞女(Vestal Virgins)手中(罗马人常常把文件和财产存放在神庙里,因为他们认为这样就可以得到神明的保护)。于是,屋大维全然不顾法律的约束,强行取走了安东尼的遗嘱,然后对元老们宣读了其中的内容。这份遗嘱里有一项看似无关紧要、实则非常致命的条款:安东尼希望自己死后能够和他的女王一起被葬在亚历山大。为此,元老们断然披上了战袍,表决同意对克莱奥帕特拉开战。[319]

屋大维提出的宣战理由是克莱奥帕特拉正在窃取罗马的最高权力。安东尼的遗嘱可以算作一种证据:安东尼已经想要抛弃罗马,彻底投入克莱奥帕特拉的怀抱。而且,他们之间的婚姻也可以视作克莱奥帕特拉对罗马东部领土主权的篡夺。由此,罗马和亚历山大之间有了裂痕。罗马城本该是国家的唯一中心,享有最高的权威。但现在,这种独一无二的地位受到了威胁。如果有安东尼相助,克莱奥帕特拉有可能让所有的罗马人都臣服于她。而亚历山大的封赏仪式已经证明了安东尼确实打算和克莱奥帕特拉建立长久的关系,甚至还要把权力传承给子女后代,开创一个新的王朝。

面对这种潜在的威胁,屋大维要求意大利的城镇社区立誓以他为领导(dux),随他一同作战。他由此宣布整个意大利都在支持他。[320]屋大维让意大利在政治和文化上取得了某种统一性,从而成为支撑起一个大帝国的根基。在古老的共和国时代,罗马城以外的地方都不重要,只有罗马城的政治生活才值得重视。然而,屋大维造就了新的权力版图。老兵们定居的城镇和殖民地、与屋大维达成一致的社区都被包括在内。意大利成了国家的中心。屋大维对意大利的重视说明了,身为三头之一,他的私人关系网,亦即他的势力范围已经远远超出了罗马城。此外,强调意大利的支持有助于表明他现在和东方的异国埃及站在对立面上。

当然了,这场战争其实是安东尼和屋大维的战争。意大利境内还有不少安东尼的支持者。我们可以想见,很多倾向于支持安东尼的人或许还留在意大利,和安东尼一起历经多场战役的老兵们可能也对他留有不错的印象,政治宣传往往有别于现实。但是安东尼毕竟不能召集起留在罗马和意大利的支持者,这些人无法给安东尼提供多少实质性的帮助。因此,屋大维成功地实现了他的政治宣传:意大利的资源会被他调集起来对抗安东尼及其埃及盟友。

阿克提翁之战

在两位执政官逃跑以后,安东尼和屋大维先做出了一些谈判的姿态,然后就在公元前32年末展开了军事行动。不过,到公元前31年春,双方才准备就绪。屋大维的海陆军队集结于意大利南部的布伦迪西翁,安东尼则率军来到了位于其势力范围最西端的希腊。安东尼让舰队停驻在阿克提翁,又往伯罗奔尼撒(Peloponnese)派出了驻军。

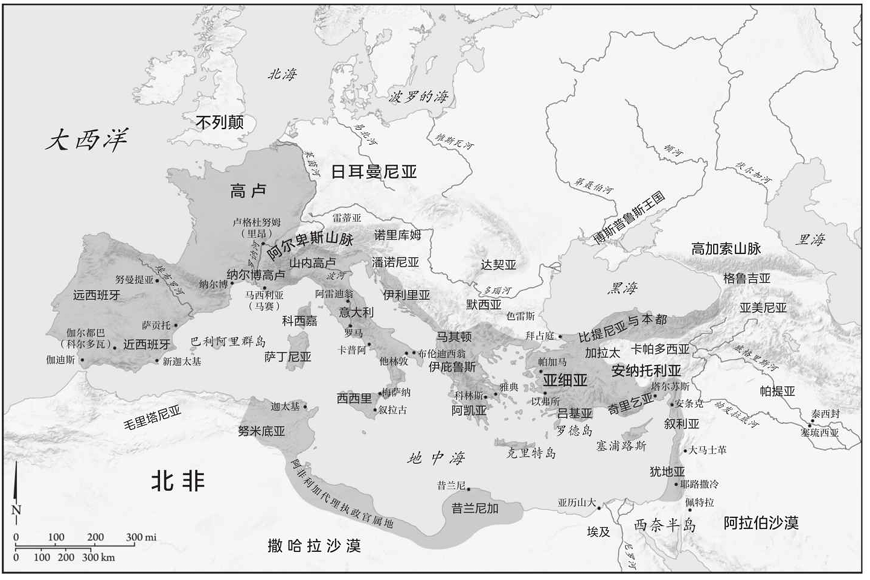

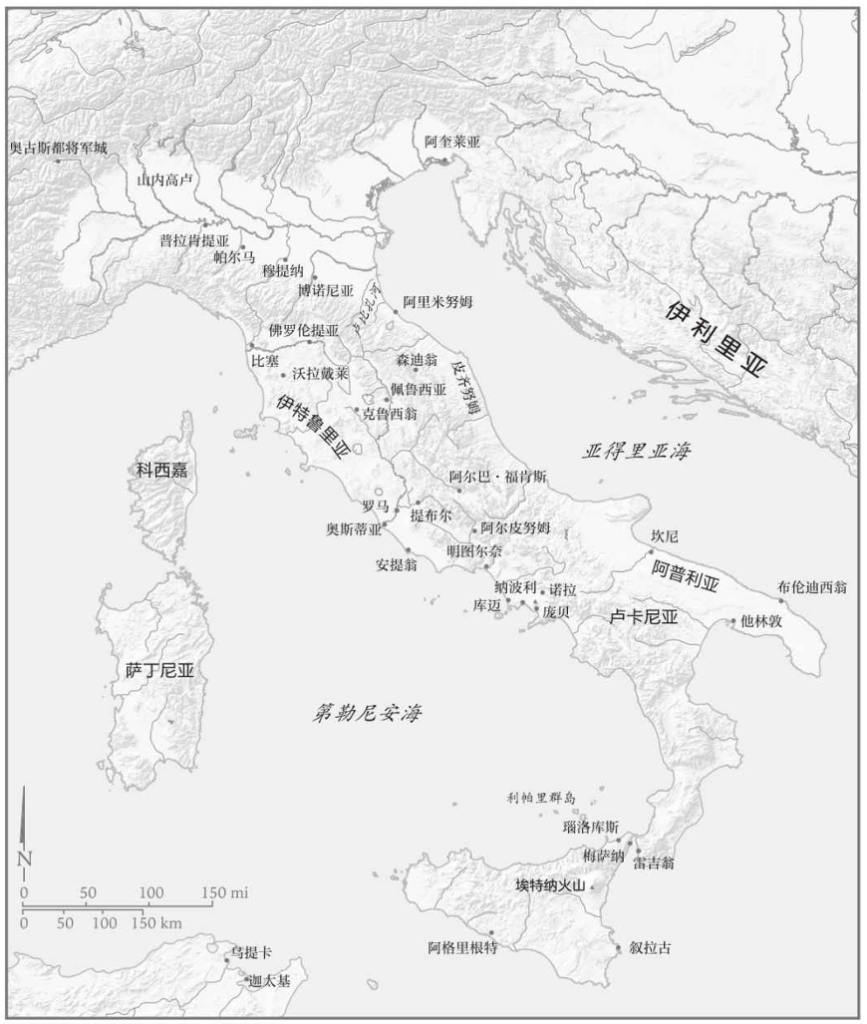

希腊西部的安布拉奇亚湾(Ambracian Gulf)深入内陆约五十公里(请参考地图5),它和伊奥尼亚海以一条窄短的水道相连。阿克提翁就坐落于这个入口的南岸,它是希腊西海岸的少数良港之一。虽然阿克提翁的陆路交通受到了崎岖的山地的阻碍,但它和意大利以及伯罗奔尼撒的海路交通是很便捷的。因此,驻扎在这里的舰队有着不小的行动空间。安东尼的意图是直接威胁亚得里亚海沿岸归属于屋大维的城镇或部队。其实,安东尼的这个策略在佩鲁西亚战争以后就曾经实施过一次。当时的他以希腊西部为基地,利用己方的海军优势主动向布伦迪西翁发起了进攻。然而,公元前31年的屋大维已经今非昔比,他的舰队现在有能力和安东尼竞争海洋的控制权。所以,这一次,阿克提翁不再是安东尼进攻意大利的跳板,他甚至会发现自己已经被困在了安布拉奇亚湾。

阿格里帕决定先发制人。他绕过了阿克提翁,直接攻下伯罗奔尼撒半岛西南部的米托涅(Methone),进而对安东尼的领地发起了一连串的劫掠行动,骚扰着安东尼的部队。屋大维则横渡亚得里亚海,大概登陆于今天的阿尔巴尼亚(Albania)境内某处,准备进攻安东尼部署在阿克提翁的舰队。[321]接着,就在安东尼赶来与其大部队会合之时,屋大维经由帕克索斯岛(Paxos)南下至安布拉奇亚湾北岸,在阿克提翁以北数公里处安营扎寨。屋大维试图和安东尼的海军或陆军交战,但是安东尼在海湾入口的两岸都建好了防御工事。如果强攻,屋大维势必会面临不小的风险。此时,安东尼还有部队大概在沿着曲折的陆路赶赴阿克提翁,他不愿在这种时候应战。于是,双方都开始一边积攒军力,一边等候良机。[322]

不过,阿格里帕向来热衷于主动出击。他趁此时机在伯罗奔尼撒大肆劫掠,还在琉卡斯(Leucas)[1]建立了据点,让屋大维控制了阿克提翁以南的交通要道。至此,屋大维已经从海上包围了安东尼。而且,阿格里帕的舰队现在掌控着科林斯湾(Gulf of Corinth),可以直接威胁安东尼的陆路交通,[323]安东尼的后勤因此受到了严重的影响。在海路被截断的情况下,他的补给队只能沿着曲折又漫长的陆路前进。

希腊的这个地区在夏天的时候有可能会变得异常酷热,当地的气候还很潮湿。位于低地的安东尼大概尤感不适。而北边的屋大维所部驻扎在地势较高的地方,每天下午都能享受到清爽的海风。现代的安布拉奇亚湾南岸分布着很多沼泽。而在古典时代,这里疟疾频发。在当地获取清洁的饮用水是一个大问题。而且,安布拉奇亚湾的水体流速较慢,几乎不可能被用来处理营地里产生的垃圾。因为补给线受到了骚扰,安东尼帐下还有越来越多的人饱受饥饿之苦。总而言之,安东尼的部队在酷暑时节承受着食物、燃料、饮用水的匮乏,居住在肮脏又潮湿的环境里。没过多久,疾病就来袭了。安东尼已经受困,他的军队危在旦夕,他不能再坐以待毙了。

地图5:阿克提翁及其周边地区

于是,安东尼来到北岸向屋大维发起了挑战。可形势已然逆转,屋大维现在更愿意坐等安东尼的军队不攻自破,他肯定已经知道安东尼此刻急需做出决断。摆在安东尼面前的大概有三条路:首先,他可以尝试着与屋大维的陆军展开决战,将其一举击溃;其次,他可以让海军尝试突围;最后,他的选项就只剩下先烧毁己方的全部船只(同时也是安东尼的主要军备),然后带着他饥病交加的部队,顶着敌方海陆军队的骚扰,沿着崎岖的山路撤退。如果要展开陆军决战,安东尼就必须来到海湾北岸靠近今天的普雷韦扎(Preveza)的那块平原上。因此,他率军出动,在屋大维的据点前摆好了阵势。双方发生了一些小规模的冲突,但屋大维还不想与他展开陆军决战。

这一定是屋大维和阿格里帕兼权熟计的结果,他们想要让海战来决定此次战役的胜负。屋大维拒绝出营应战的态度让安东尼也明白了这一点。此前,屋大维和阿格里帕以海战击败了塞克斯图斯·庞培,而安东尼还没有海战的经历。看起来,他们二人大概觉得己方的海军能够稳占上风。

既然屋大维无意展开陆战,安东尼便撤走了海湾北岸的部队。这一举动充分说明了此时的主动权掌握在屋大维手中。有史料称,安东尼此时召开了一场作战会议。这则记载又一次突出了克莱奥帕特拉的角色,声称她才是最高决策者,而她已经被各种各样的凶兆给吓坏了,认为他们现在应当竭尽全力地撤退。[324]这种说法不足为信。到了这个时候,安东尼等人其实已经没有什么可以商议的了,他们的主要军备就是这支舰队。更何况,他们的陆军和海军的关系堪称休戚与共。[325]假如安东尼能够在海战中大败屋大维和阿格里帕,他就能确保对希腊的掌控权,进而再次以希腊为跳板进攻意大利,乃至彻底扭转局势,让屋大维也尝到失去补给的滋味。就算安东尼仅仅是让自己的舰队大致完好地撤离了此地,他也可以有重整旗鼓、来日再战的机会。比如,他可以把舰队派去竞争亚得里亚海的控制权,也可以把这一批陆军撤走,还可以从东方的领地召来更多的后援军。总而言之,无论是取胜还是打平,安东尼和克莱奥帕特拉都至少能够摆脱阿克提翁的困境,改善己方主力部队的作战条件,从而继续进行这场战争。在安东尼的部队离开了海湾北岸以后,双方都立刻开始着手准备海上决战。

安东尼和克莱奥帕特拉花了几天的时间来等待合适的风况和海况。公元前31年9月2日,他们率领舰队离开了阿克提翁的水道。屋大维和阿格里帕正在等待着他们。安东尼的舰队就在海湾入口之外。他们排好了紧密的阵形,组成了一堵木质高墙,以免敌方战舰穿插进来。双方都在等待,没有人轻举妄动。屋大维也许期待着安东尼的舰队会朝南方的开阔海域逃跑,以致露出侧翼并且加大船只的间距,但安东尼没有犯下这种错误。于是,屋大维下令延长阵线,同时派出小股部队去包抄敌舰。面对着遭到多方夹击的威胁,安东尼选择了利用敌方调整阵形的时机发起进攻。[326]

安东尼的舰队有着更大、更重的船只,它们不仅火力更强,而且具备高度优势。屋大维的战船更小、更轻,因而更加灵活;但这种船只如果被困住了就很有可能被敌方强行攻下。在很长的一段时间里,这场海战都没有明显的优劣之分,两边都无法给对方造成严重的损伤。但是,因为比较笨重,安东尼的舰队基本不可能逃离敌方的攻击。

传说,在战况依然僵持不下的时候,克莱奥帕特拉受到了惊吓,连忙逃跑,安东尼也随她而去。[327]也就是说,决定了战局走向的是脆弱的克莱奥帕特拉和过于信赖、爱护克莱奥帕特拉的安东尼。但这个传说很可能是不实的。安东尼和克莱奥帕特拉此战的目标是至少突围离开海湾,进入开阔的外海,从而完成撤退,以求在日后另寻良机。在中午以后、傍晚之前,风力渐渐增强了。安东尼和克莱奥帕特拉一定在密切地关注着风况,想要借助风势,一口气摆脱敌舰。但是,挑选扬帆的时机并不容易。就算风力和风向都合适,安东尼的船只也还需要有充足的时间才能拉开距离,真正地逃出生天。[328]而且,所有战舰都必须整齐划一地开始行动,因为放弃作战、进行转向或许会导致阵形破裂或者露出侧翼。那样一来,屋大维就很有可能找到可乘之机。而只要安东尼的大多数舰船未能逃离,屋大维就算是取得了胜利。也许,安东尼甚至应该安排一定量的殿后部队来掩护大部队扬帆撤退。

时机一到,克莱奥帕特拉便下令撤离。安东尼本人的行动也很顺利。但是,他的绝大部分战舰都没能突围。不过,尽管我们可以认为胜负已分,但是战斗还没有完全结束,安东尼的舰队还在作战。这本身就足以表明安东尼和克莱奥帕特拉的撤离是早就安排好的。克莱奥帕特拉没有像传说里描写的那样因恐惧而慌乱地逃跑;安东尼也没有被爱情冲昏头脑,不顾一切地追随爱人而去。就算克莱奥帕特拉和安东尼已经相继离去,剩下的舰队也不见得就毫无希望,他们仍然在努力地寻找突围的机会。

安东尼方舰队的阵形依然比较完整,屋大维等人无法将其打乱。但随着白天渐渐过去,他们逃生的希望越来越小。在临近夜晚的某个时间点上,风势会完全消失,然后,他们就会彻底失去打破僵局的机会。此时,屋大维的进攻已经使得安东尼方舰队的阵形变得更加紧密,也更加难以逃跑。于是,屋大维开始派人去准备火攻。

屋大维下令向敌舰射出了装着木炭和沥青的罐子。在火箭的配合下,安东尼的木制舰队陷入了火海。船员们赶紧开始灭火。他们先把自己的饮用水泼到了沥青上,然后开始用海水。安东尼的部下或许不太熟悉这种海战秘技。沥青是不溶于水的,对着燃烧的沥青泼水只会让火势进一步扩散开来。于是,他们开始击打火焰,甚至试图用尸体来扑灭火势。但这也无济于事,火焰不停地扩散开来,很快就彻底失去了控制。在此期间,屋大维的海军就在旁边看着安东尼的舰队化为灰烬。[329]战斗结束了。

安东尼的陆军正在撤离,但他们几乎不可能顺利逃生。他们的其中一条可选路线是经由陡峭的山路朝着马其顿前进。或者,他们可以顶着阿格里帕的海军骚扰的压力,沿着科林斯湾撤退。无论哪条路都充满了艰难险阻,而且很漫长,就算他们能够挡住屋大维等人的进攻,补给也是一个难以解决的大问题。对于一支已经饱受疾病侵袭的部队而言,这是不可能完成的任务。既然逃生几乎无望,那么安东尼的军团自然就选择了投降。[330]

穷途末路的爱情

安东尼和克莱奥帕特拉也许在逃跑之时曾经短暂地停留了一阵子,以便察看己方陆军是否能够撤离。但在得知投降的消息以后,他们便朝着埃及航去。对于地位较高的俘虏,屋大维在斟酌以后或杀或饶。接着,他把一部分军队调回了意大利,表现出充足的信心。屋大维在自己的军帐所在处建造了一根巨大的胜利纪念柱,饰以安东尼战船的船首,并且将其献给阿波罗。后来,这里更是有了一座纪念此次胜利的城市,即尼科波利斯(Nicopolis),也就是胜利之城。它坐落在山坡上,注视着屋大维曾经取胜的那片海域。屋大维还下令要求每五年就在此举办运动赛事,让罗马世界每五年都来庆祝屋大维的胜利,同时铭记安东尼和克莱奥帕特拉的命运。屋大维把阿克提翁之战渲染为决定了整个罗马世界前途的重大事件。

此战以后,罗马世界里的几乎所有人都看清了局势。仅从理论上来说,安东尼还掌握着非常充足的资源。他有很多军团还驻留在叙利亚和阿非利加。有不少附庸国王还理应对他效忠,派兵前来支援他的后续行动。克莱奥帕特拉也拥有大量的资金和战船,虽然她的舰队位于红海。然而,阿克提翁之战干系重大。凡知晓战况者都不难看出这场战争其实已经结束,没有必要白白葬送自己的性命。安东尼已败,屋大维即将获胜。安东尼的盟友纷纷叛变,东方的各位国王以及由安东尼委派至各个地方省份的罗马总督都开始向屋大维求和。最后,只有埃及还处在安东尼和克莱奥帕特拉的掌控之内。

他们二人肯定也能看清现在的战略形势。此时的屋大维可以调用巨量的资源,他们根本无法望其项背。于是,他们返回了亚历山大,等候征服者的到来。他们派出了使者,尝试以外交渠道解决问题,却并没有取得什么成果。安东尼和克莱奥帕特拉已经失去了谈判的资格,屋大维没有理由跟他们讲和。就在这个时候,屋大维遇到了一场兵变,已经退役的士兵又在要求得到奖赏。屋大维只好回到布伦迪西翁去安抚哗变的士兵。不过,这只是推迟了结局的到来而已。安东尼和克莱奥帕特拉由此得到了冬春两季的喘息时间。他们竭力召集部队,准备抵抗到底。此外,为了逃避大难临头的压力,他们在冬天花了大量的时间在自己最为擅长的事情上:挥金如土,举办奢华的晚宴,展示自己的富有。他们还特意和朋友们一起组建了一个名为“共赴黄泉”的享宴团体。[331]公元前30年,屋大维的军队即将抵达埃及。

埃及的沙漠让这个国家有了抵御外敌的天堑,但是安东尼此时需要同时抵挡来自东方和西方的入侵(请参考地图6)。之前,安东尼曾经任命一位骑士科涅利乌斯·伽卢斯去负责指挥西边的昔兰尼加的军队。安东尼也许认为地位低一级的贵族更有可能为自己尽忠。然而,伽卢斯还是选择了叛变,甚至还率军向埃及发起了进攻。至于屋大维的部队,他们很可能从东边的佩鲁西翁(Pelusium)而来。安东尼本想去东方应敌,但现在却必须到西边处理叛军,因为伽卢斯已经攻下了一座边境城市帕莱托尼翁(Paraetonium)。安东尼一度认为自己或许可以说服士兵们回心转意。然而,他失败了。接着,安东尼在帕莱托尼翁城外驻扎了没多久就得知了屋大维终于抵达了埃及。[332]

于是,安东尼转而率军向屋大维进发。他的骑兵在亚历山大的外围地带遇到了屋大维的部队并且将其击退。然后,安东尼派出了步兵乘胜追击,但他没能给屋大维造成严重的伤亡。不过,他还是深感振奋,声称自己就算是在此刻这样的绝境之中也有扭转乾坤的能力。但是,四面八方都有无数敌军袭来。安东尼不可能真的相信自己有机会逃脱死亡的命运。第二天,他命令海军离开亚历山大。当这支舰队遇到屋大维的优势海军以后,他们举起了船桨,选择了投降。陆军的情况也不容乐观,安东尼带着步兵投身于战场,却遭遇了失败,被迫退回城内。[333]

地图6:埃及

克莱奥帕特拉获悉了己方部队叛变和安东尼被击败的事情,她知道结局将至。此前,她已命人把一部分财物运到了自己的陵墓里。现在,她前去陵墓之内,关上了大门。埃及的女王已经准备好迎接死亡。

很快,全城的人都得知了这个消息。安东尼也不例外。他得到的情报大概是克莱奥帕特拉已死。因此,安东尼拔剑自刎。但就在他倒在地上流血不止的时候,又有消息传来,称克莱奥帕特拉还活着。于是,安东尼命人将他抬去和女王相会。他穿过了亚历山大的街道,抵达了陵墓外,然后被送到窗口处,在克莱奥帕特拉及其仆人的帮助下进入了陵墓。终于,安东尼回到了克莱奥帕特拉的身边,在她的臂弯中离开了人间。[334]

克莱奥帕特拉并没有立刻随安东尼而去。屋大维的使者进入了亚历山大,他们看起来愿意开启谈判,甚至有可能饶恕克莱奥帕特拉及其子女的性命(最后除了恺撒里昂以外,他们确实都没有被杀)。这些使者进入了陵墓,抓住了克莱奥帕特拉,夺下了她打算用以自裁的匕首。然后,克莱奥帕特拉被带回了王宫。屋大维的人小心地看守着她,等待屋大维本人前来。[335]

关于克莱奥帕特拉和屋大维的会面,有一个虚构的故事流传至今。据说,长于诱惑男性的克莱奥帕特拉试图对年轻的征服者屋大维故技重施。但屋大维充满了男子气概,毅然拒绝了。他不是恺撒,更不是安东尼。他有着更加强大、更符合罗马道德标准的自制力。但是,我们基本没有理由相信克莱奥帕特拉会忽然放弃自杀的意图。[336]她先为安东尼举行了丧礼,然后就回到了王宫。接着,她准备了一顿丰盛的宴席,穿上了女王的华服,向屋大维送出了一封信。及至屋大维收到这封信件之时,克莱奥帕特拉已然死去。屋大维破门而入,只见克莱奥帕特拉的两名侍女正在为她们的女王戴上冠冕。这是精心筹划的自杀。克莱奥帕特拉有意让罗马人发现即使是死她也秉持庄严的王家风范。克莱奥帕特拉的侍女也和她一样中了毒。

这位埃及女王的具体自杀手段至今仍然是一个谜,有史料暗示克莱奥帕特拉用了一枚带毒的别针。但在传说当中,她更有可能死于毒蛇之口。一条或数条毒蛇被藏在一堆无花果或是水罐里带进了王宫。伊西丝本就是和蛇有关的女神,对于最后的法老暨伊西丝的化身而言,这种死法或许确实特别合适。[337]最后,克莱奥帕特拉被葬在安东尼旁边。由此,安东尼的愿望实现了,他真的和他的女王一起被葬在了亚历山大。

帝国及其敌人

安东尼和克莱奥帕特拉之死既意味着人们即将开始进一步虚构相关的传说故事,也标志着君主制的帝国时代即将到来。然而,阿克提翁之战其实不是决定了罗马世界走向何方的事件,他们二人的自杀就更没有改变历史的面貌了。即使阿克提翁之战的胜者是安东尼,罗马世界看起来也不太可能走上截然不同的道路。安东尼和屋大维所在的关系网络已经掌控了罗马。这场战争解决了位于亚历山大的安东尼和克莱奥帕特拉以及位于罗马的屋大维形成的两个难分高下的权力中枢问题。就算安东尼取得了胜利,他也几乎不可能损害这张关系网对罗马的统治权。如果说罗马必定要从共和国走向帝国,那么从安东尼的所作所为来看,我们完全可以说他其实比屋大维更加激进、更加急切。当然,安东尼也许会让亚历山大在他的帝国里扮演更加重要的角色,他和克莱奥帕特拉所生的子女大概也会构成帝国的第一个王朝。但是,罗马和意大利终究是帝国的地理中心。无论安东尼对克莱奥帕特拉的爱意有多么深刻,他应该还是会在罗马度过不少的岁月。

因此,安东尼和克莱奥帕特拉之死的意义恰恰就在于人们所虚构的那些故事。[338]安东尼和克莱奥帕特拉正好可以被重塑为帝国的敌人。而且,他们二人与帝国的对立关系要体现于他们的人生经历和生活方式当中。于是,他们就成了为爱痴狂、沉迷于色欲、女性化、东方化、必定灭亡的人物,这些属性也被渲染为同帝国与罗马相对立的属性。随着罗马皇帝对政治和社会的掌控力越来越强也越来越全面,安东尼和克莱奥帕特拉便逐渐代表了帝国在意识形态领域内的敌人。无论真相究竟如何,安东尼和克莱奥帕特拉的历史意义就是让他们的敌人成为与爱为敌之人,又让爱侣成了帝国的敌人。

[1]今名莱夫卡扎(Lefkáda)。—译者注

[309] 请参考Sally-Ann Ashton, Cleopatra and Egypt (Malden, MA: Blackwell, 2008), xi,她直言自己未能成功地找到“真正的”克莱奥帕特拉。彻底剥除相关文本中的虚构成分既是不可能的,也没有特别大的意义。不过,还是有很多人勇敢地朝着这个方向做出了努力,比如,Michel Chauveau, Cleopatra: Beyond the Myth (Ithaca, NY and London: Cornell University Press, 2002); Joann Fletcher, Cleopatra the Great: The Woman Behind the Legend (London: HarperCollins, 2009); Stacy Schiff, Cleopatra: A Life (New York: Little, Brown, 2010),她在作品的第7页写下了这样的话:“在探寻克莱奥帕特拉的本来面目之时,我们需要一边排除那些许多人信以为真的假象和老生常谈的宣传话语,一边抢救那些所剩不多的真相。”相比之下更为实在一些的作品是Stanley M. Burstein, The Reign of Cleopatra (Westport, CT: Greenwood, 2004)。关于这些谣言和奥古斯都之间的关系,请参考Robert A. Gurval, Actium and Augustus: The Politics and Emotions of Civil War (Ann Arbor: University of Michigan Press, 1995)。Diana E. E. Kleiner, Cleopatra and Rome (Cambridge, MA: Belknap Press of Harvard University Press, 2005),她认为克莱奥帕特拉就好像是罗马世界的“明星”,同时代的人会对其生活产生无限的遐想。关于克莱奥帕特拉的形象,请参考Peter Higgs and Susan Walker, Cleopatra of Egypt: From History to Myth (London: British Museum, 2001)。

[310] Dio, 49.34.1.

[311] Suetonius, Divus Augustus, 69,他只引用了一部分。

[312] 请参考Riet van Bremen, The Limits of Participation: Women and Civic Life in the Greek East in the Hellenistic and Roman Periods (Amsterdam: Gieben, 1996),此书指出,男性和女性都会以家庭为单位参与公共生活。

[313] Dio, 49.40.

[314] Dio, 49.41; Plutarch, Life of Antony, 54.

[315] Plutarch, Life of Antony, 24和54提及克莱奥帕特拉被宣传为伊西丝。

[316] 请参考Rolf Strootman, “Queen of Kings: Cleopatra VII and the Donations of Alexandria”, Kingdoms and Principalities in the Near East, 139-157, edited by Ted Kaizer and Maria Facella (Stuttgart: Steiner, 2010)。他对封赏仪式有着相似的解读。

[317] Dio, 50.1.

[318] Dio, 50.2.

[319] Dio, 50.4.

[320] Res Gestae, 25.

[321] Dio, 50.11.

[322] Dio, 50.12-13.

[323] Dio, 50.13.

[324] Dio, 50.15.

[325] John C. Carter, The Battle of Actium: The Rise and Triumph of Augustus Caesar (London: Hamish Hamilton, 1970), 213-214,他得出了同样的结论。

[326] Dio, 50.31.

[327] Plutarch, Life of Antony, 66; Dio, 50.31-33.

[328] Carter, The Battle of Actium, 217-223对于这场战斗中风况所起的作用展开了推测。

[329] Dio, 50.34.

[330] Dio, 51.1.

[331] Plutarch, Life of Antony, 71.

[332] Dio, 51.9.

[333] Dio, 51.10; Plutarch, Life of Antony, 76.

[334] Dio, 51.10; Plutarch, Life of Antony, 76-77.

[335] Dio, 51.11; Plutarch, Life of Antony, 78-79.

[336] Dio, 51.12.

[337] Dio, 51.14; Plutarch, Life of Antony, 86.

[338] 关于这些故事,请参考Lucy Hughes-Hallett, Cleopatra: Queen, Lover, Legend (London: Bloomsbury, 1990)。

第十三章 奥古斯都的诞生

阿克提翁之战并不意味着安东尼和屋大维之间的战争已经结束。但是随着事态的发展,这场战斗最终演变成了安东尼阵营的全面崩溃:所有人都明白了谁会败亡,问题只是那一幕要怎样上演而已。对于屋大维而言,他还有别的事情需要处理。这场战争把恺撒派势力撕成了两半,安东尼的密友和部下应当受到怎样的处置呢?而且,屋大维极少在东方建功立业,东方人几乎不知道屋大维是何等人物。

罗马的西部领土大多被划分为各个省份,由中央派出的总督进行统治。东方领土的安排则更加复杂,今天的希腊以东的那些土地很多都是在比较晚的时候才接受了罗马人的统治。在种种原因的影响下,罗马人不愿意把这些新领土划分为直属于中央的省份,反而更热衷于扶植当地的附庸国王,让他们替罗马中央执掌权威。这种做法尤其盛行于公元前1世纪60年代庞培的征服以后。一般说来,这些君主都很依赖罗马的支持。为此,他们需要出兵协助罗马展开军事行动,还要用金钱来体现他们的忠诚,虽然这些资金往往会被罗马的将领克扣一部分。

对罗马贵族来说,这样一套让各地君主依附于罗马人的地方统治体系是有一些好处的。例如,他们可以和这些君主建立良好的私交(安东尼和克莱奥帕特拉的私交就非常深)。这种私人关系可以大大提升他们的名望,因为和国王们结伴而行显然可以体现他们的突出地位。此外,附庸国王的存在还给贵族们提供了赢利的空间。这些君主会对罗马“朋友”慷慨解囊,以便让他们出手帮助自己维护利益。但是,当罗马的政治精英们发生内斗之时,这套体系的缺点就暴露出来了。这些远居各地的君主必须小心翼翼地考虑自己应该加入哪边的阵营。然而,无论考虑得多么仔细,他们还是很有可能站到失败者那边去,这是所有面对内战之人都难以避免的厄运。具体而言,在安东尼和屋大维相争的这次内战当中,东方的诸位国王其实没有选择的余地。在过去十年的时间里,他们一直都处于安东尼的势力范围内。安东尼肯定已经除掉了所有不忠于他的君主,中立则几乎是不可能的。然而,和屋大维作对是很有风险的事情,他素有绝不手下留情的名声,这些国王难免担心屋大维取胜之后杀人灭国。

除了附庸国王以外,罗马东部领土的政治版图当中还有不少希腊城邦(póleis,单数为pólis)的存在。希腊城邦内部的政治结构比较独特:首先,官员们会组成统治城邦的委员会,但官员的任期一般不长;其次是议会,其成员通常是地主贵族;最后是公民大会。简而言之,希腊城邦和罗马的政体差异主要在于官员的权力。希腊官员的权力较小,更依赖于贵族议会和公民大会。大多数的城邦都算是民主政体,但城邦内的富人往往拥有更加强大的权威。所以,我们可以称之为有限度的民主政体。每一个城邦一般都只控制着较少的土地,这个地区的政治版图就是由大量的小城邦构成的。在亚历山大大帝的征服以后,希腊的城邦开始活动于当地的数个王国(它们是这个地区的军事强权)的范围之内,城邦和王廷之间的关系网就这样诞生了。

屋大维给这些城邦带来了一个新的问题。之前,罗马派出的总督就好像只是某个大国的外交使节一样。在罗马的统治下,各个城邦还是能够运用它们早已熟悉的外交手段。它们会派使者去罗马的精英圈子里打点关系,用荣誉来收买人心。然而,屋大维时代的罗马发生了很大的变化,权力现在集中于屋大维一个人身上,传统的罗马国家机关似乎已经失去了实权。既然屋大维才是最高决策者,那么寻求罗马元老的支持看起来就意义不大了。而且,屋大维不会像之前的总督那样在短暂的任期结束以后就失去权力。总而言之,希腊的城邦现在需要和它们的新主人建立起良好的关系。

赢得阿克提翁之战的屋大维仍然马不停蹄。众所周知,他之前在取得胜利以后都不留情面地对敌人施以报复。就算是以当时的血腥标准来看,其手段也不可谓不残酷。至于那些和安东尼为伍的罗马贵族,我们不清楚屋大维具体是如何处置的,只知道他或杀,或罚,或饶。其中被处死者的遭遇让人不禁回想起屋大维击败卡西乌斯、布鲁图斯以及赢得佩鲁西亚之战以后的做法。他延续了一贯的作风,再度表现得像是一个暴戾恣睢、草菅人命的独夫。后来,贵为皇帝的奥古斯都把阿克提翁之战描绘为意大利和东方之间的对抗,还把自己塑造成捍卫传统、维护秩序的人物。但那毕竟是后来的事情,此时的屋大维看起来丝毫不打算掩饰自己手中生杀予夺的大权。

现在,屋大维把他的影响力施加到了东方,各地的附庸国王必须摆正自己的位置。当年,安东尼曾经因人力、物力不足而在战争中向这些君主寻求帮助。之后,他投桃报李,让这些国王得到了很多好处,许多君主都扩张了自己的领地。但是,既然屋大维已经来到了东方,那么当初安东尼赠予的利益就都不算数了。此外,有三位国王被罢黜,一位被处死。没有遭殃的君主大概会感激屋大维不杀不废之恩,庆幸自己还能保住权位,不会介意自己的领土发生怎样的变动。况且,屋大维还把被废黜的国王的领地转交给了那些对他示以忠诚、友好的君主。

在东方的诸位国王当中,统治着犹地亚的希律是除了埃及的克莱奥帕特拉以外最为显赫的君主。在他之前的王朝因追随帕提亚人而被安东尼麾下的将军索西乌斯给消灭了。之后,安东尼就扶持希律上了台。希律原先并不算是犹地亚上流社会的一员。因此,犹地亚的传统权力关系网络看起来不太愿意坐视希律入主犹地亚,他面对着颇为强大的反对势力。然而,希律坚信安东尼会给自己提供可靠的援助,便果断出手铲除了所有的政敌和对手,包括圣殿里拒不合作的大祭司。希律还和马尔库斯(Malchus)的阿拉伯君主国展开了斗争,大胆地尝试扩张自己的王国。正是因为忙于和马尔库斯作战,希律才未能亲自赶到阿克提翁去支援安东尼。在得知了屋大维已经取胜以后,希律杀死了现任大祭司,把自己的家人送到了比较安全的沙漠堡垒马萨达(Masada),然后就动身前去拜见屋大维。

屋大维在罗德岛上接见了希律。这位来自犹地亚的国王既没有换上丧服,也没有卑躬屈膝地摇尾乞怜,而是秉持自尊、身着盛装而来,虽然他还是除去了象征着王权的冠冕。接着,希律开口了。他声称自己是安东尼的挚友,若非阿拉伯的战事缠身,他必定会去阿克提翁和安东尼并肩作战。而且,他虽然未能亲自参战,但还是为安东尼提供了不少的粮草和资金。也就是说,希律自称会对朋友和恩人尽忠,值得像屋大维这样的大人物对他加以信赖。假如屋大维愿意和他缔结友谊,他同样会对屋大维鞠躬尽瘁。[339]

如果这则记载属实,那么希律就是很精明地把政治现实摆到了屋大维的面前。东方国王们的政治手段一直很简单,屋大维肯定很快就能想明白其中的利害关系。他应该已经知道犹地亚有很多人反对希律,这位国王是凭着反复的大清洗才保住王位的。如果屋大维选择处死希律,那么(经受了多次清洗的)犹地亚就没有人能够稳住局面了,内战在所难免。最后,屋大维保留了希律的地位,而希律也没有辜负屋大维的期待。当屋大维日后来到犹地亚之时,希律为他献上了丰厚的礼物。屋大维的军队乃至其他有可能帮上希律的人都享受到了类似的待遇。显然,让希律感恩戴德比砍下他的脑袋更有意义。

从公元前31年夏末到第二年初,屋大维基本都在小亚细亚和希腊。他应该是在忙两件事,一件是准备去埃及结束这场战争,另一件就是和希腊的各个城邦建立友好关系。城邦的精英们早就迫不及待地想要讨好这位新的恺撒了。而屋大维的主要需求是资金,曾经花钱和安东尼缔结友谊的这些城邦现在必须也向屋大维提供大量的资金才能讨得他的欢心。不过,渐渐地,屋大维和希腊城邦发展出了新型的政治关系。一种新的政治文化开始流行于东方的土地之上,这就是帝国政治的运行方式。

亚历山大之城

这种新型的政治文化在埃及初见端倪。安东尼和克莱奥帕特拉被击败以及克莱奥帕特拉自杀以后,托勒密王朝迎来了终结。屋大维根本不可能让克莱奥帕特拉的后代继承王位、延续王统,因为这场战争乃至相应的宣传战都过于激烈,让屋大维失去了宽恕的余地,他不得不把埃及降格为罗马的一个省份。这种事情有一套传统的流程。屋大维需要指定一位总督,然后先给埃及做好临时的安排,再把各项事宜通报给元老院,让元老们加以审核。大概还会有专门的一项法案出台。不过,在设置省份之前,屋大维仍须解决一个重要的问题:亚历山大的命运。

罗马军队有毁灭城市以儆效尤的传统。这些年来,地中海沿岸地区已经有许多极其雄伟的城市都被罗马人夷为平地。迦太基在被摧毁以后的一百年内都是一片荒地,古老而壮观的科林斯也没有逃脱类似的命运,最负盛名的雅典同样被苏拉的部队洗劫一空,西边的西班牙和高卢地区也有不少城市沦为废墟,虽然史书往往对这些城市着墨不多。总之,肯定有很多人担心亚历山大也会遭遇毁灭。

亚历山大是古典时代最伟大的城市之一,其历史、建筑、规模(不下于三十万人口)、文化都出类拔萃。它是地中海世界的第二大城市(仅次于罗马),摧毁这样的一座城市会让屋大维的敌人和东西方的各个势力都为之震撼。在后来的征服战争当中,屋大维并没有拒绝这种惩戒性施暴的手段。更何况,按照屋大维的说法,此次战争的敌人非同寻常。后来流行的文本浓墨重彩地把这场战争描写为不同的神明和不同的道德之间的战斗,称其为捍卫罗马、意大利文化的战争,克莱奥帕特拉和埃及的“威胁”得到了大量的渲染。所以,毁灭亚历山大、抹除托勒密王朝的这座大都会是合乎道理的。然而,屋大维并没有选择这条道路,他放过了亚历山大城。

对此,屋大维给出了三个理由。第一个理由是对这座城市的神明的尊重。塞拉皮斯(Serapis)是一个特别的神,他同时具备希腊和埃及的属性。有些人怀疑他是托勒密王朝专门为了新兴的亚历山大而捏造的新神,但希腊人和埃及人并没有这种想法。而且,对于神灵,他们往往只会做出某些发现或者在故事里加以详细的描写,而不会有意地创作出新神。塞拉皮斯比较复杂,他的形象和宙斯最像。有时候,人们会把他们相提并论。但埃及古都孟菲斯(Memphis)的公牛神阿匹斯(Apis)、羊神阿蒙(Amun)、伊西丝的丈夫奥西里斯也都和塞拉皮斯相似。埃及人相信法老具有神的某些性质,对其加以崇拜。塞拉皮斯就凭着与众多神明相关的属性而为法老所用。此外,安东尼一度被塑造为奥西里斯的化身。这说明罗马人并不怎么介意把人和神联系在一起。他们同样可以利用这套象征体系。安东尼走过的路,屋大维也可以走。

对伊西丝和塞拉皮斯的信仰不只存在于埃及,在公元前1世纪初,罗马人也接受了这种崇拜。三头同盟成立之初就曾经颁布法令在罗马城内建造一座新的伊西丝和塞拉皮斯的神庙。今天的我们恐怕永远也不能完全理解罗马人究竟是怎样看待塞拉皮斯的。而且,对塞拉皮斯的供奉显然在当时引发了一些争议:罗马人内部有着不同的意见。塞拉皮斯大概更受平民的欢迎,而这一点可以解释为什么安东尼和屋大维都有意成为塞拉皮斯信仰的支持者。总之,屋大维决定尊重塞拉皮斯,把自己和这位少见的在地中海沿岸世界拥有众多信徒的神灵联系在一起,而他的所有言行都会很快地被传回罗马。

不毁灭亚历山大城的第二个理由是屋大维对亚历山大大帝的崇敬。屋大维早就开始利用亚历山大的形象来提升自己的名望,因为当时的罗马人几乎可以说是痴醉于这位年纪轻轻就征服了东方的君王。例如,关于亚历山大,尤里乌斯·恺撒的传记里有两个不同版本的故事。公元前67年或公元前66年,恺撒正在西班牙。第一个版本的故事称恺撒在阅读亚历山大的生平时骤然落泪,因为在亚历山大征战四方立功无数的年纪,恺撒还没有取得什么成就。在另一个版本里,恺撒同样表达了这种伤感之情。只不过,这一次的直接原因是他在伽迪斯[Gades,加的斯(Cádiz)]遇见了亚历山大的雕像。[340]

这个故事大概率是捏造出来的,其作用就是为恺撒后来的伟大事业做好铺垫。不过,我们可以由此看出当时有抱负者会以亚历山大为榜样。比如,伟大的庞培[他的这个“伟大”(Magnus,即Great)的名号也和亚历山大大帝(Alexander the Great)有关]在出征东方得胜归来以后,认可了人们给他肖像上有些发福的圆脸配以和亚历山大相似的发型。此外,在阿克提翁之战打响以前,屋大维也已经开始尝试把自己的形象和阿波罗以及亚历山大联系在一起。在阿克提翁之战以后,亚历山大看起来就更加适合于年纪尚轻的屋大维了。他是深受诸神庇佑的东方征服者,他肆意地动用武力,他的伟大甚至令其跨越了凡人和神明之间的分界线。

屋大维在亚历山大之城命人按照亚历山大的形象给自己塑造了一些雕像,其中有一尊被称为密罗伊(Meroë)头像,现藏于大英博物馆。这尊头像就把屋大维塑造成了亚历山大。在绝大多数的肖像里,亚历山大的脖子都会扭出一个比较大的角度,这大概是象征着他在遥望神灵。而屋大维的密罗伊头像也扭了类似的角度。此外,其发型也和亚历山大的标准发型相似,虽然稍短了一些。我们或许可以推断,屋大维在埃及人面前就是以这种形象出现的,他用这些肖像把自己描绘成了新的亚历山大。

身处亚历山大之城的屋大维还造访了亚历山大的陵墓。当然,这也是托勒密诸王的墓穴。屋大维命人取来了遗体,然后打开石棺,以便审视亚历山大的面庞。他给亚历山大的遗体戴上了金冠,然后为他献上了散落的花瓣。不过,亚历山大毕竟已经死了将近三百年了,就算是有最好的防腐手段,他的遗体也难免变得非常脆弱。因此,新的亚历山大把旧的亚历山大的鼻子给碰掉了一块。这幅景象或许让亚历山大之城的居民倍感紧张,这可是他们最宝贵的君王遗体。于是,他们询问屋大维是否想要看一看托勒密诸王的尸体。对此,屋大维表示,他想要看的只是国王,而不是尸体。[341]这个说法很有意思,所以他后来又用了一次。有人问他是否要去看一看公牛神阿匹斯(埃及人会用动物来表现神灵,这在古典时代堪称独树一帜),屋大维的回答是,他通常只会膜拜神明,而不是牲畜。

第三个不毁灭亚历山大城的理由是屋大维和阿雷欧斯(Areios)之间的友谊。当时的大多数罗马贵族都通晓希腊文化。而且,从公元前2世纪中叶或更早一些的时候开始,罗马人还特别欢迎希腊的哲学家来访,以至于营造出一种独特的贵族文化氛围。哲学家们常常受邀与罗马的权贵为伴,到意大利南部越建越多的豪宅别墅里参加学术讨论。拥有一个过从甚密的哲学家已经成了精英地位的标志,就好像拥有别致的庭院、希腊(或希腊式)雕像和文雅的谈吐一样。因此,我们毫不意外地发现屋大维和亚历山大的哲学家阿雷欧斯相识。此外,这种现象还提醒了我们不应把这个时代的文化和冲突理解为近现代的民族国家之间的概念。在阿雷欧斯的影响下,亚历山大的居民窥见了屋大维的学术修养,得知他珍视希腊的教育和文化。一言以蔽之,他不是一个野蛮的征服者。

屋大维还对亚历山大人民发表了演说,亲自解释了这一点,虽然听众大概在之前就已经有所耳闻。屋大维在演说时非常罕见地使用了希腊语。征战四方的罗马人(和历史上其他的征服者一样)向来致力于让被征服者学习自己的语言,他们一般会用拉丁语对战败者讲话。然而,此时的屋大维不仅对亚历山大的哲学家阿雷欧斯示以尊重,还使用了希腊语对亚历山大人民发言。由此,屋大维发出了一个鲜明的信号:希腊人可以与他和谐共处。这其实是亚历山大大帝的传统,希腊人对此很熟悉。

屋大维迅速地接手了埃及统治者的既有传统。虽然他也许说过自己不会膜拜牲畜,但是在上埃及地区的布奇斯(Buchis)神庙里,我们可以看见有关于屋大维膜拜公牛神布奇斯的史料。而且,这份史料是一系列铭文的一部分,它们都描写了埃及的统治者在向传承逾千年的诸神献上祭品。也就是说,屋大维不仅把罗马的资金投入了埃及的神庙和祭坛里,他本人还在神庙的铭文里得到了法老一样的待遇。埃及的小城市俄克喜林库斯(Oxyrhynchus)有一份来自“恺撒元年”(公元前30年或公元前29年)的文档,显示当地的某个神庙里有四个点灯人在起誓为神庙效劳的时候提到了“身为神以及神子的恺撒”。[342]自尤里乌斯·恺撒成神以来,屋大维就一直在使用类似的头衔。他在货币上的称谓是“神子恺撒”(CAESAR DIVI FILIVS)。俄克喜林库斯的这些点灯人显然知道这一点,把这个头衔纳入了自己的誓言。但他们同时还延续了埃及的传统,把屋大维本人也当作了神。

为了庆祝自己与亚历山大人民化干戈为玉帛,屋大维给这座城市注入了一大笔建设资金。有关此事的最佳史料来自罗马,而不是接受资助的亚历山大。今天,梵蒂冈的圣彼得广场中央屹立着一座方尖碑,而这座方尖碑原本和其他的方尖碑一起在埃及的赫利俄波利斯(Heliopolis,太阳之城)伫立了许多个世纪。这是人们献给太阳的礼物。后来,人们把它迁到了亚历山大。然后,大概在卡里古拉皇帝的命令下,这座方尖碑来到了罗马。最终,又过了很长的一段时间以后,它才在圣彼得广场中央安家落户,挺立至今。在对这座碑加以考察之后,人们发现这座方尖碑上有一些原本镶嵌着铜字的槽。这段铭文的内容大概是:

奉皇帝、神子恺撒之令,工程长官(Praefectus Fabrum)、格奈乌斯之子盖乌斯·科涅利乌斯·伽卢斯建造了这座尤里乌斯广场。

科涅利乌斯·伽卢斯就是之前受安东尼之命去镇守昔兰尼加,接着反而率军在公元前30年从西边进攻埃及,帮助屋大维引走了安东尼的兵力之人。我们稍后还会提及他的事情。

亚历山大的尤里乌斯广场大概建于公元前30—前29年。当时,亚历山大得到了大规模的改建,尤里乌斯广场就是其中的核心项目。此外,恺撒庙(Caesareum)也是兴建于此时的标志性建筑,虽然建造这座庙宇本来或许是克莱奥帕特拉的意思,因为尤里乌斯·恺撒毕竟是她的情人,也是恺撒里昂的生父。亚历山大的恺撒庙和屋大维在罗马建造的尤里乌斯神庙(Temple of Divus Julius)形成了遥相辉映的一对。不过,埃及的这座恺撒庙会成为供奉每一位恺撒的庙宇,把成神的尤里乌斯·恺撒和他的继承人都囊括在内。

屋大维走在了人和神之间的分界线上,他的这种做法利用了东方人神化统治者的传统。埃及人对法老的膜拜自不必说,其他希腊化的东方国度大多也有类似的习俗。而且,罗马的宗教传统里其实也有相像之处。罗马的建城始祖罗慕路斯和埃涅阿斯都被尊为神明。不久前成神的尤里乌斯·恺撒则复苏了这种传统。所以,俄克喜林库斯的那几位点灯人不是奇怪的泥古之人。他们不是没能理解新来统治埃及的罗马政权,也不是不懂罗马人的异国习俗,而是遵循了屋大维的意愿,将他奉为新诞生的神明恺撒。

公元前30年末或者公元前29年初,屋大维开始返回罗马。他没有直接航向罗马,而是选择了环地中海而行。小亚细亚半岛上的各个城镇纷纷派出使者来拜见屋大维。以弗所(Ephesus)和尼西亚(Nicaea)人民请求获许把神庙献给罗马女神(Roma,象征着罗马城)和尤里乌斯·恺撒。帕加马(Pergamum)和尼科米底亚(Nicomedia)人民恳请把他们的庙宇献给屋大维本人(可能还有罗马女神)。帕加马还获许举办赞美屋大维的运动赛事。之前也有人享受过这种近似于神的待遇,比如希腊化时代的诸位国王以及某些罗马总督。但他们的规模都无法与屋大维相提并论,也不像屋大维这样堂而皇之。[343]

对于在深厚的一神教传统滋养下成长起来的人而言,把一个人尊奉为神看起来似乎没有什么道理,反而有点邪教的色彩。但对古典时代的人来说,这是很正常的。他们向来相信人有可能成为神,最著名的例子就是赫拉克勒斯。大多数人还相信诸神会时常造访人间,他们认为每个人都有灵魂,而灵魂是有一定的神性的。埃及人更是认为法老就是某个神圣的灵魂的化身。因此,法老仿佛就是神明。类似的思想观念在近东地区盛行了许多个世纪:国王和神明之间有着特殊的关系,而且这就是他们为王的原因。不过,尽管有着这些统治者崇拜思想的铺垫,但像屋大维那样直接宣布自己有着神的属性乃至把自己当作神明供奉起来还是极其罕见的。由此,屋大维表明了自己不同于之前罗马政坛上的所有人物。

罗马人和东方人早就有了比较密切的交往,后者肯定已经很清楚罗马的状况了。毕竟,罗马和希腊城邦的构造其实没有很大的差异,统治罗马的无非就是由地位大致相等的贵族们组成的议会(元老院)。然而,在安东尼彻底落败以后,各地的城市做出了前文所提的种种创举。这说明它们已经认识到了一个无可争辩的事实:屋大维全然不同于寻常的罗马元老。而且,他也不同于那些继亚历山大以后统治东方的君王。屋大维独一无二。他是神。不过,虽然这些使者看起来纯粹是东方的各城市议会自主派出的,但我们很难相信他们会不谋而合地想到这种如出一辙的主意,还同样在这个时候派出了使者,也许他们在暗中收到了指示。

看到整个东方都无比热切地向自己表达了忠心,权威得到确认的屋大维便满载着埃及的财富,渡过亚得里亚海回到了意大利。自阿克提翁之战以来,元老院有将近两年的时间来好好地准备迎接凯旋的屋大维。现在,元老们即将为他献上与其相称的隆重表彰。

庆功

在赞美屋大维这件事情上,元老们表现得特别积极。在阿克提翁战役结束以及亚历山大陷落之后,元老们争先恐后地出台了一系列歌功颂德的决议:他们表决同意为屋大维击败了克莱奥帕特拉而举办一次凯旋仪式;布伦迪西翁是屋大维奔赴战场的起点,也是他凯旋的港口,因此元老们决定在这座城市建造一座凯旋门,还要用战争中缴获的武器来加以装点;罗马的广场上也要兴造一座凯旋门;尤里乌斯神庙(或许尚未竣工)将被饰以在阿克提翁之战中缴获的敌舰的船首;每五年还要举办一次歌颂屋大维的庆典,虽然其具体内容不明,不过,这种节庆想来应该会有祷告、献祭、比赛(大概是角斗)和戏剧这些常见的感谢神明的公共活动;屋大维的生日和他的捷报被公之于众的日子都被定为感恩日(同样会有祭祀活动);元老们还宣布维斯塔贞女、元老还有其他的罗马居民(包括儿童在内)都会前去大道上迎接屋大维胜利返回罗马,元老院和人民将一起向屋大维表达深切的感激之情;[344]亚历山大陷落的日子被定为幸运日,而且,亚历山大的居民必须从那一天开始重新计历。显然,这象征着新时代的到来。

罗马的宪法有了一些微调,屋大维得到了保民官的一部分权力。这具有特别的象征意义,因为保民官是罗马平民的权益的捍卫者。此举相当于宣布他要成为平民的新的代言人。之后,在司法领域,屋大维将拥有最高的决定权;在宗教领域,祭司们在祈求神灵祝福罗马元老院和人民之时必须提及屋大维的名字。屋大维已经成了罗马的第三大组成部分。罗马当局甚至还宣布在所有的晚宴上都要准备一份专门献给屋大维的祭酒。这些都是史无前例的特殊荣誉。

公元前29年夏,屋大维回到了罗马,同时迎来了又一轮的荣誉加身。赞颂诸神的圣歌里加入了屋大维的名字。他有权像参加凯旋仪式一样头顶桂冠出席所有的节庆活动。他可以任意地指定神职人员加入祭司团,无论当时是否有职位空缺。元老院还关闭了雅努斯神庙(Janus)的大门,表示战争已经结束。[345]整个城市都来迎接屋大维凯旋(虽然他事先声明了平民不必前来)。执政官献祭了公牛以庆祝他的归来,这也是史无前例的宗教仪式。接着,屋大维对罗马人民发表了讲话。他宣布每一个成年男子都可以得到四百赛斯特斯的奖金,这个数额足以让一个人安度整整一年。屋大维称赞了统率舰队立下大功的阿格里帕,士兵们也得到了奖赏。屋大维还代表他当时年仅十二三岁的外甥马尔凯卢斯向罗马城内的儿童发放了奖金,这是他第一次展露出想要构建一个王朝的意图。屋大维没有收下意大利各地人民为他收集的金币。但就算如此,罗马城也已有了无数的财富。除屋大维赠予罗马人民的奖金以外,随他一同返乡的士兵们也带来了埃及的大量财宝。据说,在他们返回以后,罗马城的利率从平时的百分之十二降到了百分之四,物价也有所上涨。[346]

公元前28年,屋大维得到了“首席元老”(princeps senatus)的地位,也就是元老院的第一人。这意味着在元老们商讨事宜的时候,他的意见最为重要。“首席”(princeps)即“第一人”。这个称呼有着比较悠久的历史。之前也曾有一些名望较高的元老被称为“首席”,享有全国第一人的美誉。但当时这个称呼基本只是荣誉头衔,不包含实权。到了后来,至迟在奥古斯都时代的末期,这个头衔才具备了更加重要的意义。奥古斯都的地位其实是由各种权力和头衔拼凑起来的,他没有某个统括一切的头衔。我们现在常常使用的“皇帝”(emperor)这个词的原型只是罗马人对战功赫赫的将军(imperator)的赞美之词而已,其最初的适用范围是很狭窄的。“元首”(princeps)这个称呼算是以一种不太正规的方式表明了屋大维独特的显赫地位。后来的罗马人使用的“principatus”(principate)这个词既可以指代某个人领导国家的时期(“某某人时代”),也可以用来指代早期罗马皇帝统治国家的制度(所谓的“元首制”)。[347]

然后,屋大维举办了凯旋仪式。他和他的部下在长达三天的时间里游行于罗马城内各处。在此期间,他们既向诸神献上了祭品,也向全城的居民展示了极其丰厚的战利品。第一天的凯旋仪式是为公元前35—前34年的达尔马提亚战争而举办的。在此之前,屋大维一直无暇以凯旋游行来庆祝这一次的胜利。第二天纪念的是阿克提翁之战。游行队伍看起来还带上了描绘着此次胜利的巨幅宣传画。第三天则庆祝了埃及战事的胜利。按照传统,这一天的重头戏本该是让戴上镣铐的克莱奥帕特拉跟着游行队伍走遍全城。但既然埃及女王已经自杀,他们只好用塑像来替代了。不过,她和安东尼所生的龙凤胎亚历山大·赫利俄斯和克莱奥帕特拉·塞勒涅都在游行队伍里面。而她和恺撒所生的儿子恺撒里昂当然已经死去。一般说来,俘虏都会在游行结束以后遭到处决。但这一次,屋大维展现了仁慈之意。来自埃及的财宝也被装在车里跟着游行队伍供所有人观赏。接着,屋大维本人乘着战车游遍了全城。另一位执政官和诸位元老都跟在他的身后。[348]严格说来,屋大维可能只举办了两天的凯旋仪式来纪念达尔马提亚和亚历山大的战事,因为庆祝内战的胜利不是很光彩的事情,第二天的活动只是游行而已。不过,这种细枝末节的事情似乎很快就被人遗忘了。[349]

屋大维的庆功活动还没有结束。他把一座庙宇献给了密涅瓦(Minerva)。大概进行了好一阵子的元老院翻修工程现在已经结束,其名称按照尤里乌斯·恺撒的名字被更改为尤里乌斯元老院(Curia Iulia),时人想必也能看出其中的讽刺意味。元老院里还添置了一座来自他林敦(Tarentum)[1]城的古老的木质胜利女神像,上面装点着来自埃及的战利品。每当元老们召开会议之时,他们就会为胜利女神献上祭酒,同时再度回忆起屋大维击败了安东尼。取自埃及的战利品有很多被放在了古老的罗马城广场中心的尤里乌斯神庙里。此时,这座庙宇已经完工且得到了祝圣。还有一些财宝被送至罗马城内最为古老也最受尊崇的卡皮托里翁三神庙,那里供奉着朱庇特(Jupiter)、朱诺(Juno)和密涅瓦。

屋大维还在庆祝胜利的运动赛事上大肆挥霍。各种家养或野生的动物都被送入竞技场遭到屠杀,其中包括罗马居民从未见识过的一只河马和一只犀牛。罗马贵族们亲自走上了跑马场进行比赛,甚至有一位元老参加了角斗。在屋大维的命令下,来自苏维汇(Suebi,日耳曼人)和达契亚(Dacia,多瑙河以北)的俘虏之间展开了一场战斗。[350]屋大维还发明了一种叫作“特洛伊游戏”(Lusus Troiae)的新型赛事,让贵族青年比拼马上的功夫。

节庆、建筑、运动赛事、凯旋游行、各种形式的荣誉,还有发放给全体罗马居民的奖金,这一系列庆功活动的规模都是史无前例的。在如此盛事的映衬下,所有人都能够明白屋大维东征归来是一个重大的历史事件。

这些庆功活动的背后其实别有深意。随着阿克提翁之战结束、安东尼和克莱奥帕特拉死去,屋大维已经毫无疑问地成了罗马政坛的主人。然而,谁也不知道他接下来会做什么事情。很多人都难免有些畏惧,因为屋大维完全有可能像某些前人一样发动新一轮的清洗。元老们表决同意向屋大维颁发种种特殊荣誉的原因与其说是对战胜者的感激,不如说是深深的畏惧。他们极其迫切地想让屋大维看见自己的忠心。与此同时,屋大维则以种种举动来安抚元老和罗马人民。其中,象征意义最大的或许就是关闭雅努斯神庙的大门。依据传统,每当罗马人民走向战争之时,这座神庙的大门就会被打开。鉴于罗马历史上内外战争频发,在过去的几百年间,这些门其实很少有关上的机会。但是关闭大门就象征着和平的新时代已经到来。我们不知道这个决定究竟是由哪方做出的(也许经过了协商),但双方都愿意接受这个结果,愿意以此来表示他们衷心地希望战争就此结束,所有人都能够回归正常的和平年代。

不过,虽然战争或许真的结束了,但是过去的事情并没有被遗忘。屋大维没有抛弃他的一贯政策。值得注意的是,为了巩固他和平民的关系,屋大维大方地向罗马平民送出了巨额的奖金,让他们知道屋大维确实是平民的保护者与大恩人。对于让罗马社会四分五裂的内战,屋大维不仅不加掩饰,反而以各种手段来庆祝自己的胜利,不给败者留丝毫的情面。凯旋仪式或许只能算是昙花一现,但今后长期存在的感恩节庆日会一次又一次地把屋大维的胜利摆到人们的面前。罗马城内的各种胜利纪念建筑和纪念品也会让人们永远铭记阿克提翁和亚历山大的战事。更何况,元老们还颁布了法令,要求人们在私人宴席上为屋大维准备祭酒。这条规定不一定能够强制落实,但它光是存在就已经有了意义。我们大可以想象一下战死者的亲朋好友们面对这条规定会作何感想。

在屋大维时代的罗马城,安东尼的踪影被抹去了一些,但人们依然能够在很多场合感受到克莱奥帕特拉的存在,这让人不禁觉得有点奇怪。各座建筑内摆放着的埃及工艺品以及展示于尤里乌斯神庙前的船首都让人联想起这位埃及女王。各神庙里的埃及宝物也一边给庄严的罗马圣地增添了光彩,一边彰显着克莱奥帕特拉的存在。诗人们依旧公然传颂着她的故事。最为关键的是,以克莱奥帕特拉为模型的维纳斯金像依然保留在恺撒建造的维纳斯母神庙内。所有人都知道,当他们过来瞻仰维纳斯女神的仪容之时,他们看到的其实是克莱奥帕特拉,她不会为人们所遗忘。屋大维需要克莱奥帕特拉在帝国政权的宣传当中扮演一个重要的反面角色,帮助屋大维在多年以后把他的政权塑造得正气凛然。

当然,尽管克莱奥帕特拉没有淡出罗马人的视野,但屋大维显然更是无比突出的存在。他获得了很多宗教方面的荣誉,让罗马人不仅要在圣歌中赞颂他,还要为他献上祭品。屋大维在帕拉提翁山上为自己建造了一座新居,还令其和旁边新建成的阿波罗神庙构成了一个整体。阿波罗是受屋大维偏爱的神祇,阿克提翁之战的胜利就被他归功于阿波罗。公元前28年,这座阿波罗神庙在盛誉之下开放了。[351]这些挺立于山坡之上的白色大理石柱本身就是了不起的胜利纪念品,它们为阿波罗提供了新的安身之所。维斯塔神庙也加入了这片建筑群。我们不难想到,屋大维的本意或许就是让人觉得有三位神明共居于帕拉提翁:阿波罗、维斯塔还有屋大维。

在战神广场上,阿格里帕正在建造一座宏伟的新神庙—万神殿。在公元前27年,阿格里帕第三次担任执政官之时,这座神殿举办了落成典礼。他们的初案是让屋大维的雕像屹立在万神殿的中心。那样一来,万神殿就会成为供奉着活人的庙宇。显而易见,他们就是想让罗马人把这位年轻的罗马之主看作神。除万神殿里的计划以外,屋大维的密友和罗马人民还已经用铸造神像的黄金和白银在罗马城内竖立了一些屋大维的雕像。[352]

罗马共和国属于罗马公民,其政治文化强调的是平等。当然,他们的平等在现代人眼里难免显得有些古怪:国家领导人享受着“更加平等”的地位;全国的居民被划分为三六九等。但罗马共和国至少还承认理论上的平等,身为全国之精英的元老就是这种观念的最佳代表。无论他们内部有着多么巨大的分歧、何等激烈的竞争,他们基本上都认为元老之间理应彼此尊重,认可他人的成就,互相建言,携手并进,一起为罗马而努力。共和国时代确实有伟人诞生,个别人似乎还太伟大了一些。但是,就连马略、苏拉、庞培乃至恺撒都无法与屋大维相提并论。屋大维是非常特别的,他的超然地位让其他的罗马人不得不煞费苦心地去寻找一种合适的政治语言来加以描述。所以,我们大概可以理解他们为什么要把屋大维奉为神明,而屋大维又为什么要尝试着把自己塑造为人间之神。在神的面前,共和国的基本框架自然就失去了意义,没有人能够和神并驾齐驱,平等是不可能的。

但是,和神共处并非易事,当人间之神也会遇到不小的麻烦,后来的卡里古拉皇帝就是例子。所以,公元前28年和公元前27年,屋大维开始调整自己的名分。万神殿成了供奉所有神明的庙宇。屋大维的雕像被放在了入口处,而不是中央。身为万神殿建造者的阿格里帕也得到了一座雕像。至于屋大维的金银雕像,它们都被熔掉,然后铸成了货币。至此,在阿克提翁之战以后一度被屋大维采用,并且在他巡视东方之时仍然受到重视的神化计划就这样突然消失了。

屋大维需要一个更加合适的新形象。他一边寻找政治伙伴(虽然起初可能只有阿格里帕),一边考虑传统的问题。他开始对共和国的平等理念示以尊重。我们很难想象他会自愿地做出这种改变。他当然已经取得了无数次的胜利,整个罗马都唯他是从,胆敢挑战者大多已死。他还用各种各样的纪念建筑、物品、仪式来不停地彰显自己的权威,改变罗马的面貌。但是,屋大维遇到了阻力,并非所有人都像官方公告里声明的那样对这位新的恺撒充满了感激之情,他必须妥善解决自己的前途问题。三头同盟已亡,安东尼已死,李必达也早已失势。或许,他可以让罗马继续处于紧急状态之下,假装他还在为恢复共和国而战,从而继续把持着莫大的权力。但这种做法未免太过拙劣。于是,为了在正常时期保持着既有的特殊地位,屋大维必须建立一种新秩序。公元前28年,屋大维陷入了前人在内乱之后所遇到的那种困境。几乎别无选择的屋大维似乎不得不恢复旧时代的制度。在经历了这些年的动荡以后,共和国显然已经死去,但现在,它仿佛还能死灰复燃。

共和国复苏

即便是在公元前30年,屋大维也还面临着反对派的威胁。假如是在几年以前,元老院里或许就会有反对的声音,某些知名政治人物可能还会离开罗马,以此表明自己的反对态度。但到了这个时候,屋大维的反对者只能隐秘行事了。从表面上看,此时的罗马政界无比团结,所有人都对屋大维一片丹心;但事实上,没有人会被这种假象欺骗。当然,在这种政治环境下分辨异己是比较困难的。其中或许有一些人敢于直抒胸臆(虽然没有被记录下来),但是大多数人都披着伪装,只在某些时候趁机给屋大维政权使绊子。我们只能借助于蛛丝马迹,隐约地看见屋大维的新政权和反对者们展开了争论和交易,以求排除道路上的阻碍,但我们几乎看不到足以牵动全局的重大事件。

大约在公元前30年,马尔库斯·李必达被除掉了。据说,他和布鲁图斯的姐妹尤妮亚密谋在恺撒返回罗马之时行刺。尤妮亚的身份使得这个据说存在的密谋和当初的那些行刺者联系在了一起,让人觉得有人想要再为罗马除去一个暴君。这位李必达的父亲就是三头之一的那位马尔库斯·李必达。自从屋大维在公元前36年夺走他的军权以后,仅保留最高祭司一职的李必达就过上了流放的生活。屋大维似乎经常对李必达加以羞辱,一直到他约在公元前12年去世为止。[353]小李必达是个比较有声望的政治人物,他或许不满于自己的父亲所受的待遇。而尤妮亚虽然是一介女流,但她的家族背景和旧日的人脉让她有了一定的影响力。在屋大维派驻于罗马的代表麦奇纳斯(Maecenas)发现了阴谋以后,李必达大概甚至都没有经过审讯就遭到了处死,尤妮亚则结局不明。[354]

第二个事件比较复杂。公元前30年或公元前29年,罗马将军马尔库斯·李奇尼乌斯·克拉苏在马其顿作战。这位克拉苏是那位著名的庞培和恺撒的盟友克拉苏的同名孙子,与他敌对的是某些异族部落:巴斯塔奈人(Bastarnae)、默西亚人(Moesians)和吉泰人。在和巴斯塔奈人交战之时,身为将领的克拉苏罕见地亲自上阵,还杀死了巴斯塔奈之王戴尔多(Deldo)。这几乎堪称罗马人前所未有的壮举。克拉苏有资格凭此得到一项特殊的荣誉:“丰获”(spolia opima),而屋大维对此加以阻挠。

屋大维利用了一个程序上的问题。克拉苏是被屋大维派去马其顿的,严格说来,他只是屋大维的代表。于是,屋大维声称只有独立掌握军权者才有资格得到“丰获”。听闻此言,人们连忙去遍稽群籍,然后找出了一个史例。在浩如烟海的罗马传说故事当中,有一个叫作科苏斯(Cossus)的人曾经被授以“丰获”,所有相关资料都显示科苏斯当时是一位保民官,受制于另一位高级官员。但是,有一次,屋大维正在监督朱庇特·菲利特里乌斯神庙(Temple of Jupiter Feretrius)的修复工作(据说,科苏斯当年就是把“丰获”献到了这座神庙里),就在工程进行之时,人们发现了一块亚麻布。上面的文字显示,科苏斯是在他担任执政官期间献出“丰获”的。想必很少有人会相信这么一份内容正合屋大维所愿的史料只是恰巧在这个时候出现,至少身为史家的李维(Livy)是不相信的。然而,没有人能够指责屋大维捏造文本。[355]

这个故事里的屋大维看起来未免有些患得患失,他不希望让身为贵族子弟的克拉苏得到一个连他也未曾拥有的特殊荣誉。显而易见,克拉苏既不是屋大维的反对者,也不可能拥有足以挑战他的实力。克拉苏的军权来源于屋大维的信任,他的职位也是由屋大维亲自指定的。但就算如此,屋大维也不能容忍他来妨碍自己垄断所有的荣誉。

公元前28年,屋大维开始把自己的特殊地位化作罗马政治的常态。这一年,屋大维和阿格里帕一同担任执政官。之前,在处于紧急状态下的公元前31年、公元前30年和公元前29年,屋大维也都担任执政官。当时的他几乎一直身处海外,留在罗马的另一位执政官不得不独自管理这座城市。然而,在返回罗马以后,屋大维似乎基本上无视了同僚的存在。每位执政官原本都有三十名扈从,他们手执法西斯(棍棒和斧子的组合),以此代表执政官拥有惩戒罗马公民的权威。但是,自从公元前31年屋大维开始连任执政官以来,他执意要求所有的扈从都随他出行。两位执政官本应是共掌权力的同僚,而屋大维的这种做法直观地反映了这种理想状态的消亡。而且,这是他有意为之的象征性举动,其意义就是展示他大权独揽的地位。不过,在公元前28年,他改变了主意。

六十名扈从不再全部跟随着屋大维,古老的习俗得到了恢复。阿格里帕在公元前28年和公元前27年都与屋大维并列为执政官,并且正常地享有三十名扈从。其实,从宪法和法律的角度来看,扈从分配的恢复没有什么值得一提的意义。然而,这是一个标志,意味着罗马政治往正常的状态迈出了重要的一步。在公元前28年的某个时间点上,屋大维正式宣布持续了十余年的紧急状态就此结束。[356]

严格说来,这次的国家紧急状态和三头同盟挂钩。他们三人理应只是为了重建共和国才接受极大的权力的。一旦三头同盟消亡,紧急状态的法律依据也就变得含糊不清。人们很难说清楚现在仍处于紧急状态的原因是屋大维以执政官的权力下达了命令,还是元老院颁布了对抗安东尼的法令。不过,看起来也没有什么人真的在乎这种事情。但是,在公元前28年,屋大维决定着手结束紧急状态了,公民的权利得到了恢复,屋大维还专门铸造了纪念此事的金币(请参考图6)。金币上刻画的屋大维脚边有一盒卷轴,他正在把其中的一份交给一位感激不已的公民。金币的一面印着“皇帝·恺撒神子·执政官·第六次”(IMP·CAESAR DIVI·F·COS·Ⅵ),另一面则是“他恢复了罗马人民的法律和权利”。同为执政官、积极参与政事的阿格里帕根本没有被提及。屋大维依然没有准备好让其他人也和他一样走上台前,就算是最受他信赖的阿格里帕也不行。不过,至少正常的法律得到了恢复。这就意味着再也不会有不经审判而处死公民以及没收公民财产的事情了。战争结束了,三头同盟的使命想必已经完成。

公元14年,屋大维给罗马人民留下的遗言—《圣奥古斯都行述》(Res Gestae Divi Augusti)—在他亡故以后面世了。这位开创了帝国时代的首位罗马皇帝对自己的一生做出了这样的结论:

在我第六次和第七次担任执政官期间,内战之火已然熄灭,全国一致听我号令。于是,我把共和国还给了罗马元老院和人民。[357]

屋大维和阿格里帕首先需要确认共和国现在有条件自行处理各项事务。他们开展了人口普查(结果为四百零六万三千名公民),[358]为罗马人民举办了一次净化仪式,还整顿了元老的队伍。

公元前28年的元老人数或许超过了一千,其中有一部分人还是按照传统途径从低级官员开始逐步升入元老院的。但还有不少人在恺撒或者三头同盟执政时期得到了提携,直接成了元老。屋大维公开表示要审查元老们的资格,并且希望有人能够主动让位。无论原因如何,有一些人确实很配合。接着,屋大维和阿格里帕要求元老们互相担保彼此都有资格继续担任元老。他们希望某些人会因此感到尴尬,进而主动辞职。最后,屋大维和阿格里帕亲自审视了剩下来的元老,除去了一些他们觉得不合格的人。

这一套流程的设计是为了让这件事情看起来不那么偏颇,但还是难以避免地引发了争论。被迫离开元老院的人深感不满。据说,屋大维一度要穿着胸铠、在十位密友的保护下去主持元老院会议。离去的那些人其实很多是当初为恺撒或者屋大维效劳才成为元老的,屋大维现在的做法相当于背叛了他们。[359]但屋大维和阿格里帕依然认为有必要让元老院恢复一定的威望,为此,他们已经准备好牺牲一部分追随者。

公元前27年1月,屋大维来到了元老院。他交出了执政官的职位,然后依照罗马传统,宣誓声称自己在任期内处事公正、谨遵法律。在此之前,他从未遵守这个传统,因为当时的他不受法律的约束。随后,在刚刚恢复了地位的元老们面前,屋大维第七次受任为执政官。他的同僚阿格里帕则是第三次。

1月13日,屋大维对元老们发表了讲话。这是一个非常关键的历史时刻。根据现存的史料,此次讲话标志着罗马政治正常化的进程已经步入了最后一个阶段。屋大维交出了地方省份的控制权,元老院和人民能够决定地方总督的人选了。这一步同时意味着屋大维放弃了手中的庞大军队和巨额的财富。为此,元老们给屋大维颁发了新的荣誉。他的新居会被饰以橡树叶冠,得到这项荣誉的通常是拯救了罗马公民的性命之人。而他的门旁会种上月桂树,这是阿波罗和胜利的象征物。元老院里还会摆放一块金色的盾牌,宣示屋大维身上最为根本的四项美德:勇(virtus)、仁(clementia)、义(iustitia)、忠(pietas)。[360]

这次事件几乎必定早在屋大维的计划之内。为了这一天的到来,他和阿格里帕已经做了至少一年的准备。不过,这还不是最终的安排,还有一些事情尚未解决。三天以后,元老们再度相会。这一次,他们展开了更加详细的讨论。最后的结果是,屋大维有权控制西班牙的绝大部分地区、高卢、日耳曼尼亚、叙利亚、塞浦路斯、埃及、腓尼基(Phoenice,叙利亚沿海地区和黎巴嫩)和奇里乞亚。除了马其顿、达尔马提亚和阿非利加(今天的突尼斯)以外,这些由屋大维掌控的省份已经包括了所有时常面临军事威胁的地方。之后,元老们又给屋大维颁发了一项殊荣:他们让屋大维有了一个全新的名字。这一年1月16日,以奥克塔维乌斯之名出生、以恺撒之名立业的那个男孩成了奥古斯都。[361]

从公元前28年开始,到公元前27年1月最终协议的出台,屋大维成功地重塑了自己的权力。三头同盟时代的特殊权力被他抛到了一边,元老院得到了改革,法律的权威得以恢复,共和制度全面复苏,屋大维认可并恢复了元老院和人民的最高权威,元老院则报之以无上的荣誉。然而,我们还是能够察觉到奇怪的地方,没有任何迹象表明刚刚得名的奥古斯都会离开政坛。他仍旧是地位超然的政治人物,统治着无比广大的领土。他依然担任着执政官的职位,并且会不间断地担任到公元前23年6月为止。

从古典时代开始,包括史家卡西乌斯·狄奥在内的很多后人都把公元前27年1月庆祝共和国恢复的元老院会议视作帝国诞生的标志。[362]不过,狄奥也看到了其中的矛盾之处。现实往往是复杂、混乱的,政治就是如此。共和国的恢复确实意味着传统的政治运作方式又回到了罗马,但罗马的政治精英们还得再花十年乃至更久的时间来掌握如何在一个自相矛盾的政局里行事:重新确立的共和制度与一个拥有巨大权威的人物并存。公元前27年1月,罗马人创制了奥古斯都。接下来,他们还须切身体会这件事情的意义。

[1] 即今天的塔兰托(Taranto)。—译者注

第十三章 奥古斯都的诞生

[339] Josephus, Antiquitates, 15.6. 约瑟夫斯的记录很详细,但某些内容有可能是他编造的。

[340] Suetonius, Caesar, 7; Plutarch, Life of Caesar, 11.

[341] Dio, 51.16; Suetonius, Augustus, 18.

[342] P. Oxy. 1453.

[343] Dio, 51.20-21.

[344] Dio, 51.19.狄奥的文字看起来像是在引用罗马的官方布告。

[345] Dio, 51.20.

[346] Dio, 51.21.

[347] Dio, 55.9. Res Gestae, 14; Res Gestae, 7. Res Gestae, 13有奥古斯都本人运用“元首”(princeps)这个词的实例。

[348] Dio, 51.21.

[349] Fasti Triumphales Barberini记载了古典时代举办的历次凯旋仪式,其中有公元前29年8月13日和8月15日纪念达尔马提亚和埃及战事的凯旋仪式,却未曾提及有关阿克提翁的凯旋仪式。

[350] Dio, 51.22.

[351] Dio, 53.1; Propertius, 2.31.

[352] Dio, 53.22; 53.27.

[353] Res Gestae, 10; Dio, 54.27;也可参考54.15。

[354] Velleius Paterculus, 2.88.

[355] Livy, 4.20; Dio, 51.23-37.

[356] Dio, 53.2.

[357] Res Gestae, 34.

[358] 罗马人之前所做的普查只统计男性人口。而屋大维和阿格里帕的这次很可能有一些调整,其具体情况尚无定论。不过,综合各方意见以后,我们大概可以说他们这次普查统计了女性人口,同时或许把儿童人数也计算在内。

[359] Dio, 52.42; Suetonius, Augustus, 35.

[360] Dio, 53.2-11; Res Gestae, 34; Ovid, Fasti, 1.589-90; Fasti Praenestini (January 13).

[361] “奥古斯都”的含义大概是“尊敬的”,但这个词也有“增长”“壮大”的意思,暗示着奥古斯都会让罗马发展壮大。

[362] Dio, 53.17.

第十四章 奥古斯都的共和国

公元前27年1月16日,罗马元老们亲身经历了一个非同寻常的历史节点。也许,他们会深思自己究竟做了什么。这也难怪,因为在这三天时间里,他们一起见证了罗马政治传统的恢复、共和国的重生。然而,奥古斯都依旧是罗马政局里独一无二的角色。对于罗马传统政治文化而言,奥古斯都的存在就和他的养父当年一样格格不入。

从某个角度来看,这次达成的协议仿佛是历史的重现。在过去的一百年里,共和国遭遇了许多次震动整个罗马的大危机,但在每次危机以后,罗马的政治制度总是能够大致恢复为原来的模样。罗马政治文化的核心要素是人民主权和贵族统治,罗马革命对这种秩序发起了挑战。士兵们颠覆了共和国,以恐怖的手段对待贵族阶层,许多贵族或是被杀或是被夺走了财产。在三头同盟时期,意大利地区的财富经受了大规模的再分配,意大利的人口分布也随着老兵们入驻殖民地而发生了重大的变化。时至公元前30年,屋大维已经成为一张巨大的政治关系网络的中心人物。他由此控制了无数的资源,成为地中海世界毋庸置疑的主人。

然而,就算奥古斯都已经是大权在握的统治者,他也还面临着具体如何统治罗马的问题,他还是需要地主、贵族们来担任军官、祭司、法官、市长和较低级的官员。他手中的确凝聚了莫大的权力,他大概可以凭此扫除五百年共和国历史积攒下来的种种传统,然后从零开始。但是,奥古斯都自己就是一个传统的罗马人,他也是在保守的等级制度文化下成长起来的。罗马不仅指代着意大利半岛中部的那座城市,还意味着那一套守旧的文化和传统,要扫除元老院和被元老们奉为圭臬的传统,就势必要给罗马的古老秩序也画上一个句号。这也就相当于抛弃罗马的光辉历史,建立另一座全新的城市。严格说来,创造一个全新的罗马并不是完全不可设想的事情。但是,安东尼或许就是前车之鉴。公元前1世纪的罗马是一个超级大国的政治、文化、宗教、经济中心,像安东尼那样转而以亚历山大为首同样不是不可设想的事情,却非常难以实现,有些人大概就是因此才选择了对抗安东尼。

不过,罗马的政治精英们未必会一味地因循守旧。除了罗马本身的历史以外,他们还能借鉴于希腊世界里各种各样的城邦、联盟、王国。虽然未免有些雾里看花之嫌,但是罗马人确实对别国的政治传统有所了解,还有可能在某些情况下加以效仿。如前文所述,奥古斯都曾经尝试着把自己的形象塑造成神。他参考了君主制的埃及、波斯和希腊化诸王国,仿照了它们神化统治者的传统。但他最终效仿出来的结果非常新颖,具备罗马的特色,不同于这些君主国,甚至还发展出一套独特的意识形态和社会结构。罗马的君主有着更加强大也更加血腥的权威,他们受到的约束较少。而且,罗马的君主制在很大程度上违背了传统。公元前27年,在罗马城的中心,奥古斯都的周围是这座城市的各个纪念建筑,它们就像是传统的化身。奥古斯都的面前则是身着紫边白底托加袍的列位元老以及古老的神殿和诸神。此时的奥古斯都是否已经大胆地设想出一整套全新的罗马之道了呢?

极少有史料能够表明奥古斯都是一位卓有创见的思想家。古代的史家喜欢想象奥古斯都为属于他的全新国家设计未来蓝图的景象,甚至还会把他最亲近的顾问阿格里帕和麦奇纳斯也加进来,设想他们在一起抽象地讨论着该如何治理罗马。然而,奥古斯都很可能并不是一位高瞻远瞩的政治制度设计师。他和其他的绝大多数革命者一样,深受过往历史的束缚,常常试图从过去汲取智慧,用以解决当下的问题。他和其他的绝大多数政治人物一样,忙于应付眼前的麻烦,通常只能就特定的问题找到特定的解决办法。而罗马人则和其他民族一样需要在政治生活中体会到安全感。在动荡的时期,他们需要得到安抚。革命会造成很多问题,因为整个世界都会因革命而变得上下颠倒,让人们难以用传统来指导自己的社会、政治生活。人民需要安定,但只有他们所能理解的社会、政治秩序才能让他们感觉到安定。也就是说,他们需要一套熟悉的秩序。奥古斯都承诺过要为罗马人民带来和平,但光凭内战的结束(以雅努斯神庙大门的关闭和凯旋仪式的举办为标志)还不足以实现真正的和平,奥古斯都必须让社会秩序稳定下来。

但是,公元前28年和公元前27年的罗马政局显然有着内在的矛盾之处。奥古斯都遇到的是根深蒂固、干系重大的全新问题,他不能选择忽视,因为这些问题不会自行消失。在这些问题的影响下,罗马政治的模式不得不改变,罗马人不得不经历一场革命。罗马革命纯粹是实事求是的结果。它不是在某个伟大的乌托邦理想的引导下产生的,其根源就是罗马社会内部的政治斗争。军队摧毁了元老们的权力,为三头同盟的掌权做好了铺垫。而包括后三头同盟在内,所有的政治领导人都必须处理好两件事:如何满足追随者的需要、如何维持住自己的权威。正是为了解决这种极其现实的问题,安东尼和屋大维才采用了有别于罗马传统的方式来宣传自己的权力和统治。因此,尽管安东尼和屋大维之间的确有着不少的差异,但他们身上的相似之处更是多得引人注目。

然而,渐渐地,屋大维还是转向了比较保守的做法,恢复旧貌成了他所建立的新政权的核心。不论是重建神庙、政治机关,还是重塑道德风气,奥古斯都把自己塑造为保守的政治文化的代表。差不多自屋大维得名奥古斯都开始,就有一些保守的思想家倾向于仅从表面上看待奥古斯都的所作所为,把屋大维的新名字看作新时代的标志,把保守的奥古斯都政权和屋大维时代的暴力统治割裂开来。如果以鼓吹道德教化的保守观点来看,我们很容易忽视奥古斯都政权的矛盾之处。奥古斯都或许确实说着传统的话语,以传统的方式统治着罗马,但他本人的存在、他在罗马政治当中的核心地位、他的一言一行显示出的莫大权力都全然违背了罗马的传统。现代的历史学家们有时候似乎忘记了奥古斯都的过去,忘记了他的权力基础,反而专注于他巩固了地位以后的举动。但是,当时的罗马人恐怕不会这样健忘。

公元前28年和公元前27年的共和国的恢复只是屋大维新出台的保守政策的第一阶段。为了让旧时代的政治文化复苏,屋大维必须同时恢复元老院的权威,因为这一整套制度的正常运转是离不开元老院的。他们是执政官的顾问和后盾,为执政官的行为赋予了道德的力量,而要让元老们发挥出这个作用就必须先让元老院具有权威。但是,让元老们重获权力又难免会导致奥古斯都的地位遭遇质疑。于是,虽然奥古斯都政权有必要恢复元老们的权力,但这件事的最大阻碍恰恰是奥古斯都本人的权力。

影响奥古斯都政权立足的最大难题就是这个矛盾,奥古斯都必须妥善地解决自己的地位问题。他现在拥有的权势就算纵观罗马历史也无出其右者,但他无意像苏拉那样在复古改革完成以后急流勇退。在公元前28年1月,屋大维的统治依据是紧急状态下虽然不明晰但毋庸置疑的莫大权力。当然,屋大维的权力归根结底来自听命于他的强大军队和平民大众对他的支持。但紧急状态的存在让他合法地掌握了凌驾于法律之上的权力,虽然他在运用这种权力之时还是利用了执政官的传统身份。然而,在他宣布紧急状态结束之后,这种权力就显得不合时宜了。异乎寻常的权力总是难以在正常时期找到存在的依据,这是个让很多独裁政权都困扰不已的问题。因此,在开启奥古斯都时代的过程当中,屋大维抛弃了这种权力。不过,奥古斯都的权力本就不依赖于法律的认可,他的根基是金钱和暴力。这种宪法层面上的调整几乎不会影响到他的实权。

法律是为政权提供统治正当性的一种传统手段。但既然法律无能为力,奥古斯都政权就把自己的统治正当性的来源解释为人们对奥古斯都的一些个人品质的尊重。换言之,他正是凭着这些个人品质才打破了元老之间人人平等的惯例,成为超群绝伦的存在。我们必须带着这种观点去看待元老院颁发给奥古斯都的荣誉:其宅邸的特殊标志、他和神明的联系、元老院里的那个赞颂其美德的金色盾牌。奥古斯都本人也对这种变化做出了解释。他声称在公元前27年以后,他的权力和其他官员是相等的,他胜于旁人的地方不在官职,而在于他的“权威”(auctoritas)。[363]“权威”既是政治属性,也是个人属性。奥古斯都的统治依据从三头同盟时代合法取得的违法权力变成了他凭着自己的优异品质而获得的个人权威。

奥古斯都的统治经由多年连任执政官而得到了巩固。执政官的连任并不是什么新鲜事,最著名的例子大概就是马略,他在公元前2世纪末多次连任执政官。但是,之前的例子都是为了应对危机才出现的,马略得以连任是因为国家需要他凭着出众的能力和声望来解决国家的危机。而在公元前27年,我们很难说罗马遇到了什么威胁。奥古斯都连任执政官的理由看起来似乎不太充分,他在紧急状态结束以后继续统治罗马的理由只能是他具备了特别优秀的道德品质和领导才能。但是,元老治国的核心就是分享官职以及让元老们(在同一等级内)保持平等。因此,奥古斯都依然是让元老们头疼的异常存在。

不过,把政治个人化还造成了一些别的影响。既然奥古斯都拥有的是个人的道德权威,那么他就需要让人们看到他的道德约束力和领导才能。因此,在整个奥古斯都时代,我们都能看到他在努力地以各种方式展示这些与政治挂上了钩的个人品质。他的主要手段是在战场上建功立业,但他也曾试图在宗教和家族领域显示领导能力。奥古斯都时代的罗马新秩序需要严格的约束,需要剔除那些导致了百年动荡的混乱因素,只有深刻的社会改革才能还罗马以和平。

但这种和平是有代价的,这是奥古斯都政权不愿让人了解的事情。奥古斯都政权的严格约束压制了共和国自古以来的自由。虽然听起来或许有些别扭,但一定程度上的混乱其实是民主(或伪民主)制度运行过程中不可或缺的组成部分。共和国时期的罗马政治本就是有些混乱的,竞选犹如战斗,精英之间时常爆发非常激烈的竞争。剔除旧制度当中的混乱因素等同于摧毁传统政治的一大支柱。罗马得到了和平与秩序,而代价就是失去自由,接受奥古斯都的统治。

属于军队的政权?

传统的罗马政治史偏爱讲述贵族之间的故事,但其他的政治力量同样不容小觑。元老们是在屋大维的胜利纪念品环绕下展开政务讨论的,他们每时每刻都能由此回忆起(虽然他们应该永远也忘不了)屋大维的敌人最终都落得怎样的下场。不过,最让他们噤若寒蝉的还是奥古斯都手中的军权。

奥古斯都受命掌控了许多地方省份,其中的绝大部分都驻扎着为数众多的军队。让这样规模庞大的军队继续听从奥古斯都指挥的理由是这些省份都频发战事,而奥古斯都既有崇高的威望又有充足的军事经验。当然,他分身乏术,不可能直接指挥所有地方的军队,他会派精心选出的亲信去代表他统率部队。不过,虽然有代表的存在,但奥古斯都和阿格里帕还有后来奥古斯都家族里的核心角色都长期身处地方省份,和军队待在一起。毕竟,这些军团是他们最重要的支持者。总之,奥古斯都成功地维持住了对军队的掌控。

罗马军队有着巨大的人力需求。二十八个军团共十四万的罗马男性需要离开意大利,去地中海世界的各个角落为国效力。而且,其时限长达十六年。他们的报酬是定期发放的薪水和不定期发放的奖金,虽然奖金后来也有了固定的发放规定。根据人口普查的结果,此时的罗马公民总数为四百万出头。也就是说,罗马的人力有大约百分之十一在军队里,这些人就是最直接受益于奥古斯都的统治的群体。而且,在之前的内战结束以后,有不少的老兵退伍后拿到了殖民地里的大量土地。这两部分军人相加就构成了一股人数众多、实力雄厚的势力。

除了军团以外,奥古斯都还有别的部队。在公元前27年的最终协定出台以后,他首先在意大利设立了一支卫队,其薪水是普通军团士兵的两倍。这种卫队早有先例,共和国时代的将军们也曾设立过这种部队。尤里乌斯·恺撒就有一支规模较大、发挥过许多作用的卫队。而奥古斯都的卫队很可能有大约五千人,具备比较强的实力。虽然这些卫兵大多被奥古斯都分别派往意大利的各座城市,留在罗马的人数其实很少,但这毕竟是突破了共和国时代惯例的事情,一般的执政官可不会有这样的直属军队。他们的存在非常直观地表明了奥古斯都的权力究竟来自何方,同时也说明了军队仍然是罗马政局里相当重要的一股力量。[364]

公元前27年1月的事件标志着罗马政治的程序恢复了正常,但罗马政治本身已经发生了变化。罗马政治运转的方式和不少的政治文化都保留了共和国时代的风貌,以贵族阶层的传统和价值观为核心。然而,奥古斯都还牢牢地把持着大权。不过,这种矛盾的状态至少给政治讨论提供了空间,因为奥古斯都政权需要遵守旧时代的规矩,以免奥古斯都被当作独夫。但共和国时代的规矩显然不会允许有奥古斯都这样独揽大权的人物存在。因此,在公元前27年1月,元老们并不确定自己究竟促成了什么,也说不清新的政治秩序的本质。不过,就是这种不确定为政治的发展留下了宝贵的空间。

共和国恢复以后的政治局面

公元前27年下半年,奥古斯都离开了罗马,准备开始治理他的省份。他先去了高卢,打算入侵不列颠,[365]但这个计划因故被搁置了。于是,奥古斯都转而在高卢展开了人口普查。这年末,他离开了高卢,来到西班牙亲自指挥比利牛斯山区的战事,一直到公元前24年。虽然在这期间,他都担任着执政官的职位,但他从未觉得有必要返回意大利。[366]在这场战争结束以后,他让一部分军人退役,在西班牙设立了一个殖民地。

在奥古斯都外出之时,意大利的事务看起来大多是由阿格里帕来处理的。他正忙于在罗马的战神广场上兴造建筑,其重点项目是尤里乌斯会堂(Saepta Julia)。这是罗马选民们集会表决法案、选举低级官员的场所。在为会堂命名之时,阿格里帕没有使用自己的名字,而是使用了奥古斯都的名字。同样在这一时期,阿格里帕建造了一座浴场(罗马城内耗资最多的公共建筑之一)和一个被称为尼普顿大厅(Basilica of Neptune)的建筑。这个尼普顿大厅里展示着纪念奥古斯都海战胜利的画作。阿格里帕还主持了奥古斯都的女儿尤莉亚和奥古斯都的外甥马尔凯卢斯的婚礼。此外,阿格里帕在他自己的居所遭遇火灾以后,入住了奥古斯都在帕拉提翁山上建造的宅邸。阿格里帕不只是奥古斯都的左右手,他还分享了奥古斯都的权力。奥古斯都的这幢宅邸当然已经在公元前27年得到了元老院颁发的荣誉,但随着阿格里帕的迁入,它看起来越发像是一座皇宫了。[367]

然而,奥古斯都等人面对的事情并不总是这么简单。大概在公元前25年的时候,负责治理埃及的科涅利乌斯·伽卢斯因政治斗争而丧命。当初,公元前30年下半年,屋大维在动身返回罗马之际任命他为埃及总督。这或许是个合乎实际的决定,却引发了不小的争议。伽卢斯恰巧是一位知名的诗人,但他不是元老,而是次一档的骑士。而一般说来,像埃及这样规模较大、地位重要的省份都会由元老来管辖。

伽卢斯一度忙于管理刚刚成为罗马省份的埃及。他既要在埃及主持建立罗马的统治秩序,还要前去镇压一场大规模的叛乱。到了公元前29年4月15日,伽卢斯已经在命人制作纪念胜利的铭文。他自称在十五天内制服了叛军,占领了五座城市,然后率军跨越了埃及的边界,进入埃塞俄比亚,并且在当地建立了罗马的霸权地位。[368]伽卢斯的总督生涯似乎非常成功。然而,他的敌人已经蠢蠢欲动。

一个叫作瓦列里乌斯·拉尔古斯(Valerius Largus)的人对伽卢斯发起了控告,他提出的名目让人感到有些费解。他声称伽卢斯在埃及竖立自己的雕像,还制造了吹捧他自己的铭文。这当然都有可能是真的。但按照罗马法律,这种自吹自擂的行为很难称得上是犯罪。经过一番争论,奥古斯都和伽卢斯决裂了。然后,伽卢斯又因一个含糊的罪名而遭到了起诉,他的处境变得越发艰难。虽然看起来有些不可思议,但这一回,据说伽卢斯在暗中策划革命。之后,他被定了罪,遭到了流放,他的财产被转交给奥古斯都。接着,伽卢斯自杀了。据悉,在听说伽卢斯的死讯时,奥古斯都流下了眼泪。考虑到他一生中明明杀人如麻,这种动情的表现让人不禁感到有些意外。

成功除掉了伽卢斯的瓦列里乌斯·拉尔古斯并没有享受到多少的喜悦。有一次,有一个人带着一个书写板,在一堆朋友的陪伴下靠近了拉尔古斯,然后问他是否认识自己。拉尔古斯表示他不认识。然后,这个人就叫他的那一群朋友都过来见证拉尔古斯的回答。此外,有一个名为普罗库莱乌斯(Proculeius)的人也有类似的举动,他是奥古斯都身边的圈子里的人。每次偶遇拉尔古斯之时,普罗库莱乌斯都会用手紧紧地捂住嘴巴,以此表示在拉尔古斯面前说话是很危险的。[369]

伽卢斯之死让我们得以看到奥古斯都时代早期的罗马还未完全稳定下来,奥古斯都政权随时准备动用暴力。不过,伽卢斯的政治地位不是很高,他是不可能威胁到奥古斯都政权的。也许,他说了某些不该说的话,然后被拉尔古斯汇报了上去。伽卢斯是极受奥古斯都信赖之人。如若不然,他不可能得到埃及总督这样重要的职位。但是,包括他在内,有许多人都会在接下来的这些年里逐渐发现,帝国时代有了新的规矩。无论伽卢斯究竟做了什么或者说了什么,至少他让奥古斯都感觉到有必要惩处一下这位朋友了。但就在这个时候,元老们介入了。伽卢斯不属于元老之列,他是奥古斯都政权的受益者。在当时的政治条件下,反对奥古斯都之人不会公开挑战他本人。因此,虽然元老们在斥责伽卢斯之时表现得对奥古斯都忠心耿耿,但其中某些人很可能只是想要铲除这么一个奥古斯都政权培养起来的新贵而已。

帝国时代的政治让友谊的面貌也不得不发生改变,而友谊是罗马政治文化的核心要素。因此,伽卢斯事件反映出的是一个相当重大的转变。罗马精英们向来珍视畅所欲言的自由,但伽卢斯事件宣示了言论自由的时代已经结束。无论伽卢斯受到了什么指责,支持奥古斯都的那些元老都只能附和,以此展示他们对奥古斯都的忠诚,因为此时公开站在伽卢斯那边就相当于宣布自己是现政权的敌人。而且,奥古斯都当时不在罗马,他几乎没有干涉这件事情。伽卢斯是在一种可怕而强大的推力下走向死亡的。时人或许少有察觉,但当奥古斯都表明他反对伽卢斯之时,伽卢斯就已经死了。

公元前24年上半年,在离开了将近三年以后,奥古斯都开始从西班牙返回罗马,得知此事的元老们纷纷表决同意给奥古斯都颁发更多宗教和政治领域的荣誉。这几乎要成为一项新传统了。元老们要建造一座奥古斯都和平圣坛(Altar of Augustan Peace),用以庆祝他胜利回归。奥古斯都还得到了免受法律的强制要求的权利。虽然他的权力并没有因此而增长,但这项特权进一步凸显了他的特殊地位。此外,元老们还给莉薇娅(Livia,奥古斯都之妻)的儿子提比略、奥克塔维娅(奥古斯都的姐姐)的儿子马尔凯卢斯颁发了荣誉,仿佛在宣布现在这个共和国的本质其实是君主制。提比略获许提前五年满足各项公职的年龄限制,并且即刻被选入了元老院。马尔凯卢斯刚刚和奥古斯都的唯一后代尤莉亚成婚,元老们直接任命他为第二档次的罗马官员(裁判官),将他选入元老院,同时允许他提前十年参选执政官。[370]在自相矛盾的奥古斯都的共和国里,传统的共和国官职被保留了下来,并且成了奥古斯都政权的门面。但这些官职都会被元老院交给奥古斯都的家族成员,其唯一理由就是褒奖这位实际上的君主。

奥古斯都政权矛盾的本质引发了人们的不满。他宣布了共和国已经得到恢复,却依然手握重权,他的家人甚至也得到了荫庇。所有人,尤其是元老,都不可能不知道这两种现象是互相矛盾的。罗马的保守政治文化不停地受到挑战,人们越来越无法忽视奥古斯都的本质是一位专制君主。当奥古斯都的共和国在公元前27年1月被创造出来之时,人们肯定能够清晰地看到这一点。后来,奥古斯都没过多久就离开了罗马,让这种本质得到了些许的掩盖。然而,随着他的归来,人们不可能再假装这个共和国还是以前的那个共和国。在接下来的两年内,这种潜在的不满情绪会酝酿出一场巨大的政治危机。

奥古斯都共和国的危机

公元前23年1月,年仅三十九岁的奥古斯都第十一次就任执政官。他已经史无前例地连任了九年。在这一年较早的某个时候,罗马又一次受到了周期性流行病的侵袭。病魔当然不会在意人的社会地位。奥古斯都患病了,而且一时之间高烧不退,他的身体渐渐衰弱下去。看起来,他很有可能会一命呜呼。于是,他把亲信和一些国家官员召到了身边,然后把各种官方文档交给了另一位执政官,其中包括了详细的军队部署和财政方面的记录。由此可见,另一位执政官之前无权查看这些文档。奥古斯都还把自己的玺戒交给了阿格里帕。这些举动显然意味着他决定把民政权力交还给正规的国家官员,同时让阿格里帕成为他的私产及政治继承人。[371]

随着病情的恶化,奥古斯都的医生安东尼乌斯·穆萨(Antonius Musa)变得越来越焦急。最后,他拖着奄奄一息的奥古斯都去洗了一次冷水浴,这种快速降温的手段居然成功地让奥古斯都退了烧。

这次疾病让许多人开始考虑奥古斯都死后会发生什么事,元老们议论纷纷。有人说,他打算把整个国家交给他指定的继承人。当然,从法律规定和实际运作来看,共和国是不可能被这样转交出去的。共和国的官员依法掌权,也势必要依法卸职。奥古斯都固然可以像恺撒一样指定私产继承人,但这种做法不是转移政权所属权的公认程序,其政治意义是不明确的(虽然无疑会有很大的意义)。但如果指定下一任的国家领导人,那就相当于宣布罗马现在就是一个君主国。

对于这种传言,奥古斯都不得不做出回应。他带着遗嘱来到了元老院,提议宣读遗嘱。没有人表示同意。首先,要求他读遗嘱会显得自己不信任这位权势滔天的大人物。其次,他既然主动提出要宣读遗嘱,那么肯定不会读出什么不利于他的内容。而且,遗嘱是私密的文件,理应在订立者死后才公之于众。罗马人会在遗嘱里把财产分享给朋友,表明自己是个忠于友谊之人。换言之,遗嘱是订立者对自己的社会关系的确认。因此,罗马人认为遗嘱是非常重要的。元老们当然不愿意公然强迫奥古斯都宣读这么一份私密之极的文档。

此时,有可能成为“皇位继承者”的有两个人:其一是年纪尚轻的马尔凯卢斯,其二是奥古斯都的政治伙伴阿格里帕。如果以后来的皇位继承案例来看,即使马尔凯卢斯没有官职,也没有政治、军事经验,他也应该会继承皇位,因为他既是和奥古斯都关系最近的男性亲属,也是奥古斯都的女婿。但奥古斯都向来不感情用事。就算是在公元14年,他似乎也计划着让权力最大、经验最足因而也最有个人权威、最有政治影响力的亲属来成为第一位接手皇权之人。因此,在公元前23年的政治环境下,奥古斯都只会选阿格里帕来当他的政治继承人。

阿格里帕是一位身经百战的将军,他也在罗马城内主持兴造了许许多多的建筑。而且,中下层的罗马人看起来也很爱戴他。当奥古斯都身处西班牙的时候,阿格里帕实际上在代表奥古斯都管理罗马。虽然他的家族背景比不上马尔凯卢斯,但马尔凯卢斯的经验毕竟太少。阿格里帕得到大多数军人拥护的可能性要大得多。况且,奥古斯都把玺戒传给了阿格里帕。

我们不清楚传玺戒这种举动究竟具有怎样的意义,当时的罗马人或许也难以做出定论。但至少,这种举动留下了政治猜想的空间。阿格里帕起码由此得到了某种超然于法律之外的权威。看起来,玺戒的传承意味着就算奥古斯都去世了,罗马也不会脱离恺撒派势力的掌控,因为阿格里帕会凭此而有权继承奥古斯都的政治地位。不过,如果说奥古斯都政权还能勉强算是共和国历史上的例外,那么其继承者的出现就让它显得不像是例外,而是某种可以承继下去的制度创新了。随着罗马的政局变得越来越紧张,阿格里帕便奉命到东方去替奥古斯都巡视诸省,他又一次成了奥古斯都的代表。阿格里帕的东方之行就好像是奥古斯都之前去高卢和西班牙逗留的那三年。他的离去让他得以完全避开旁人的攻击,也让那些执着于共和制度的元老暂时安分下来。[372]

奥古斯都正承受着不小的压力。公元前23年7月,他离开了罗马,到城郊的一处圣地去庆祝“拉丁节”(Feriae Latinae)。7月1日,奥古斯都在节庆上辞去了执政官的职务,指定卢奇乌斯·赛斯提乌斯(Lucius Sestius)为继任者。赛斯提乌斯是布鲁图斯的拥护者,而且一直到现在都还在公开地赞扬布鲁图斯诛杀暴君的行为。这个继任的人选不太可能没有经过事先的挑选。奥古斯都想要由此来表明共和国真的恢复了,现在的政府就是正常的共和政府。

然而,实际情况依旧有别于共和国时代,奥古斯都取得了保民官的权力(tribunicia potestas)。他并没有担任保民官,但他拥有保民官的权力和职责。由此,奥古斯都宣示了属于他的政治版图。他会按照宪法和法律,以人民守护者的身份限制执政官的权威。从某种意义上来说,这一举动让奥古斯都政权回到了三头同盟时代。当时,后三头提出的兴兵理由就是反对一小撮元老侵犯人民的权利。而现在,元老们或许成功地让奥古斯都交出了执政官的职位,但他不会就此放弃。

正当政坛上风云变幻之时,奥古斯都不幸遭遇了一场悲剧:马尔凯卢斯病逝了。他也和奥古斯都一样发了烧,穆萨也试着给他洗了冷水澡,但这一次没有见效。就算阿格里帕会在奥古斯都万一病逝之时继承他的政治地位,马尔凯卢斯也仍然是未来的继承者,他的死亡让奥古斯都失去了所有的男性近亲。马尔凯卢斯得到了火葬,其骨灰被放在了台伯河畔战神广场上的奥古斯都陵墓当中。这座陵墓会成为奥古斯都的家族公墓,在未来的五百年里纪念着罗马的第一代皇室(请参考图7)。卡皮托里翁山脚尚未完工的一座剧院被命名为马尔凯卢斯剧院(请参考图8),即使经过了两千年的岁月洗礼,这幢宏伟的建筑也依然让人惊叹不已。

四五年后,维吉尔的伟大作品《埃涅阿斯纪》问世了。这是一部讲述罗马建城史的神话史诗,其中有一幕预言的场景。维吉尔让主人公埃涅阿斯来到地下世界,看到了一系列领导着罗马从难民聚居的小城镇成长为征服世界的大帝国的英雄人物。[373]想来,维吉尔最后应该会以光荣无限的奥古斯都时代收尾,他在其他的预言场景里确实就是这么安排的。但在这一幕当中,最后出现的是年轻的马尔凯卢斯的亡魂和他在战神广场上的葬礼。这一幕的寓意是,马尔凯卢斯原本会成为最伟大的罗马人,但就连诸神都为此感到嫉妒,出手夺走了他的性命,让罗马人民失去了一位英雄。一般说来,很多人都青睐于英年早逝、天妒英才之人,畅想着这些人如果活了下来,实现了自己的抱负,是否能够解决所有的问题,让历史产生“另一种可能”。但是,维吉尔为马尔凯卢斯所写的这个故事看起来和皇室很有关系,尤其是考虑到马尔凯卢斯在去世的时候还根本没有取得什么值得一提的成就(和其他的这类人物不太一样,比如,遇刺的美国总统约翰·F.肯尼迪)。

尽管奥古斯都政权能够大张旗鼓地纪念去世的马尔凯卢斯,把他的名字长久地留在罗马的建筑上,但奥古斯都对权力的掌控开始显得有些脆弱了。马尔凯卢斯之死震动了奥古斯都政权。如果说维吉尔是抓住了一些民众在马尔凯卢斯的葬礼上表露出来的态度,那么这场葬礼本身则清晰地宣示了罗马已经拥有了君主制的基础。许多人,尤其是平民表现得仿佛罗马已经是一个君主国了。虽然公众表达出这样的态度可以算是对奥古斯都政权的支持,但同时也有一定的风险。参加了葬礼、目睹了这一切的元老们都知道马尔凯卢斯还只是个孩子,他们会清楚地意识到奥古斯都交给他们的这个所谓恢复了的共和国里有一个皇室一般的家族存在,公众为这样一个年轻而几乎没有政治经验的男孩深切哀悼,正是证明了他们愿意让这个家族以皇室之身统治国家。奥古斯都共和国的君主制与共和制并存的双重性质暴露无遗。

我们或许可以把奥古斯都辞去执政官职务的举动视为一场正在发展的政治危机的结果,把阿格里帕派去东方可以让他免于和元老们发生正面冲突,也表明了阿格里帕在帝国政权中的独特地位。可是,皇位继承人和恢复了的共和国格格不入,就连奥古斯都本人也是如此。如果共和国真的已经恢复,那么辞去了执政官职位的奥古斯都就没有理由再留在罗马了。他本就有治理地方省份的职务在身,逗留于罗马只会让他成为君主制本质的最好证明。自公元前49年恺撒渡过卢比孔河以来,元老院的权力一直都处在个别强权人物的严重干扰之下。假如元老们真的能够让奥古斯都离开罗马,他们就可以重获久违的统治地位。

虽然现在的政治局势已经比较复杂了,但奥古斯都还遇到了一场和政治密切相关的审判。这次审判的直接当事人是曾经担任马其顿总督的马尔库斯·普里穆斯(Marcus Primus),他应该是克拉苏的继任者。马其顿是个战事频发的省份,普里穆斯在任期间和当地的奥德吕赛人(Odrysians)发生了冲突。他的作战很成功,但他在作战之时率军跨越了自己的省界。严格说来,这是违法的。大约在公元前23年,他回到了罗马,然后立刻就受到了指控。普里穆斯和奥古斯都走得很近,他掌控的马其顿是一个很重要的省份,驻扎着大量的军队。奥古斯都显然不太可能会坐视一个有可能与自己为敌的人掌握马其顿。为普里穆斯辩护的就是和奥古斯都关系很亲密的卢奇乌斯·穆雷纳(Lucius Murena)。不过,普里穆斯的自辩词有可能给奥古斯都带来一些麻烦,他声称自己走出省界是因为马尔凯卢斯(这大概会被看作在代奥古斯都传讯)或奥古斯都本人传来了命令,要求他这样做。

然而,不管是奥古斯都还是马尔凯卢斯都没有下达这种命令的权威。以罗马的标准来看,马尔凯卢斯只是一个没有任何公职的男孩,他没有权力对普里穆斯这样的高级官员下达指示。奥古斯都其实也不行。如果马尔凯卢斯确实参与其中,他的角色会十分有力地证明奥古斯都真的正在罗马共和国里打造一个凌驾于法律之上的王朝。如果传讯的是奥古斯都本人,情况会稍微好一些,因为奥古斯都当时还是执政官。但这还是有君主制作风的嫌疑,因为共和国的军事政策向来由元老院掌握。

这是一场政治审判,其目的是摧毁普里穆斯的政治生涯。如果陪审员们对他的说法表示认可,宣判他无罪,那么这几乎相当于宣布奥古斯都和马尔凯卢斯就是事实上的皇族。如果他们表示反对,那么和奥古斯都交好的普里穆斯的政治前途就毁了。让所有人深感意外的是,奥古斯都出庭做证了。他宣称普里穆斯所说的命令根本就不存在。穆雷纳大怒,他质问是谁传唤奥古斯都出庭的,奥古斯都又为什么要以证人的身份出庭。奥古斯都回答:“共和国。”他在捍卫共和国。然而,其实他只是想要保护不宣之秘。他不能让所有人都知道自己无视了元老院的权威,遑论马尔凯卢斯分享权力之事。接着,陪审员们开始表决。一些人投了无罪票,但大多数人并不打算用这种方式来宣布奥古斯都在撒谎。普里穆斯完了。[374]据我们所知,普里穆斯是奥古斯都的朋友,他肯定也和帝国的其他高层人物有着非常密切的关系。然而,奥古斯都依旧选择了牺牲他的政治前途。

不久以后,大概在公元前22年,有人密谋刺杀奥古斯都。我们永远也不可能了解这种阴谋的真相。不过,这次的两个主要嫌疑人分别是法尼乌斯·凯皮奥(Fannius Caepio)和上文提及的卢奇乌斯·穆雷纳。他们都在被捕之前逃离了罗马。于是,他们遭到了缺席审判。负责此案的是莉薇娅(奥古斯都的妻子)之子提比略,[375]这也是他第一次处理重要的公务。但即使是在这种情况下,提比略也无法让陪审员们一致同意判决有罪,这使得奥古斯都出台了法案,规定今后每一位陪审员的判决都会被公之于众。这项新法非常有助于让各位陪审员在这种政治审判中做出有罪的判决。凯皮奥在逃亡途中被抓回了罗马遭到处死。之后,凯皮奥的父亲解放了所有在凯皮奥的逃跑路上出手保护过凯皮奥的奴隶。某个背弃凯皮奥的奴隶则被人在脖子上挂上了告示牌,带到罗马广场上示众,最后被钉死在十字架上。凯皮奥的父亲这是在向所有人宣布他相信凯皮奥无罪,同时也表达了他对奥古斯都的抗议,暗指奥古斯都蓄意杀害凯皮奥。

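By contrast, we are not really sure how Murena died. Murena was well connected, and his family was close to the top of the regime. His brother Proculeius was the man who had personally captured Cleopatra, and he was a friend of Cornelius Gallus. Murena's sister Terentia was the wife of Maecenas, who was in turn one of Augustus' closest confidants. Terentia is, moreover, generally thought to have been Augustus' mistress. Another brother of Murena was due to be consul in 23 BC but died just as he was about to take office.[376] Yet another brother had commanded an army in the Alps some years earlier.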

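The fates of Primus and Murena (and perhaps Gallus too) showed clearly that Augustus would abandon friends for political ends. Those who had worked with Augustus for a long time can hardly have been unaware that he was capable of such ruthlessness, but Romans still generally held that their political order rested on networks of personal friendship and mutual obligation. The fall of Murena was especially shocking, and it divided Augustus' followers. Maecenas told Terentia that her brother was under investigation, and Terentia then warned Murena and urged him to flee.[377]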

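Maecenas and Terentia may have been acting as family members who help one another, but at that time family and politics were all but inseparable, and their conduct could be seen as a betrayal.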

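In these two major political trials, at least some of the jurors showed that they did not trust Augustus. The regime had bared its fangs and achieved certain of its aims, but the price was that many people now saw that the Republic had not in fact been restored. Some senators began to show open signs of resistance, and the Augustan Republic, which had begun in 28 BC, looked to be nearing its end.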

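Augustus still had options: he had troops and money. But using the army would plunge Rome into civil war again and would lead most people to regard him as a tyrant. In terms of the substance of power (money and soldiers), Augustus had the upper hand. The senators understood that he commanded vast political resources, and so they were willing to acknowledge his eminence. Yet the history of the late Republic shows that senators never gave up their principles on that account. They believed in their own authority and traditions, believed they had the right to rule the state, and believed that senatorial government was the only way forward for Rome. For Rome to endure, Augustus needed to reach an accommodation with the senators. It was a political contest of mutual intimidation, in which one side's trump card was assassination and the other's was a return to full-scale civil war. Be that as it may, in 22 BC Augustus was preparing to leave for the provinces. In these circumstances it was inevitable that some senators would think their side was gradually winning and that Augustus' power was in decline.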

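But three years later Augustus came back. By then his position had become unassailable, and the senators who had thought victory was near would learn once again, at first hand, how hard and unforgiving political struggle could be.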

Chapter 14: The Augustan Republic

[363] Res Gestae, 34.

[364] Dio, 53.11.

[365] Dio, 53.22.

[366] Dio, 53.28; Res Gestae, 12.

[367] Dio, 53.23; 27.

[368] Friedhelm Hoffmann, Martina Minas-Nerpel and Stefan Pfeiffer (eds.), Die dreisprachige Stele des C. Cornelius Gallus: Übersetzung und Kommentar (Berlin and New York: Walter de Gruyter, 2009).

[369] Suetonius, Augustus, 66.2; Dio, 53.23-24.

[370] Res Gestae, 12; Dio, 53.28.

[371] Dio, 53.21.

[372] Dio, 53.22.

[373] Aeneid 6, 752-885.

[374] Dio, 54.3; Velleius Paterculus, 2.91.

[375] Suetonius, Tiberius, 8.

[376] The story of Murena's brother Varro is complicated, and some texts do not include him in the list of consuls. The Fasti Capitolini, however, record Varro Murena as consul in January of 23 BC. Something then happened to him (the inscription is damaged, so we cannot tell what).

[377] Suetonius, Augustus, 66.3.

Chapter 15: Chaos in Rome and the Power of Augustus

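Consular elections usually began in the middle of the preceding year. Augustus, who had just resigned the consulship of 23 BC, did not stand for the consulship of 22 BC, and responsibility for governing Rome passed into other hands. Although he may have made plans to do so, Augustus did not follow Agrippa to the provinces. For the first time since 32 BC, the senate performed the traditional New Year oaths and sacrifices under two consuls neither of whom was Caesar.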

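The year 22 BC got off to a bad start. The winter storms were severe; the Tiber burst its banks; Rome itself was battered by thunderstorms, and some famous statues were struck by lightning; the floods brought disease; and the city's grain reserves ran out, leaving Rome to suffer hunger.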

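The plebs rioted. They seized the Forum, besieged the senators in the senate house, and demanded that the senators make Augustus dictator, threatening otherwise to burn the building down. The consuls' lictors, carrying the fasces, were taken out of the senate house by the crowd and marched off to find Augustus. The two consuls were thus stripped of the symbols of their authority. When the crowd found Augustus, they begged him to become dictator and take charge of Rome's grain supply. Augustus theatrically tore open his clothes and bared his chest, indicating that if he took the dictatorship he would be assassinated. In the end, however, he agreed to deal with the grain supply. He was, after all, the protector of the plebs and their refuge in time of need. Five days later the granaries were full.[378]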

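Augustus had always taken care to maintain his relationship with the Roman plebs. From the day he entered politics he worked to present himself as the defender of plebeian interests, seeking to associate himself with the powers and responsibilities of the tribunes and to become the representative and protector of the people. As early as 36 BC he adopted some of the symbols of a tribune. In 23 BC, at the same time as he laid down the consulship, he also obtained the tribunician power. In this way Augustus made it clear that he would go on protecting the interests of the people even though he was no longer the leader of the senate.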

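Augustus was never reluctant to use money to demonstrate where he stood. Drawing on the legacy of Julius Caesar, he paid a bounty of 300 sesterces a head to at least 250,000 people. In 28 BC he gave 400 sesterces a head to a group of similar size to commemorate the victories at Actium and Alexandria. In 24 BC he returned from Spain and once again made a gift of 400 sesterces a head. A sum of that size would probably support two people in Rome for a year at a fairly low standard of living. In 23 BC Augustus provided every resident of Rome with twelve grain rations, and again the recipients numbered at least 250,000.[379]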

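The economic benefits that Augustus gave directly to the inhabitants of the city of Rome had reached a very large scale. The three bounties came to 75 million, 100 million and 100 million sesterces respectively, and the grain he bought in 23 BC was worth 40 million sesterces.[380] In other words, in the six years after the battle of Actium, Augustus spent 240 million sesterces (the two later bounties plus the grain) in direct aid to 250,000 Roman plebeians. Every registered Roman male received 960 sesterces, at a time when bare subsistence for one person cost 200 to 300 sesterces a year. Augustus, then, gave considerable help to Rome's poor households.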
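The totals in this paragraph can be checked with a few lines of arithmetic. The following is a minimal sketch in Python that uses only the figures quoted in the text (250,000 recipients, bounties of 300, 400 and 400 sesterces a head, and grain worth 40 million sesterces). The variable names are illustrative, and reading the 240 million as the two later bounties plus the grain is an inference from the totals, not something the text states in so many words.

```python
# Figures quoted in the text; all amounts are in sesterces.
RECIPIENTS = 250_000                   # minimum number of recipients
BOUNTIES_PER_HEAD = [300, 400, 400]    # Caesar's legacy, 28 BC, 24 BC
GRAIN_COST_23_BC = 40_000_000          # value of the grain bought in 23 BC
SUBSISTENCE_PER_YEAR = (200, 300)      # bare subsistence for one person

# Total cost of each bounty: per-head amount times number of recipients.
bounty_totals = [per_head * RECIPIENTS for per_head in BOUNTIES_PER_HEAD]

# Assumption: the six years after Actium cover the two later bounties
# and the grain, but not the first bounty paid from Caesar's legacy.
post_actium_total = sum(bounty_totals[1:]) + GRAIN_COST_23_BC
per_head_total = post_actium_total // RECIPIENTS

# Years of bare subsistence that the per-head total would cover.
years_at_high_cost = per_head_total / SUBSISTENCE_PER_YEAR[1]
years_at_low_cost = per_head_total / SUBSISTENCE_PER_YEAR[0]

print(bounty_totals)
print(post_actium_total, per_head_total)
print(years_at_high_cost, years_at_low_cost)
```

On these figures the per-head total of 960 sesterces corresponds to somewhere between three and five years of bare subsistence for one person, which is the scale of help the paragraph describes.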

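But the money Augustus injected into the Roman economy went far beyond this. He and Agrippa carried out a building programme on an immense scale. Many free Romans needed work, and in an age before mechanization, haulage and construction provided a very large number of jobs.[381] In normal circumstances between 10 and 18 per cent of the male urban population worked in the building trades.[382] But the first decades of the imperial age were not normal: Agrippa and Augustus were transforming the face of Rome. Money that reached the plebs as wages was, of course, not as easy or direct as the bounties described above. Still, workers of every kind (those who cut and transported stone, those who carried timber and bricks, bricklayers, decorators, makers of divine images, makers of metal fittings, and those who made and repaired building tools) could readily see that these great projects owed their existence largely to Augustus. And this is without counting the sellers of food and other goods, who were the second largest group of beneficiaries. If Augustus were gone, the jobs that kept them alive would disappear with him.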

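The new projects included many buildings intended for display or commemoration, such as theatres, triumphal arches, monuments, temples and Augustus' own palace, but some were highly practical. Agrippa devoted himself to aqueducts. He repaired and improved the Aqua Marcia and built the new Aqua Julia, Aqua Virgo and Aqua Alsietina, increasing the city's water supply by more than 50 per cent.[383] Water is a vital resource. Fresh, clean water benefits every inhabitant of a city, rich or poor. Rome's population had grown substantially over the preceding century and had far outstripped the capacity of the existing infrastructure. A shortage of water inevitably meant that residents were forced to use dirty water, had to ration their use, and spent a good deal of time and effort finding and carrying usable water. Aqueducts were the major structures crossing the Italian countryside at that time, carried over many valleys on arches, and everyone who benefited from the new aqueducts could feel directly that Agrippa had made a real contribution to the Roman people. The aqueducts amounted to a political statement on their part: nobody else could help the plebs of Rome on so extraordinary a scale.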

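Historians from the elite were in the habit of dismissing this kind of imperial policy as “bread and circuses”, making it look like the buying of popular favour (that is, a form of corruption) or a sedative designed to make the masses ignore the political situation. But Roman society at the time was extremely unequal. In such a society many people were very poor, and making a living was a real problem for them. Agrippa and Augustus showed by their actions that they were willing to use the resources of the state to help the plebs and raise their standard of living substantially. Between 28 BC and 23 BC the two of them poured astonishing quantities of resources into the welfare of the people. No other senator, nor even the senate as a whole, could match them.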

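The basic logic of this politics of the belly is simple: the poorer the people as a whole, the more they crave subsidies, and the more they support whoever provides them with money, food and water. For the poor, the ideal situation is one in which the political elite feel that they are in some sense dependent on the poor and so take the initiative in winning their support through social provision. The enormous resources Augustus invested in the Roman plebs amounted to an acknowledgement that Roman citizens had a right to share in the wealth of the state. It would nevertheless be naive to regard this as selfless generosity. Agrippa and Augustus were indeed trading these resources for the support of the plebs. Evidently they did so in order to have the plebs on their side in any future conflict with the senators. They did not yet know exactly what conflict they would face, but a wise politician always prepares for a rainy day.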

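To spend vast sums on winning the affection of the plebs was in effect to bring the mass of the plebs into Augustus' personal network, so that army and plebs alike became his followers. In sharp contrast to Augustus, many senators simply followed long tradition and looked on the Roman plebs with contempt. This was mainly because they generally believed that their position depended on their aristocratic peers and not on the impoverished plebs. Augustus and Agrippa, however, knew that senators were unreliable allies. From 22 BC to 19 BC Augustus would reap the return on this huge investment.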

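In 24 BC Augustus distributed a cash gift to the Roman people. It was rather too large to be regarded as a mere tip, and it appears that he spent the money with political intent. In 23 BC Augustus supplied the Roman people with extra grain. This act of generosity did not commemorate any political event. Augustus seems simply to have wanted to help make up the shortfall in the grain supply caused by the previous year's poor harvest in Italy. A poor harvest generally pushed up grain prices on the Roman market, which could leave some citizens hungry and so lead to political unrest. We have no information from which to estimate the harvest of 23 BC, but probably early in 22 BC the Tiber valley was flooded, and exceptionally heavy rain may have destroyed the early crops. In these three consecutive years the Roman people lived through two or even three poor harvests.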

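Roman agriculture was quite sophisticated. Roman farmers grew a wide variety of crops, did what they could to guard against certain agricultural risks, and bought useful tools. They had equipment for making wine and pressing olive oil, and the means to store and transport food. But agriculture everywhere in the world struggles to withstand abnormal rainfall and temperatures, and this is especially true around the Mediterranean. Too much or too little rain prevents crops from growing normally. As a rule there would be an ordinary poor harvest about once every four years, and once every ten years or so there would be an almost total crop failure. A run of poor harvests would also leave farmers without the time and resources to restore normal production. In extreme cases they were even forced to leave their land for a time and go elsewhere in search of food and work.[384] And for such refugees the most obvious destination was the city of Rome.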

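The Roman people had successfully used their political power to make Rome's political elite provide the city with a grain supply at a stable price. The policy began in the late Republic and continued throughout the imperial period. But this grain could not meet the needs of the whole urban population. The beneficiaries probably numbered only about 200,000 men, and their families, together with the many people who were not on the lists, had to rely on the grain market to survive.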

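Suppose that a farmer with a small holding sells half his crop and eats the other half in an average year. In a year when the harvest falls by half, he will have to keep the whole of that year's crop for his own consumption. If a farmer normally sells seven-tenths and eats three-tenths, then when output is halved he must eat six-tenths of that year's harvest and sell only the remaining four-tenths. What the market sees, however, is that the grain he brings to sale has fallen from seven-tenths of a normal harvest to just two-tenths. Even a modest fluctuation in agricultural output therefore has a severe effect on the urban food supply. Large landowners faced a similar situation, because in a poor year they had to devote a higher proportion of the crop to feeding their tenants.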
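The farmer's arithmetic can be made explicit with a small model. The following Python sketch assumes, as the paragraph implies, that a household's own consumption stays fixed and that only the remainder is sold. The function name `marketed_surplus` and the normal harvest of 100 units are hypothetical, chosen so that the results read as percentages of a normal year.

```python
def marketed_surplus(normal_harvest, own_consumption, harvest_factor):
    """Return the grain left to sell once the household has fed itself.

    normal_harvest  -- output in an average year (any unit)
    own_consumption -- grain the household eats each year (same unit)
    harvest_factor  -- this year's harvest as a fraction of normal
    """
    harvest = normal_harvest * harvest_factor
    # Assumption: the household eats first and sells only what is left.
    return max(harvest - own_consumption, 0)


NORMAL = 100  # hypothetical normal harvest, so units read as per cent

# Farmer who normally sells half and eats half.
half_normal = marketed_surplus(NORMAL, 50, 1.0)
half_bad = marketed_surplus(NORMAL, 50, 0.5)

# Farmer who normally sells seven-tenths and eats three-tenths.
big_normal = marketed_surplus(NORMAL, 30, 1.0)
big_bad = marketed_surplus(NORMAL, 30, 0.5)

print(half_normal, half_bad)
print(big_normal, big_bad)
# Share of usual sales that still reaches the market in the bad year.
print(round(big_bad / big_normal, 2))
```

Under these assumptions a halved harvest removes the first farmer from the market entirely and cuts the second farmer's sales from 70 units to 20, a fall of about 71 per cent in what reaches the city from a 50 per cent fall in output.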

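In addition, both smallholders and large landowners would generally want to raise prices in a bad year to make up for that year's losses. Since the supply of grain really had fallen, prices would inevitably rise as they wished, but in such circumstances the grain market could collapse altogether. Farmers (or, more probably, grain merchants) would think carefully about when to bring grain to market and at what price to sell it. Even with only a rudimentary grasp of the law of supply and demand, they were quite likely to seize the chance to push prices very high. Only two things could happen next, and both were bad. Either the grain would go unsold because buyers were unwilling or simply unable to pay such high prices, or the grain would be sold at an inflated price, which encouraged people to hoard and drove prices up further. We might then see grain sitting in the granaries, and even grain in the market (for those who could pay), while many people went hungry.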

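By providing grain to the inhabitants of Rome in 23 BC, Augustus showed that he recognized their right to food and declared that the Augustan Republic was willing to provide its people with the necessities of life. He bought food and distributed it. The farmers got their money and the people of Rome got their food. In 22 BC, however, Augustus was no longer consul, and the two consuls who succeeded him took no action to deal with the grain shortage. Perhaps they lacked the funds, perhaps they did not see the people's need, or perhaps they were simply unwilling to act. The senators were, after all, large landowners who had never had much sympathy for the desperate poor, and they may well have shared the outlook of the grain merchants. The Roman plebs could work this out for themselves. In the uprisings provoked by food shortages in classical antiquity, the plebs always directed their attacks at wealthy landowners, because they believed these landowners were deliberately hoarding grain in order to profit from the people's misery.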

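The two consuls had neither an army nor a police force. Clearly, the sudden appearance in the Forum of tens of thousands of hungry, angry plebeians who supported Augustus would have put the consuls under enormous pressure. Yet a few thousand might have been enough to trap the senators and force them to give way. The only person in a position to stop the crowd easily and protect the senators was Augustus, because he had his own guard. He appears, however, to have been disinclined to send his guards to the Forum to protect the senators and suppress a crowd that wanted him to be their saviour.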

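Trapped in the senate house, the two consuls gave up the lictors who symbolized their authority. The crowd brought the lictors before Augustus (who must have been in the city of Rome) and asked him to become dictator. The crowd had not, of course, been assembled by any regular procedure, and it was not a legally constituted body like the plebeian assembly. But the first principle of the republican system was the sovereignty of the people, and this event plainly expressed the people's will. Politically, Augustus could well have followed the wishes of the people and accepted the dictatorship. The legal details could no doubt have been sorted out, and the senators confined in the senate house would probably have agreed very quickly to approve his appointment. Instead Augustus bared his chest and refused the crowd's request in this theatrically exaggerated manner.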

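The world of that time had no microphones and no mass media, and communicating with a large, angry crowd did require exaggerated gestures to get one's meaning across. It was a kind of political mime. Augustus' meaning was clear: to accept the dictatorship would be his death. We might interpret this as showing such deep respect for the constitution that he would sooner die than be dictator. This reading also fits the image of Augustus that many people hold, that of a constitutional reformer trying to strike a balance between his own power and Roman tradition. Later, Augustus himself implied that his refusal had been an act of modesty in keeping with Rome's moral traditions.[385]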

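This view, however, is hard to accept. By baring his chest Augustus was most probably indicating that he would go the way of the previous dictator, Caesar, and be murdered by the senators.[386] He wanted people to believe that he was on the side of the plebs, that he was the protector of the people, ready to stand up to the senators who threatened them all. It was precisely as protector of the people that he once again stepped in to deal with the grain supply and intervened in the government of Rome. Augustus' modesty was the modesty of a great man: he refused the dictatorship because real power did not lie there. The refusal was also the product of careful calculation. To accept the dictatorship would confirm his opponents' criticisms, set politics back to where it had stood at the end of the triumvirate, destroy the Republic that Augustus had restored, and quite possibly lead to highly undesirable consequences.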

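Augustus solved the problem of the grain supply. To do so he created a new office responsible for it. With his intervention Rome's grain supply returned to normal in less than five days. Such remarkable speed invites reflection. Even if Augustus had set to work the moment he accepted the request and sent word to the provinces asking for grain (above all Africa, Sicily and Sardinia), his letters could hardly have reached their destinations within five days. What is more, the provinces would then have had to arrange ships for an emergency shipment at short notice, load the grain, carry it to Puteoli or Ostia, and then move it on to Rome by cart or barge. This whole process would normally take several weeks. How, then, did Augustus manage to end the grain shortage within five days?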

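There are several possibilities. The first is a conspiracy theory. Since Augustus controlled Sicily, a major grain-producing region, large quantities of grain were in his hands. As the crisis deepened, Augustus guessed that the senators would be unable to resolve it and deliberately reduced the amount of grain entering the market. The second possibility is that Augustus succeeded in persuading the grain merchants, farmers and landowners of Italy to abandon their plans to hoard and profiteer. It was, after all, always very risky to refuse a request from Augustus. A further possibility is that once Augustus announced that he would take charge of the grain supply, those holding stocks naturally assumed either that he would requisition them or that he would quickly find grain from other sources to supply the Roman market. Either way, prices would soon return to normal. In other words, now was the time to sell.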

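Whatever Augustus actually did, the grain crisis did come to an end. The speed with which he resolved it probably also strengthened the plebs' conviction that the senators were either incompetent or malicious, and had artificially raised prices purely for their own gain. In short, at the beginning of 22 BC the Roman political scene was once again polarized. In the course of the crisis Augustus demonstrated that he really was the defender of the people's rights, prepared to step forward on their behalf against the senators.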

The Strategy of Absence: An Augustan Republic without Augustus

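As the middle of the year approached, Augustus left Rome for Sicily. His departure did not bring peace to Roman politics. After the violence of the grain riot and the conspiracy of Caepio and Murena, Rome was in deep disorder.[387] The next flashpoint was the consular elections, which must have been postponed until later in the year, because Augustus was certainly not in the city when they were held.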

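Violence broke out during the elections. That was not unusual in itself, but this time the cause was not rivalry between the candidates but rioting by supporters of Augustus, who was not standing. The consuls were elected by the assembly of Roman citizens organized in centuries (centuriae), who also had to file across the “bridges” in order when they voted. Such an assembly could get out of control, because although the presiding magistrate would do his best to keep order, his voice could be drowned out by the voters. This time the voters disrupted the normal course of the assembly. 他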