作者: deepoo

-

余少祥:论社会法的本质属性[节]

一、体现社会法本质的基本范畴

范畴及其体系是衡量人类在一定历史时期理论发展水平的指标,也是一门学科成熟的重要标志。社会法的基本范畴是社会法的概念、性质及结构体系等内容的本质体现,这是当前学术界研究相对薄弱的环节。社会法的基本范畴经历了从社会保护、社会保障到社会促进,从生存性公平到体面性公平的演变,体现了社会法不同于其他部门法的本质特征。

(一)国内立法史视角

一直以来,我国社会法的基本范畴都是社会保护,主要体现为对特定弱势群体的生活救济和救助。到了近代,开始探索社会保障制度。新中国成立尤其是新时代以来,社会促进逐渐成为社会法的新追求。

在我国古代,虽然没有系统的社会法制度体系,但很早就有关于社会救济的思想和行为记载,如《礼记·礼运》提出“使老有所终,壮有所用,幼有所长,鳏寡孤独废疾者,皆有所养”;《墨子》主张“饥者得食,寒者得衣,劳者得息”。在制度方面,《礼记·王制》言及夏、商、周各代对聋、哑等残障人士“各以其器食之”。在西周,六官中地官之下设大司徒,专门负责灾害救济。春秋战国时期,增加了“平籴、通籴”等措施。两宋之后,居养机构发展较为完善,有福田院、居养院等多种形式。此外,还有用于赈灾的名目众多的仓储体系,如汉有常平仓,唐有义仓,两宋有惠民仓、社仓,元有在京诸仓、御河诸仓,明有预备仓等。但总体上看,这些救助措施均非法定义务。统治者赈灾济困乃是一种怀柔之术,是为巩固皇权的收买人心之举,与现代意义的社会法相距甚远。

我国真正开启社会立法的是北洋政府。清末搞得沸沸扬扬的修宪和制订法律的活动,催生了民法、刑法等一批法律法规,却没有一部关于社会救济和保障民众生活的法律。1923年,北洋政府颁布《矿工待遇规定》,首次引入“劳动保险”概念,可谓我国社会法的破壳之作。可惜,这些法令因战乱和时局动荡刚实施便很快夭折。南京国民政府建立后,先后颁布《慈善团体监督法》《救灾准备金法》《最低工资法》等。从抗日战争起,以国民政府社会部成立为标志,社会立法渐趋完备。1943年《社会救济法》颁布,奠定了民国社会法的基石。这一时期,《社会保险法原则》《职工福利社设立办法》等先后公布,为探索社会保障进行了有益尝试,社会法发展开始迈入现代化门槛。但由于内战不断、政局不稳、政令不畅,加上官僚买办资本的抵制,这些法令并没有得到有效实施。

新中国成立后,我国实行的是计划经济体制和单位对职工生老病死全包的政策。直到20世纪80年代,民众的基本生活保障仍是由国家和集体组织承担。90年代起,随着向市场经济转型,一部分群体开始从单位人向“社会人”转变。为确保这部分民众的基本生活来源,我国开始建立社会保障制度,先后颁布《残疾人保障法》(1990)、《劳动法》(1994)、《城市居民最低生活保障条例》(1999)等社会法规。进入21世纪后,相继出台了《劳动合同法》(2007)、《社会保险法》(2010)等社会立法。新时代以来,又陆续推出《慈善法》(2016)、《法律援助法》(2021)等,加上之前的《红十字会法》(1993)、《就业促进法》(2007),社会促进逐渐成为立法的关键词。从总体上看,我国当代社会立法是制度变迁的产物,而非在市场发展中形成的,因此与西方国家有所不同。

(二)国外立法史视角

社会法是舶来品,深受欧美日等工业国家影响,因此探求社会法的概念、范畴与体系等,离不开对外国法制的比较观察。从总体上看,国外社会法范畴也经历了社会保护、社会保障和社会促进的演进。

英国是世界上最早实行社会立法的国家,其目的是为脆弱群体提供社会保护。1388 年,金雀花王朝制定了一部《济贫法案》。1531年,亨利八世又颁布了一部《名副其实救济法》,规定老人和缺乏能力者可以乞讨,地方当局将根据良心从事济贫活动。这两个法案与1601年伊丽莎白《济贫法》相比,影响较小。后者诞生于“羊吃人”的圈地运动时期,旨在“将不附任何歧视性的工作给有工作能力的人”,后为很多国家效仿。1563年,英国颁布了历史上第一部《劳工法》,1802—1833年又颁布5个劳动法案,覆盖了几乎所有工业部门,确立了现代劳动保护体系及基本原则。1834年,英国政府出台《济贫法修正案》,史称“新济贫法”。这些立法孕育着社会法的丰富遗产,具有鲜明的时代性、体系性和结构性特征。此后欧洲其他工业化国家纷纷仿效英国,建立起自己的社会保护制度。

世界上最早实行社会保险立法的是德国。19世纪中后期,俾斯麦政府采取“胡萝卜加大棒”政策,一面对工人阶级反抗实施残酷镇压,一面通过社会保险对其安抚,相继出台了《疾病保险法》(1883)、《工伤保险法》(1884)等法规。由于社会保险法适应了工业化对劳动力自由流动的需求,解决了劳动者生活的后顾之忧,在社会法体系中占有重要地位。但西方社会法真正完成的标志是1935年美国《社会保障法》施行,这是社会保障概念在世界上首次出现。之后,社会法的发展开始进入一个新的历史阶段——为社会成员提供普遍福利,其典型标志是英国“贝弗里奇计划”实施。由于该计划被逐步纳入立法,标志着英国社会法走向完备和成熟。第二次世界大战后西方各国在推行社会立法时,不同程度借鉴了《贝弗里奇报告》模式,使得西方社会法的福利化转型最终完成。

20世纪60年代,西方国家普遍解决了生存权问题,社会促进开始成为立法的重要权衡。除了传统的慈善法大量兴起外,扶贫法和反歧视法逐渐形成新的热潮。以美国为例,1964年约翰逊政府通过《经济机会法》,宣布“向贫困宣战”,此外还实施了社区行动计划、学前儿童启蒙教育计划等。其他国家如英国的《儿童扶贫法案》、法国的“扶贫计划”和德国的《联邦改善区域结构共同任务法》等在促进落后地区经济社会发展方面也起到了重要作用。在反歧视方面,美国、英国、欧盟和日本都有完备的立法。尤其是美国,仅反就业歧视法就多达十余部,且有大量判例具有重要立法价值。这一时期,日本的《反对性别歧视法》(1975)、瑞典的《男女机会均等法》(1980)等纷纷出台。根据反歧视法的差别待遇原则,都是为了促进国民获得实际平等地位,实现社会实质公平。

(三)学术研究史视角

我国社会法研究肇始于民国初期。1949年以后,又分为“大陆”和“台湾地区”两个支系,前者的探索早于后者,而且在一定程度上沿袭了民国的传统。从学术史上看,学术界在某些观点上取得了较大共识,但核心范畴略有差异。

民国的社会保护和社会幸福说。多数民国学者认为,社会法是救济和保护社会弱者之法。如李景禧提出,社会法是“为防止经济弱者地位的日下,调整了暂时的矛盾”。陆季藩指出,社会法是“以保护劳动阶级或社会弱者为目标”的法。林东海认为,凡是“解决社会上之经济的不平等问题”的立法,都是社会法。杨智提出,社会法是“以增进及保护社会弱者之利益为目的”的法。也有学者主张,社会法包含一般社会福利。如张蔚然提出,社会法是“关于国民经济生活之法”。卢峻认为,社会法的目标是“使社会互动关系或社会连立关系”达到最高目标。黄公觉则明确提出,广义社会法“指一切关于促进社会幸福的立法”,狭义社会法仅指“为促进社会里的弱者或比较不幸者的利益或幸福之立法”。

大陆的劳动保护与社会保障说。1993年,中国社会科学院法学研究所在一份报告中将社会法解释为“调整因维护劳动权利、救助待业者而产生的各种社会关系的法律规范的总称”。这是新中国学术界首次系统阐述这一概念。最高人民法院2002年编纂的《社会法卷》认为,“坚持社会公平、维护社会公共利益、保护弱势群体的合法权益”是“社会法的主要特点”。在学术界,多数学者将社会法定义为调整劳动与社会保障关系的法律。如张守文认为,社会法“具有突出的保障性”,主要是“防范和化解社会风险和社会危机,保障社会安全和社会秩序”;赵震江等认为,社会法是“从整个社会利益出发,保护劳动者,维护社会稳定”,包括“社会救济法、社会保障法和劳动法等”。从中国社会法学研究会历次年会讨论的情况来看,劳动法、社会保障法、慈善法属于社会法的观点已被普遍接受。

台湾地区的社会安全和生活安全说。很多台湾学者从社会保护出发,将社会法称为社会安全法。如王泽鉴认为,社会法“系以社会安全立法为主轴所展开的”。钟秉正认为,社会法是“以社会公平与社会安全为目的之法律”,“以消除现代工业社会所产生的各种不公平现象”。也有学者明确提出社会法是生活安全法。如郝凤鸣认为,社会法是“以解决与经济生活相关之社会问题为主要目的”,“藉以安定社会并修正经济发展所造成的负面影响”;陈国钧认为,社会法旨在保护某些特殊人群的“经济生活安全”,或用以促进“社会普遍福利”,这些法规的集合被称为社会法或社会立法。总之,在台湾学术界,社会法集中指向与社会保护、社会保障和社会福利等相关的社会安全或生活安全法。

二、决定社会法本质的要素分析

事物的本质和发展方向是由核心要素决定的,在讨论社会法的本质之前,我们先分析决定其本质的核心要素。如前所述,社会法产生的根源是社会的结构性矛盾,尤其是市场化带来诸多社会问题,使得国家不得不运用公权力干预私人经济,达到保障民众生存权、化解社会矛盾的目的。在一定意义上,政治国家、经济社会和历史文化等要素在社会法本质形成过程中起到了决定性作用。

(一)政治国家要素

作为国家在干预私人领域过程中形成的全新法律门类,社会法与传统的自由权、自由市场经济体制以及民主法治国家理念存在一定冲突。正是国家职能的转变决定了社会法的内在精神和本质,使人民受益于国家的关照。

1.从消极国家到积极国家

在古典自由主义时期,政府主要承担“守夜人”角色。资本主义发展到垄断阶段以后,不但造成市场机制失灵,而且难以维持社会稳定。于是,社会上层开始形成一种共识,即通过国家干预,改良资本主义制度,以消除暴力革命的隐患。正如马克思和恩格斯指出,“资产阶级中的一部分人想要消除社会弊病”,“但是不要由这些条件必然产生的斗争和危险”。按照黑格尔的阐述,国家的目的在于“谋公民的幸福”,否则它“就会站不住脚的”。在这种情形下,国家这只“看得见的手”开始不断发挥作用,以平衡不同社会群体的需求,积极国家随之诞生。因此,国家干预并非理论家的发明,而是在历史进程中实际发生的,即对抗已重新采取直接的国家干涉主义形式,国家进一步成为社会秩序的干预者。

国家干预社会生活是通过社会立法实现的,直接决定了社会法的性质和宗旨。由于国家不得不采取干涉主义的社会立法来做社会救济的工具,于是在法律上体现为,国家对于任何人都有保障其基本生活的义务。从立法宗旨来看,旨在打破弱肉强食的丛林法则,将社会贫富分化控制在一个可以承受的动态合理范围之内。比如,通过劳资立法,克服自由资本主义无节制地追求高额利润造成的社会分裂等严重后果。事实上,国家实行经济社会干预,不是否认私人利益和个人需求,而是将其重整到更高的全社会层面,即运用国家的力量实现个人的特殊利益与社会整体利益的统一。因此,社会法表面上是社会性的,实质上是政治性的,是一种典型的政治法学,它发轫于人对国家的依附性,发生于国家对共同体内每个人的幸福所负有的法律责任,使国民的生活安全得到有效保障。

2.从社会国到福利国家

积极国家进一步引发从消极自由到积极自由的发展。也就是说,国家不仅有保障公民基本自由不受侵犯的消极义务,更有保障公民基本生存与安全的积极义务,这也是社会发展进步的重要标志。在这一背景下,政府不再像以前一样仅仅囿于维护社会秩序,或对出现的问题进行决策干预,而是更进一步转换为保障人民具有人格尊严和最低生存条件的给付行政。通过给付行政,政府承担了涵盖广泛的计划性的行为、社会救济与社会保障等任务。尤其是在工业社会条件下,国民享有基本权利和事实自由的物质基础并不在于他们为社会作过什么贡献,而根本上依赖于政府的社会给付。正是给付行政成就了今天的社会国,即一个关照社会安全与民生福祉的国家。社会法便是为实现社会国的目标任务形成的法律体系,而社会国原则又为立法者干预私人领域提供了合法性依据。

19世纪末20世纪初,随着垄断资本主义发展,社会本位的法理念开始取代个人本位的法思想并居于支配地位。这一时期,政治国家与市民社会的矛盾在法律上体现的结构也发生了新变化,使得国家在向国民承诺下不断增加福利范围。1942年,英国“贝弗里奇计划”首次采用福利国家称谓,通过财产重新配置,为公民提供基本生活保障。二战之后,这一思想主宰了西方的正统观念,很多国家确认促进民生幸福是公民的重要社会权利,对广泛和普遍的社会福利而言同样如此,国家承担了民众直接或间接的生活责任。可见,政治国家不但有力地推动了社会法的发展,而且决定了其福利化方向,最大限度地消除了各阶级之间的对抗冲突以及社会革命的危险,促进了社会公正公平,有效维护了社会稳定。

(二)经济社会要素

工业革命以后,资本主义的新信念是唯物质主义的,即只要物质财富足够多,一切社会问题都会自动消失。事实上,纯粹的市场机制无法解决社会公平、效率以及经济长期稳定等重要问题。由于市场体系造成了巨大的社会混乱,如果不深刻调整,市场机制也将被摧毁。因此,资产阶级国家被迫用法律来防止资本主义剥削过度的现象,通过社会立法去收拾资本和市场留下的烂摊子,出现了以社会法为核心、旨在对冲和矫治市场化不利后果的社会保护运动,结果连最纯正的自由主义者也承认,自由市场的存在并不排斥对政府干预的需要。正如罗斯福在1938年向国会提交的一份“建议”中指出:“我们奉行的生活方式要求政治民主和以营利为目的的私人自由经营应该互相服务、互相保护——以保证全体而不是少数人最大程度的自由。”

经济民主理论认为,经济问题与伦理问题密切相关,人类经济生活应满足高尚、完善的伦理道德方面的欲望。社会法倡导社会保险、社会救济、劳工保护等社会权利,以解决资本主义发展中日益严峻的社会问题。一方面,要保障每个人拥有获取扩展其能力的物质条件和自我实现的机会;另一方面,要在支持扩大国家给付的理由与加重政府财政负担的结果之间进行权衡。可见,社会法的产生不单纯是对民众生活的保护,也是产业制度有效运行和社会存续的必需。因此,社会法在本质上是由资本主义的结构性矛盾决定的,是这一矛盾在法学层面的反映。因此,社会法与市民法同属资本主义的法,它不否认市场经济。

与此同时,社会要素也深刻地影响着社会法的本质。随着工业革命深入发展,市场为社会创造了巨额财富,也制造了大量贫困。正如马克思恩格斯所说,“劳动生产了宫殿,但是给工人生产了棚舍”。1848年,《共产党宣言》发表,整个欧洲为之震动。恩格斯明确指出:平等不仅应“在国家的领域中实行”,还应当“在社会的、经济的领域中实行”。这一时期,各种社会主义思潮如德国的社会民主党运动、法国的工团社会主义、巴枯宁与蒲鲁东的无政府主义等纷纷发出社会改革的呼吁。由此看来,近现代社会实际上受到了一种双向运动支配,其一是经济自由主义原则,其二是社会保护原则,二者交互作用。应该说,社会法的产生正是对社会无序发展及其大量不良后果进行矫正的反向运动。

从本质上看,社会保险、社会救助等均是由社会再分配决定的,其目的是使社会上的富人与穷人达成一种建立稳定秩序的合作。如德国当时的社会保险立法受到普遍赞成,资方认为可以抵消暴力革命,劳方则视其为实现社会主义的第一阶段。这一共识不断巩固和积累,成为重要的社会支持手段。美国学者卡尔多等在社会福利的基础上,还提出一种社会补偿理论,认为从受益者新增收益中拿出一部分补偿受损者,就实现了帕累托改进。总之,社会再分配是以生存权和社会公平为法理基础,这是社会法最重要的价值理念,体现了生产关系变革和社会法的发展进步。而且,社会法的发达程度是由经济社会发展水平决定的。一方面,所有的社会权利实现都依赖于经济发展指数和财政状况;另一方面,它限制资本主义的非人道压榨和剥削,却使资本家在所谓合法范围内得以充分发展。

(三)历史文化要素

社会是由历史事实的总和所规定的、经验地形成的人类质料,作为最具解释力的最新法理范式,社会法标志着人类政治文明、法治文明和社会现代化达到了空前高度,历史意义深远。历史法学派明确指出,法是以民族的历史传统为基础生成的事物,是从特殊角度观察的人类生活。萨维尼详细考察了德国法,认为法的素材“发源于国民自身及其历史的最内在本质”,因而受历史决定。马克思认为,历史意味着现实的个人通过生产实践活动进行物质创造,并逐渐认识世界、改造世界;而“表现在某一民族的政治、法律、道德、宗教”等“语言中的精神生产”也是“人们物质行动的直接产物”。因此,法律是历史的产物,是世世代代的人活动的结果。可见,马克思历史观的内核在于,从历史和现实出发考察法律的形成和本质,并将市民社会理解为整个历史和社会立法的基础。

德国是现代社会法的发源地,其社会立法极大地丰富、发展和完善了现代法律体系。从实践中看,德国社会法受历史因素的影响是广泛而深远的。如1794年《普鲁士普通邦法》规定,国家有义务对那些为了共同利益而被迫牺牲其特殊权利和利益的人进行补偿。以此为源头,德国逐渐孕育出公益牺牲原则,成为社会补偿法的理论渊源。为了应对二战受害人及其遗属的供养问题,德国出台了《联邦供养法》,并逐步演变为对各类暴力行为受害人的补偿。再如,德国法律有一个苛情救济制度,主要是为恐怖和极端犯罪受害人提供人道主义款项,但受害人无法主动主张这一权利。2013年,第十八届议会提出,要制订新的受害人补偿和社会补偿法。不久,柏林恐怖袭击案发生,使得改革进程急剧加速。如今,服民役者、因接种疫苗身体受损者均被纳入社会补偿范围,使其社会法体系日臻完备。

文化也是社会法本质形成的重要决定因素。马克思指出,“权利决不能超出社会的经济结构以及由经济结构制约的社会的文化发展”,因为文化是现代社会思想的特殊元素,奠定了一整套理解和解释人类行为的规则。社会文化决定论甚至认为,人类及社会制度的形成,由各种文化价值和社会机构决定。尤其是法律文化,决定了一国法律的内在逻辑,以及历史进程中积累下来并不断创新的群体性法律认知、价值体系、心理态势和行为模式。客观地说,很多法律特性只有通过法律文化才能得到解释,如德国、英国、美国和法国法的不同。因此,法律既存在于一个与传统相通的整体之中,又存在于一个与他物相关联而形成的民族精神的整体之中,他们共同构成了法律的文化意义的经纬。

决定社会法本质的文化要素有法律观念、传统和制度等,如俾斯麦立法是德国留给世界最宝贵的政治遗产,是法律文化的最高层次。此外,法律理论的影响也是不言而喻的。一是社会连带理论。如社会连带主义法学提出,连带关系要求个人对其他人负有义务,每个人都依靠与他人合作才可能过上满意的生活成为社会保险法的理论基础。二是公民权利理论。如马歇尔提出,公民权利“是福利国家核心概念”,成为福利立法的理论基石。三是差别平等理论。这一理论认为,财富和权力的不平等,只有最终能对每个人的利益,尤其是在对地位最不利的社会成员的利益进行补偿的情况下才是正义的。这些文化元素对社会法本质形成起到了重要的决定作用。因此,如果剥夺了文化要素,社会法就不是今天的样子,也不可能实现生活安全的社会化和国家化。

三、社会法本质的理论证成

作为独立的学科名称和专门法学术语,社会法有特定的语意内涵、独立的研究对象和独特的法律本质,应立足于中国的历史和现实文化,借鉴国外经验,构建具有中国特色的社会法理论。并非所有与社会或社会问题相关的法律都是社会法,它以为每一个社会成员提供适当的基本生活条件为使命,因此不仅仅是现代社会场域的法,也是应对现代社会的法。

(一)社会法是弥补私法不足的法律体系

私法和市场竞争必然孕育着贫富分化与社会危机。为了挽救资产阶级统治秩序,资本主义国家遂通过社会立法来修正某些私法原则,限制完全的自由竞争,矫正私法和自由放任的市场经济带来的负面后果。

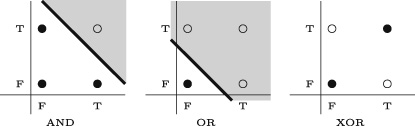

1.私法公法化与公法私法化

近代私法推定法律关系发生在身份平等且充分自由的人们之间,对市场经济的保障是十分必要的,至少对于市场主体来说形成了私人平等。所谓私人平等,就是人格与资格平等、机会均等。因此,在经济交往中,只要不采取欺诈、强迫等手段,各方都可以自由地追求利益最大化,国家作为中介人和社会契约的执行者只有保护个体权利不受侵害的消极义务,没有促进个体利益的积极义务。但是,这种抽象平等忽略了人们在天赋能力、资源占有、社会地位等方面的实际差异,结果产生了事实上的不自由、不平等,不可避免地出现“贫者愈贫,富者愈富”的马太效应。正是私法调整机制的不足以及所有权绝对和个人本位法思想泛滥,导致社会弱者生存困难、劳动者生存状况不断恶化和劳资对立等严重社会后果,迫切需要对私法意思自治、形式平等、契约自由等原则进行修正。

由于私法和市场机制不能自动解决社会贫困、失业等问题,在法律发展中出现了私法公法化和公法私法化现象,逐渐形成社会法这一以实现社会实质公平为目的、以公私法融合为特征的新型法律部门。这是因为,单纯的公法容易导致过多限制经济自由的危险,单纯的私法又无法影响经济活动的全部结构。所谓私法公法化,是国家运用公共权力调整一些原本属于私法的社会关系,使私法带有公法的色彩和性质;所谓公法私法化,是国家以私人身份出现在法律关系中,将私法手段引入公法关系,使国家成为私法的主体和当事人。这种公共权力介入私人领域的做法就是公私法融合,并随之产生与公私法并列的第三法域。按照共和主义的观点,在私人对个人基本权利产生实质性支配关系时,国家有义务帮助个人对抗这种支配,此时基本权利经由国家介入得以保全。

2.社会法对市民法的修正

如前所述,市民法(即民法)有益于资源有效配置与财富公正分配,但由于各主体掌握的信息、谈判能力和经济力量等不同,交易结果不一定公平。在现实中,很多人认识到法律的基本精神是有利于强者而非弱者,市民法确立的平等协商、契约自由等原则在实践中形同虚设。一方面,它忽视了个体的现实差异;另一方面,市民法上的“人”是一种超越实际存在、拟制化的抽象人,已逐渐丧失伦理性与社会正当性基础。从法史可知,对人的看法在很大程度上决定着法律的发展趋势和方向。20世纪下半叶起,新的利益前所未有地逼迫着法律,要求以社会立法的形式得到承认,法律也越来越多地确认其存在,将空前大量的权利提高到受法律保护的地位。正是源于此种法理论的立法被称为社会法,这一变化也体现了从市民法到社会法、从近代法到现代法原理的重大转换。

与市民法不同,社会法更关注人的具象性与实力差异,由此很多学者从市民法修正角度来阐释社会法,将社会矫正思想置于自由主义的平等思想之上。如沼田稻次郎提出,社会法是以“对建立在个人法基础上的个人主义法秩序所存在弊端的反省”为特征的法。事实上,社会法对市民法的修订主要体现为生存权保障,具体而言就是对财产权绝对、契约自由、平等协商等原则的限制,一些学者称之为民法社会化或现代化,是不准确的。社会法对民法的修正是系统化的,在法律理念、原则、方法和调整的法律关系上有显著不同。总之,社会法是传统市民法不足的产物,正如马克思所说,立法者“不是在创造法律,不是在发明法律,而仅仅是在表述法律,他用有意识的实在法把精神关系的内在规律表现出来”。

(二)社会法调整的是实质不平等的社会关系

由于私法本身无法推动不平等的社会关系向实质平等转变,以公权力矫正不平等就成为必然选择。社会法正是通过对不平等的社会关系实行区别对待和差异化调整,增强弱者与强者抗衡的力量,实现实质意义的平等和公平。

1.从形式平等到实质不平等

私法的形式平等旨在确立绝对财产权和缔约自由权,使个人通过市场机制选择追逐利益最大化,并承担由此带来的后果。但是,这种平等作为近代民主政治的理念不是实质性的,而是舍弃了当事人不同经济社会地位的人格平等和机会均等,并非事实上的平等。恩格斯说:“劳动契约仿佛是由双方自愿缔结的”,这种“只是因为法律在纸面上规定双方处于平等地位而已”,“这不是一个普通的个人在对待另一个人的关系上的自由,这是资本压榨劳动者的自由”。拉德布鲁赫在《法学导论》中写道: “这种法律形式上的契约自由,不过是劳动契约中经济较强的一方——雇主的自由”,“对于经济弱者……则毫无自由可言”。因此,所谓契约自由和所有权绝对,事实上已成为压迫和榨取的工具。

尽管私法形式正义要求按照法律规定分门别类以后的平等对待,但它并未告诉人们,应该怎样或不该怎样分类及对待,如果机械地贯彻形式平等原则,就容易产生许多弊病。一方面,总会有一些人处于强势地位,一些人居于劣势地位;另一方面,强者常常利用优势地位欺压弱者,形成实际上的不平等关系。以劳动关系为例,如果不对契约双方进行一定干预,劳动者通常被迫同意雇主的苛刻条件而建立不平等劳动关系。由于市场本身无法克服这一现象,必然带来一系列社会利益冲突,甚至导致严重的社会危机。正是自由主义无序发展导致19世纪出现垄断与无产、奢侈与赤贫、餍饫与饥馑的严重对立现象,因此必须对形式平等导致的实质不平等进行矫正,通过社会法规制,平衡各种社会矛盾和利益冲突。

2.从实质不平等到实质平等

为了达到实质平等,资产阶级国家开始通过社会立法适当保护社会弱者,抑制社会强者。与民法不同,社会法既有私法调整方法,也有公法调整方法,因为单靠私法规范不能达到目的,必须运用公法的强制性规范予以支持才能实现权利的真正保障。作为反思法律形式平等的必然结果,社会法主要是以社会基准法和倾斜保护的方式对平等主体间不平衡的利益关系予以适度调节,设定一些法律禁止或倡导的方面,体现了马克斯·韦伯所称“现代法的反形式主义”趋势,是一种“回应型法”或称“实质理性法”。其法理基础是,为了校正形式平等所造成的实质不平等,对个人生存和生活条件进行实际保障。当然,这种积极义务是辅助性的,只是对形式平等的缺陷和不足进行必要修正和补充,并没有取代和全面否定形式平等,正如社会法没有取代和完全否定民法一样。

由此可见,社会法调整的乃是实质不平等的社会关系,旨在纠正市场经济所导致的必然倾斜。所谓实质平等,是国家针对不同人群的事实差异,采取适当区别的对待方式,以缩小由于形式平等造成的社会差距。为了实现这一目标,立法者一方面关注平等人格背后人们在能力、条件、资源占有等方面的不平等,并以倾斜保护方式实现人与人之间的和谐;另一方面重视为人们提供必需的基本生活保障,使得立法的目标变成了结果的平等。有鉴于此,社会法上的社会保障并非临时性救济,也不是政府“信意”为之,而是法律赋予的强制性义务。总之,社会法是近现代社会实质不平等的产物和反映,以应对私法产生的“市场失灵”和过度社会分化等问题。马克思说:“人们按照自己的物质生产率建立相应的社会关系,正是这些人又按照自己的社会关系创造了相应的原理、观念和范畴。”

(三)社会法通过基准法机制发挥作用

与民法不同,社会法有一个基准法机制即最低权利保障,它提供了一种在社会的基本制度中分配权利和义务的办法,即将弱者的部分权利规定为强者或国家和社会的义务,以矫正实质意义的不平等,缩小社会差距。

1.以基准法保障底线

所谓社会基准法,是将弱者的部分利益,抽象提升到社会层面,以法律的普遍意志代替弱者的个别意志,实现对其利益的特殊保护。具体就是,以立法形式规定过去由各方约定的某些内容,使弱者的权利从私有部门转移到公共部门,实现这部分权利法定化和基准化。比如,国家规定最低工资、最低劳动条件、最低生活保障标准等都是基准法,因其具有公法的法定性和强制性,任何团体和个人契约都不能与之相违背或通过协议改变。社会基准法在初次和再次分配中都有体现,如最低工资法属于初次分配,最低生活保障法属于再次分配。在一定程度上,社会基准法是对私法所有权绝对、等价有偿、契约自由等原则的限制和修正,通常被认为是推行某种“家长制”统治的结果,因为要实现从社会的富有阶层向贫困阶层进行资源再分配,将不可避免地侵犯到财产权的绝对性。

社会基准法克服了弱者交易能力差、其利益常被民法意思自治方式剥夺的局限,在一定程度上改变了强弱主体力量不均衡状态。但是,它没有完全排除私法合意,即在基准法之上仍按契约自由原则,由市场和社会调节,这是社会法与其他部门法的显著不同。也就是说,当事人的约定只要不违反基准法,国家并不干预,个人和团体契约可以继续发挥作用。因此,社会法规范既有公法的强制性,也有私法的任意性,通过基准法限制某种利己主义的表达,通常被视为一种由统治权力强加于个人的必要。社会法与行政法的共同点在于,都实行强制性规范,但社会法是一种底线控制,没有完全排除契约自由。社会法与民法的共同点在于都尊重契约自由,但前者对契约自由作用有所限制,后者是当事人完全意思自治,任何外力干预都被视为违法或侵权。

2.以义务规范体现权利

社会基准法的另一种表现形式是,以义务规范体现权利。这也是社会法的显著特征之一,即立足于强弱分化人的真实状况,用具体的不平等的人和团体化的人重塑现代社会的法律人格,用倾斜保护方式明确相对弱势一方主体的权利,严格规定强势一方主体的义务,实现对社会弱者和民生的关怀。因此,社会法重在对私权附以社会义务,授予权利也是使相对人承担义务的手段。以社会保障法为例,社会救助、社会优抚、社会福利等主要由国家提供,社会保险则由雇主、雇员和国家共同负担,并规定为国家和社会义务,以保障民众的基本生活权利。由此,现代国家已成为新的财产来源之一,民众的生存权不再建立在民法传统意义上的私人财产所有权之上,而是立足于国家提供的生存保障与社会救济的基础之上。

社会法上的权利义务之所以不一致,是因为社会生活中客观存在一种不对等性,法律对当事人的权利义务设定就有所不同。具体就是,通过后天弥补,以法律形式向弱者适当倾斜。因此,社会法不关心穷人对自己的困境负多大责任,赋予其社会保障权也不以承担义务为前提条件。其实质是,将民众和社会弱者的基准权利规定为国家和社会的义务,因此与一些学者所谓义务本位不同。如欧阳谿认为,社会法“在于促进社会生活之共同利益”,“必以社会为本位”。事实上,封建主义和资本主义以义务为本位的法律,只不过是多数人尽忠于少数人的义务而已。不仅如此,社会法对所有权设定义务并不以权利滥用或过错为条件,限制的也不是个体而是类权利,限制方式包括使所有权负有更多义务,向弱者适当倾斜等,与民法的禁止权利滥用原则并不相同。

(四)社会法的根本目标是生活安全

不同于民法维护交易安全、刑法维护人身和财产安全、行政法维护国家安全,社会法旨在维护民众的生活安全,保障其社会性生存。它基于保护社会脆弱群体而产生,形成了不同类型、内容丰富、功能互补的制度体系。

1.社会法:维系民生之法

社会法的内在精神是保护民生福祉,也就是保障人民的生活、群众的生计和社会安全。马克思指出:“人们为了能够‘创造历史’,必须能够生活,但是为了生活,首先就需要吃喝住穿以及其他一些东西。”从本质来看,社会法的终极目标是,确保每个公民都能过上合乎人的尊严的生活,保障民众免于匮乏的自由。其核心在于,保护某些特别需要扶助人群的经济生活安全,促进社会大众的普遍福利;其实质是,对市场经济中的失败者以及全体国民予以基本的生存权保障,以此促进整个社会的和谐稳定。笔者曾将理解社会法的关键词概括为“弱者的生活安全”“提供社会福利”“国家和社会帮助”,极言之即“生活安全”。由于社会法建立了一种弱者保护机制和利益分配的普遍正义立场,通常称为民生之法。

社会法保障民众的生活安全有一个从部分社会到全体社会的发展过程。早期社会法仅仅是维护特殊群体的生活安全,认为社会法保护的是经济上处于从属地位的劳动者阶级这一特殊具体的主体。随着社会的发展,社会法的调整范围从弱者的生存救济拓展到普遍社会福利,实现了从部分社会到全体社会的转换。汉斯·F.察哈尔对此有过精辟总结,认为狭义社会法是“以保护处于经济劣势状况下的一群人的生活安全所”;广义社会法是“以改善大众生活状况促进社会一般福利”。从功能学上看,社会法有利于消融社会对抗、冲突,实现国家和社会安全,即通过保障民众的基本生存权利,扩大社会福利范围,增加公共服务数量,使每一个人都能获得某种程度的生活幸福感。

2.社会法的最高本体和逻辑结构

社会法主要通过行政给付保障民众的生活安全,这就要求国家直接提供诸如食品、救济金、补贴等基本条件,使人们在任何情况下都能维持起码的生活水准,这是社会法的最高本体。社会法上的给付分为间接给付和直接给付,如政府在工资、工时、工作条件等方面对企业进行规制,是一种间接给付;国家为保障民众生存而进行社会救助、社会保险、社会优抚补偿等,是直接给付。二者均指向国家积极义务所蕴含的实质平等。一方面,社会法上的给付是法定的,其依据必须是国家所颁布的实在法,而不能单纯地依靠宪法,因此无法律则无社会给付;另一方面,在社会给付法律关系中,国家事实上是给付主体和“财产的公众代理人”,这既是一种公共职能,也是一种国家义务。

通过行政给付,社会法确认和保护民众的生存权、社会保险权与福利权等,最终形成系统化、不同类型的结构体系。一是社会保护法,即保护妇女、未成年人、残疾人、老年人、劳工等脆弱群体的法规概称。目前,国际社会普遍将社会保护的重点确定为在社会保障体系中得不到充分保护的人。二是社会保障法,即国家用来应对全体社会成员因疾病、生育、工伤、失业和年老等引起收入减少或中断后造成经济和社会困境的法规总称,包括社会保险、社会救助、社会优抚与补偿法等。三是社会促进法,即某一类社会立法,能够促进社会实质正义、社会效用和福利等普遍提升,使公民的生活更加富足、便捷、安定,如慈善法、反歧视法、扶贫法等。这是社会法的三个基本类型,都蕴含行政给付,也都以保障民众的生活安全为目标,在本质上是一致的。

四、围绕社会法本质的体系建构

自新中国成立尤其是改革开放后,我国社会法建设取得了很大成就,但相比之下仍然是最为落后的法律部门。由于起步较晚,研究还不充分,至今没有形成相对系统的社会法体系。如何从本质上对社会法以概念清晰、理论坚实、结构严整、逻辑缜密的方式进行体系化建构,并外化为全面有序的法规系列,是推动我国社会法实践和经济社会稳定发展必须解决的重要问题。

(一)加强社会法科学民主立法

参照发达国家经验,一方面,我国社会法最大的问题是基本法律缺失,本应是“四梁八柱”的社会救助法、医疗保障法、社会福利法、社会补偿法等仍不见踪影。在社会法分支领域,亦存在诸多盲点,如集体协商与集体合同法、反就业歧视法等尚未出台,涉及平台劳动者保护的法规亦鲜有问世。另一方面,一些法规存在矛盾和冲突。

针对上述问题,宜在现有法规基础上,以保障民生和共同富裕为导向,进一步完善社会法体系。当前,我国民众在就业、养老、医疗、居住等方面仍存在很多困难,亟待通过立法解决。而且,要促进社会法规范和制度衔接。以社会救助和社会保险为例,我国和美国都实行分立模式,但美国没有社会保险的居民可以得到相应社会救助保障。在英国,1909 年的《扶贫法》要求政府在实行社会救助的同时,通过强制性社会保险使失业人员得到生活救济。在解决法规冲突方面,我国《立法法》确立了两项制度:一是直接解决机制,即“新法优于旧法”“上位法优于下位法”“特别法优于一般法”;二是间接解决机制,即将无法适用处理规则的冲突纳入送请裁决范围,区分法定和酌定情形,由有权机关裁决。此外,也可以运用利益衡量方法化解法律规范冲突,填补法律漏洞。

同时,提高立法质量。由于种种原因,我国社会法普遍存在立法质量不高问题,主要表现为立法层级低、碎片化严重、落后于实践发展等。以社会保障法为例,除了《社会保险法》,其他都是行政法规和部门规章。由于法规权威性不足,我国社会保障发展明显受限。因此,提高立法层级,建立覆盖面广的法规体系非常重要。从《社会保险法》来看,也存在很多问题。一是占全国人口一半的农民、没有就业的城镇居民、公务员和军人等保险都是“由国务院另行规定”,没有体现全民性;二是其内容远远落后于实践,如城居保与新农合、生育保险与医疗保险已合并,机关事业单位已纳入社会保险,社会保险费明确由税务部门征收,但《社会保险法》均没有体现。由于社会法立法质量不高,不仅没有解决好贫富差距问题,而且在某种意义上使贫富差距逐渐扩大。

要改变这种状况,必须深入推进社会法科学立法、民主立法。科学立法的核心在于根据社会发展需要,制定符合实际情况的社会法制度。事实上,一项法律只有切实可行,才会产生效力。以最低生活保障法为例,对救济款实行“一刀切”是不科学的,一些发达国家通常采用一种负所得税法,即按照被保障人收入实行差额补助,可以借鉴。所谓民主立法,就是在立法决策、活动中,坚持人民主体性地位,“要把体现人民利益、反映人民愿望、维护人民权益、增进人民福祉落实到依法治国全过程”。需要说明的是,我国社会法意在保障民众的基本生存权,将贫富分化控制在一定范围内,并非“福利超赶”或“泛福利化”,否则会“导致社会活力不足”,阻碍人们的积极性和创造性。

(二)提升社会法行政执法效能

社会法行政执法分为两项:一是行政给付,二是行政监察。前者为积极执法,由政府主动履行法定义务;后者为消极执法,实行不告不理原则。在行政执法中,如果当事人违法,还会产生相应的行政、民事和刑事责任。

1.充分发挥行政给付功能

社会法行政执法的主要内容是行政给付,这是社会法与传统部门法最显著的区别,体现了法律思想从形式正义到实质正义的追求。但从我国行政给付情况看,重视和保障弱势群体利益的特征并不明显。党的二十届三中全会明确提出,要加强普惠性、基础性、兜底性民生建设。近年来,尽管国家采取了大量措施解决民生问题,但相对贫穷问题依然存在,民生保障还存在薄弱环节。一方面,行政给付中社会保护和社会促进支出很少;另一方面,城乡和地区之间差异较大。在经济发达地区和效益好的单位,给付标准高,在落后地区和效益不好的单位,给付标准低,形成一种反向歧视。不仅如此,有的地方仍存在“人情保”“关系保”等现象,使得法定的行政给付和社会保障功能大打折扣。

社会法上的行政给付有一个重要特点是,社会化程度越高,保障功效越好,体现的管理制度越公平。我国正处于社会转型期,为更好防范和化解新的社会矛盾,亟待建立公平的行政给付制度体系。一是政府积极主动执法。社会法所保障的社会权利与政治权利不同,政府不积极作为就很难实现。以残疾人保障为例,他们有着特殊的生理和社会需求,需要额外帮助和政府主动作为。当然,社会保护给付并不否定NGO和私人机构的作用,因为政府也会失灵。二是建立行政给付统筹与协调制度。以社会救助为例,目前最低生活保障和临时救助由民政部门负责,特定失业群体救助由人社部门负责,教育类救助由教育部门负责,且救助给付审批程序烦琐,耗时过长,有待改进。三是坚决惩治行政给付中的腐败行为,真正建立群众满意的阳光下的给付制度。

2.减少行政立法,加强监察职能

我国社会法有一个重要特点是,法律条文多是原则性、指导性规定,软法性质明显,在立法中授权政府部门另行制定法规或规章的情况很常见。由此,行政部门实际上扮演了执法和立法主体的双重角色。以劳动法为例,由于没有处理好原则与规则的关系,很多规范仍以行政法规和部门规章的形式出台。以社会保险法为例,很多现行制度没有在法律中体现,而是由国务院及其部委的“决定”“通知”等规定。例如,有关养老保险费缓缴、基本养老保险待遇、工伤和医疗保险先行支付与追偿等,都是由国务院文件规定,没有法定标准。甚至一些体制性问题如社保转移接续、社保费征缴主体等都是由行政机关协调解决。

在我国社会法执法中,应“去行政化”,使其回归监察定位。一是建立健全的监察体制。目前,劳动和社会保障监察已进入实操,但仍存在机构名称设置不规范不统一、规格不一致等问题。二是执法必严。社会法执法不严现象也应纠正,如基本养老保险全国统筹是《社会保险法》明文规定的,但至今省级统筹的目标仍未实现。为此,要大力推动执法权限和力量下沉,以适应社会法执法的实际需要。三是改进执法方式,逐步解决执法中的不作为、乱作为问题,将权力关进制度的笼子。

(三)推进社会法司法化

我国社会法在司法机制上仍存在很多空白,例如,社会保护和社会促进法体现的主要是宣示性权利,很少在法院适用。事实上,只有在社会权利受到法院或准司法机构保护的时候,社会法才能真正发挥稳定器的作用。

1.社会法司法化的限度

社会法上的诉权并非完全的权利,而是受到了一定限制。一方面,有关社会权的诉讼不可能扩展到尚未纳入法律保护的领域;另一方面,即便有些权利已经纳入法律保护,也不是完全可诉的。这也是社会法区别于其他部门法的显著特征。首先,社会权与自由权有很大区别。社会权需要国家采取积极措施才能实现,自由权只要国家不干预即能实现。其次,国家对国民的责任有一定限度。社会法上的国家责任是由法律明确规定的,是一种有限责任。再次,由司法决定行政给付有违权力分立理念。社会法的行政给付传统上都是由立法和行政机关作出裁量,如果司法过度侵入,会被认为危及民主制度和权力分工体系。最后,由立法和行政机关决定公共资源分配有现实合理性。由于社会法上的权利保护与大量资金投入有关,请求权客体(财政资源)的有限性直接决定了其诉讼的限制性。

但是,这并不意味着社会法上的权利是不可诉的,承认一部分权利的可诉性,可以促进国家履行其承诺的积极义务。以社会保障权为例,对于公民依法享有的社会保险、社会福利等待遇,当事人可以起诉;对于基准法和约定权益受到侵犯,也可以起诉。如1970年的戈德伯格诉凯利案中,美国联邦最高法院明确指出,社会福利可以请求法院救济。在英国和法国,社会法诉讼由社会保障法庭解决,德国则设立了专门的社会法院。但是,对政府确立的给付标准、最低工资标准等不满意,则不能起诉,因其在很大程度上是由政治而非司法决定。这也是社会法与其他部门法最重要的区别之一。如在1956年日本朝日诉讼案中,原告认为每月600日元不符合宪法规定的最低生活条件,但由于被告日本政府的解释理由更充分,导致“原告的诉讼请求无疾而终”。

2.社会法司法化的实践进路

确立公益诉讼和诉讼担当人制度。由于社会权益被侵害的后果不限于某个当事人,而是包含不特定多数人甚至公共社会,非利害关系人亦可起诉。比如,印度建立了一种公益诉讼模式,即只要是善意的,任何人都可以为受害人起诉。在社会法诉讼中,还有诉讼担当人和集团诉讼概念,也是对民事诉讼主体资格的突破和超越。如在集体合同争议中,工会是诉讼担当人和唯一主体,其他任何组织和个人都无权起诉。诉讼担当人与民法上的委托代理人不同,当事人不能解除其担当关系。此外,集团诉讼也是社会法的另一种诉讼机制。20世纪90年代,利用集团诉讼处理劳动保护、社会保险等纠纷成为潮流。对于诉讼请求较小的当事人来说,如果起诉标的比诉讼费用少,当事人就倾向于集团诉讼。

实行举证责任倒置制度。社会法司法机制同样体现了向弱者倾斜的理念。20世纪以来,在大量司法实践中,诞生了社会法另一个独特的司法机制——举证责任倒置。以工伤事故为例,法律明确规定由雇主承担举证责任;在欠薪案中,劳动者对未付工资的事实不负举证责任,都体现了对劳动者的特殊保护。这一点从工作场所中雇员给雇主造成损失和雇主给雇员造成损失承担责任以及举证责任的“非对等性”也可以看出。再如,就业歧视在美国等国家是违法的,当事人只要表明歧视发生时的情况即可,此后举证责任就转移到雇主那里,否则就构成歧视,在行政给付、社会保护等案例中也是如此。举证责任倒置主要是对弱者实行最大限度的司法保护,应确立为我国社会法基本的司法制度。

设置专门法庭或适用简易程序。在司法程序上,社会法争议亦有别于一般民事诉讼。以劳动司法为例,很多国家设置了行政裁判前置程序,以及两项重要原则:一是缩短劳动争议审限,二是劳资同盟介入。因此,社会法司法一般审限较短,程序也简单。由于当事人的诉讼请求与生存权和健康权等息息相关,如果像债权、物权一样按照民事案件审理,期限都在半年或一年以上,这种马拉松式的诉讼显然与权利人生存的现实需要是不相容的,很可能危及其生存。因此,对于社会法诉讼中一些耗时长、成本高的案件,为了节省社会成本和当事人的开支,应当使争议得到迅速和经济的处理,因此,可以借鉴一些国家的成功经验,设置专业裁判所或专门法庭,适用简易程序审理。

本文转自《中国社会科学》2024年第11期

-

John D. Kelleher 《Deep Learning》

1 Introduction to Deep Learning

2 Conceptual Foundations

3 Neural Networks: The Building Blocks of Deep Learning

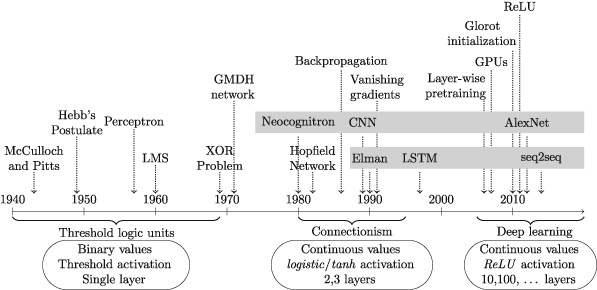

4 A Brief History of Deep Learning

5 Convolutional and Recurrent Neural Networks

6 Learning Functions

7 The Future of Deep Learning1 Introduction to Deep Learning

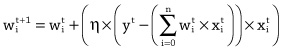

Deep learning is the subfield of artificial intelligence that focuses on creating large neural network models that are capable of making accurate data-driven decisions. Deep learning is particularly suited to contexts where the data is complex and where there are large datasets available. Today most online companies and high-end consumer technologies use deep learning. Among other things, Facebook uses deep learning to analyze text in online conversations. Google, Baidu, and Microsoft all use deep learning for image search, and also for machine translation. All modern smart phones have deep learning systems running on them; for example, deep learning is now the standard technology for speech recognition, and also for face detection on digital cameras. In the healthcare sector, deep learning is used to process medical images (X-rays, CT, and MRI scans) and diagnose health conditions. Deep learning is also at the core of self-driving cars, where it is used for localization and mapping, motion planning and steering, and environment perception, as well as tracking driver state.

Perhaps the best-known example of deep learning is DeepMind’s AlphaGo.1 Go is a board game similar to Chess. AlphaGo was the first computer program to beat a professional Go player. In March 2016, it beat the top Korean professional, Lee Sedol, in a match watched by more than two hundred million people. The following year, in 2017, AlphaGo beat the world’s No. 1 ranking player, China’s Ke Jie.

In 2016 AlphaGo’s success was very surprising. At the time, most people expected that it would take many more years of research before a computer would be able to compete with top level human Go players. It had been known for a long time that programming a computer to play Go was much more difficult than programming it to play Chess. There are many more board configurations possible in Go than there are in Chess. This is because Go has a larger board and simpler rules than Chess. There are, in fact, more possible board configurations in Go than there are atoms in the universe. This massive search space and Go’s large branching factor (the number of board configurations that can be reached in one move) makes Go an incredibly challenging game for both humans and computers.

One way of illustrating the relative difficulty Go and Chess presented to computer programs is through a historical comparison of how Go and Chess programs competed with human players. In 1967, MIT’s MacHack-6 Chess program could successfully compete with humans and had an Elo rating2 well above novice level, and, by May 1997, DeepBlue was capable of beating the Chess world champion Gary Kasparov. In comparison, the first complete Go program wasn’t written until 1968 and strong human players were still able to easily beat the best Go programs in 1997.

The time lag between the development of Chess and Go computer programs reflects the difference in computational difficulty between these two games. However, a second historic comparison between Chess and Go illustrates the revolutionary impact that deep learning has had on the ability of computer programs to compete with humans at Go. It took thirty years for Chess programs to progress from human level competence in 1967 to world champion level in 1997. However, with the development of deep learning it took only seven years for computer Go programs to progress from advanced amateur to world champion; as recently as 2009 the best Go program in the world was rated at the low-end of advanced amateur. This acceleration in performance through the use of deep learning is nothing short of extraordinary, but it is also indicative of the types of progress that deep learning has enabled in a number of fields.

AlphaGo uses deep learning to evaluate board configurations and to decide on the next move to make. The fact that AlphaGo used deep learning to decide what move to make next is a clue to understanding why deep learning is useful across so many different domains and applications. Decision-making is a crucial part of life. One way to make decisions is to base them on your “intuition” or your “gut feeling.” However, most people would agree that the best way to make decisions is to base them on the relevant data. Deep learning enables data-driven decisions by identifying and extracting patterns from large datasets that accurately map from sets of complex inputs to good decision outcomes.

Artificial Intelligence, Machine Learning, and Deep Learning

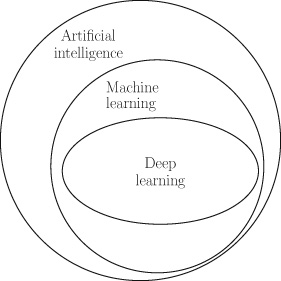

Deep learning has emerged from research in artificial intelligence and machine learning. Figure 1.1 illustrates the relationship between artificial intelligence, machine learning, and deep learning.

Deep learning enables data-driven decisions by identifying and extracting patterns from large datasets that accurately map from sets of complex inputs to good decision outcomes.

The field of artificial intelligence was born at a workshop at Dartmouth College in the summer of 1956. Research on a number of topics was presented at the workshop including mathematical theorem proving, natural language processing, planning for games, computer programs that could learn from examples, and neural networks. The modern field of machine learning draws on the last two topics: computers that could learn from examples, and neural network research.

Figure 1.1 The relationship between artificial intelligence, machine learning, and deep learning. Machine learning involves the development and evaluation of algorithms that enable a computer to extract (or learn) functions from a dataset (sets of examples). To understand what machine learning means we need to understand three terms: dataset, algorithm, and function.

In its simplest form, a dataset is a table where each row contains the description of one example from a domain, and each column contains the information for one of the features in a domain. For example, table 1.1 illustrates an example dataset for a loan application domain. This dataset lists the details of four example loan applications. Excluding the ID feature, which is only for ease of reference, each example is described using three features: the applicant’s annual income, their current debt, and their credit solvency.

Table 1.1. A dataset of loan applicants and their known credit solvency ratings

ID Annual Income Current Debt Credit Solvency 1 $150 -$100 100 2 $250 -$300 -50 3 $450 -$250 400 4 $200 -$350 -300 An algorithm is a process (or recipe, or program) that a computer can follow. In the context of machine learning, an algorithm defines a process to analyze a dataset and identify recurring patterns in the data. For example, the algorithm might find a pattern that relates a person’s annual income and current debt to their credit solvency rating. In mathematics, relationships of this type are referred to as functions.

A function is a deterministic mapping from a set of input values to one or more output values. The fact that the mapping is deterministic means that for any specific set of inputs a function will always return the same outputs. For example, addition is a deterministic mapping, and so 2+2 is always equal to 4. As we will discuss later, we can create functions for domains that are more complex than basic arithmetic, we can for example define a function that takes a person’s income and debt as inputs and returns their credit solvency rating as the output value. The concept of a function is very important to deep learning so it is worth repeating the definition for emphasis: a function is simply a mapping from inputs to outputs. In fact, the goal of machine learning is to learn functions from data. A function can be represented in many different ways: it can be as simple as an arithmetic operation (e.g., addition or subtraction are both functions that take inputs and return a single output), a sequence of if-then-else rules, or it can have a much more complex representation.

A function is a deterministic mapping from a set of input values to one or more output values.

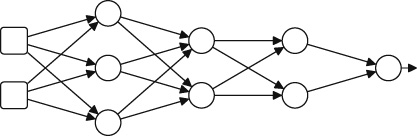

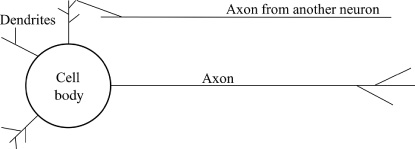

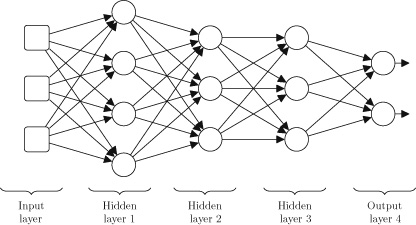

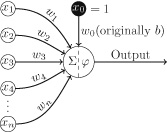

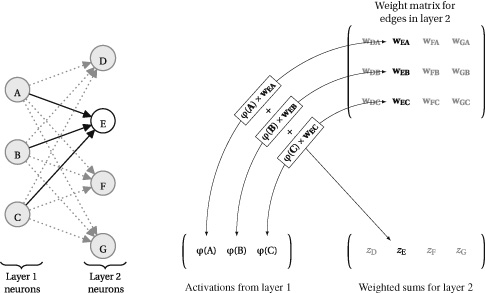

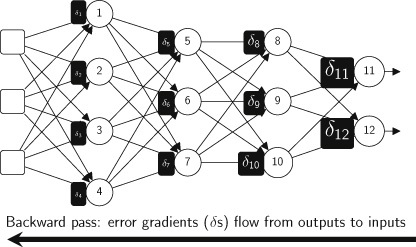

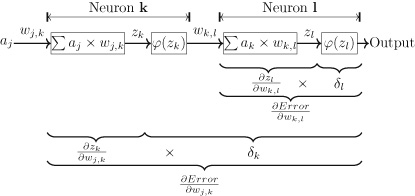

One way to represent a function is to use a neural network. Deep learning is the subfield of machine learning that focuses on deep neural network models. In fact, the patterns that deep learning algorithms extract from datasets are functions that are represented as neural networks. Figure 1.2 illustrates the structure of a neural network. The boxes on the left of the figure represent the memory locations where inputs are presented to the network. Each of the circles in this figure is called a neuron and each neuron implements a function: it takes a number of values as input and maps them to an output value. The arrows in the network show how the outputs of each neuron are passed as inputs to other neurons. In this network, information flows from left to right. For example, if this network were trained to predict a person’s credit solvency, based on their income and debt, it would receive the income and debt as inputs on the left of the network and output the credit solvency score through the neuron on the right.

A neural network uses a divide-and-conquer strategy to learn a function: each neuron in the network learns a simple function, and the overall (more complex) function, defined by the network, is created by combining these simpler functions. Chapter 3 will describe how a neural network processes information.

Figure 1.2 Schematic illustration of a neural network. What Is Machine Learning?

A machine learning algorithm is a search process designed to choose the best function, from a set of possible functions, to explain the relationships between features in a dataset. To get an intuitive understanding of what is involved in extracting, or learning, a function from data, examine the following set of sample inputs to an unknown function and the outputs it returns. Given these examples, decide which arithmetic operation (addition, subtraction, multiplication, or division) is the best choice to explain the mapping the unknown function defines between its inputs and output:

Most people would agree that multiplication is the best choice because it provides the best match to the observed relationship, or mapping, from the inputs to the outputs:

In this particular instance, choosing the best function is relatively straightforward, and a human can do it without the aid of a computer. However, as the number of inputs to the unknown function increases (perhaps to hundreds or thousands of inputs), and the variety of potential functions to be considered gets larger, the task becomes much more difficult. It is in these contexts that harnessing the power of machine learning to search for the best function, to match the patterns in the dataset, becomes necessary.

Machine learning involves a two-step process: training and inference. During training, a machine learning algorithm processes a dataset and chooses the function that best matches the patterns in the data. The extracted function will be encoded in a computer program in a particular form (such as if-then-else rules or parameters of a specified equation). The encoded function is known as a model, and the analysis of the data in order to extract the function is often referred to as training the model. Essentially, models are functions encoded as computer programs. However, in machine learning the concepts of function and model are so closely related that the distinction is often skipped over and the terms may even be used interchangeably.

In the context of deep learning, the relationship between functions and models is that the function extracted from a dataset during training is represented as a neural network model, and conversely a neural network model encodes a function as a computer program. The standard process used to train a neural network is to begin training with a neural network where the parameters of the network are randomly initialized (we will explain network parameters later; for now just think of them as values that control how the function the network encodes works). This randomly initialized network will be very inaccurate in terms of its ability to match the relationship between the various input values and target outputs for the examples in the dataset. The training process then proceeds by iterating through the examples in the dataset, and, for each example, presenting the input values to the network and then using the difference between the output returned by the network and the correct output for the example listed in the dataset to update the network’s parameters so that it matches the data more closely. Once the machine learning algorithm has found a function that is sufficiently accurate (in terms of the outputs it generates matching the correct outputs listed in the dataset) for the problem we are trying to solve, the training process is completed, and the final model is returned by the algorithm. This is the point at which the learning in machine learning stops.

Once training has finished, the model is fixed. The second stage in machine learning is inference. This is when the model is applied to new examples—examples for which we do not know the correct output value, and therefore we want the model to generate estimates of this value for us. Most of the work in machine learning is focused on how to train accurate models (i.e., extracting an accurate function from data). This is because the skills and methods required to deploy a trained machine learning model into production, in order to do inference on new examples at scale, are different from those that a typical data scientist will possess. There is a growing recognition within the industry of the distinctive skills needed to deploy artificial intelligence systems at scale, and this is reflected in a growing interest in the field known as DevOps, a term describing the need for collaboration between development and operations teams (the operations team being the team responsible for deploying a developed system into production and ensuring that these systems are stable and scalable). The terms MLOps, for machine learning operations, and AIOps, for artificial intelligence operations, are also used to describe the challenges of deploying a trained model. The questions around model deployment are beyond the scope of this book, so we will instead focus on describing what deep learning is, what it can be used for, how it has evolved, and how we can train accurate deep learning models.

One relevant question here is: why is extracting a function from data useful? The reason is that once a function has been extracted from a dataset it can be applied to unseen data, and the values returned by the function in response to these new inputs can provide insight into the correct decisions for these new problems (i.e., it can be used for inference). Recall that a function is simply a deterministic mapping from inputs to outputs. The simplicity of this definition, however, hides the variety that exists within the set of functions. Consider the following examples:

- • Spam filtering is a function that takes an email as input and returns a value that classifies the email as spam (or not).

- • Face recognition is a function that takes an image as input and returns a labeling of the pixels in the image that demarcates the face in the image.

- • Gene prediction is a function that takes a genomic DNA sequence as input and returns the regions of the DNA that encode a gene.

- • Speech recognition is a function that takes an audio speech signal as input and returns a textual transcription of the speech.

- • Machine translation is a function that takes a sentence in one language as input and returns the translation of that sentence in another language.

It is because the solutions to so many problems across so many domains can be framed as functions that machine learning has become so important in recent years.

Why Is Machine Learning Difficult?

There are a number of factors that make the machine learning task difficult, even with the help of a computer. First, most datasets will include noise3 in the data, so searching for a function that matches the data exactly is not necessarily the best strategy to follow, as it is equivalent to learning the noise. Second, it is often the case that the set of possible functions is larger than the set of examples in the dataset. This means that machine learning is an ill-posed problem: the information given in the problem is not sufficient to find a single best solution; instead multiple possible solutions will match the data. We can use the problem of selecting the arithmetic operation (addition, subtraction, multiplication, or division) that best matches a set of example input-output mappings for an unknown function to illustrate the concept of an ill-posed problem. Here are the example mappings for this function selection problem:

Given these examples, multiplication and division are better matches for the unknown function than addition and subtraction. However, it is not possible to decide whether the unknown function is actually multiplication or division using this sample of data, because both operations are consistent with all the examples provided. Consequently, this is an ill-posed problem: it is not possible to select a single best answer given the information provided in the problem.

One strategy to solve an ill-posed problem is to collect more data (more examples) in the hope that the new examples will help us to discriminate between the correct underlying function and the remaining alternatives. Frequently, however, this strategy is not feasible, either because the extra data is not available or is too expensive to collect. Instead, machine learning algorithms overcome the ill-posed nature of the machine learning task by supplementing the information provided by the data with a set of assumptions about the characteristics of the best function, and use these assumptions to influence the process used by the algorithm that selects the best function (or model). These assumptions are known as the inductive bias of the algorithm because in logic a process that infers a general rule from a set of specific examples is known as inductive reasoning. For example, if all the swans that you have seen in your life are white, you might induce from these examples the general rule that all swans are white. This concept of inductive reasoning relates to machine learning because a machine learning algorithm induces (or extracts) a general rule (a function) from a set of specific examples (the dataset). Consequently, the assumptions that bias a machine learning algorithm are, in effect, biasing an inductive reasoning process, and this is why they are known as the inductive bias of the algorithm.

So, a machine learning algorithm uses two sources of information to select the best function: one is the dataset, and the other (the inductive bias) is the assumptions that bias the algorithm to prefer some functions over others, irrespective of the patterns in the dataset. The inductive bias of a machine learning algorithm can be understood as providing the algorithm with a perspective on a dataset. However, just as in the real world, where there is no single best perspective that works in all situations, there is no single best inductive bias that works well for all datasets. This is why there are so many different machine learning algorithms: each algorithm encodes a different inductive bias. The assumptions encoded in the design of a machine leanring algorithm can vary in strength. The stronger the assumptions the less freedom the algorithm is given in selecting a function that fits the patterns in the dataset. In a sense, the dataset and inductive bias counterbalance each other: machine learning algorithms that have a strong inductive bias pay less attention to the dataset when selecting a function. For example, if a machine learning algorithm is coded to prefer a very simple function, no matter how complex the patterns in the data, then it has a very strong inductive bias.

In chapter 2 we will explain how we can use the equation of a line as a template structure to define a function. The equation of the line is a very simple type of mathematical function. Machine learning algorithms that use the equation of a line as the template structure for the functions they fit to a dataset make the assumption that the model they generate should encode a simple linear mapping from inputs to output. This assumption is an example of an inductive bias. It is, in fact, an example of a strong inductive bias, as no matter how complex (or nonlinear) the patterns in the data are the algorithm will be restricted (or biased) to fit a linear model to it.

One of two things can go wrong if we choose a machine learning algorithm with the wrong bias. First, if the inductive bias of a machine learning algorithm is too strong, then the algorithm will ignore important information in the data and the returned function will not capture the nuances of the true patterns in the data. In other words, the returned function will be too simple for the domain,4 and the outputs it generates will not be accurate. This outcome is known as the function underfitting the data. Alternatively, if the bias is too weak (or permissive), the algorithm is allowed too much freedom to find a function that closely fits the data. In this case, the returned function is likely to be too complex for the domain, and, more problematically, the function is likely to fit to the noise in the sample of the data that was supplied to the algorithm during training. Fitting to the noise in the training data will reduce the function’s ability to generalize to new data (data that is not in the training sample). This outcome is known as overfitting the data. Finding a machine learning algorithm that balances data and inductive bias appropriately for a given domain is the key to learning a function that neither underfits or overfits the data, and that, therefore, generalizes successfully in that domain (i.e., that is accurate at inference, or processing new examples that were not in the training data).

However, in domains that are complex enough to warrant the use of machine learning, it is not possible in advance to know what are the correct assumptions to use to bias the selection of the correct model from the data. Consequently, data scientists must use their intuition (i.e., make informed guesses) and also use trial-and-error experimentation in order to find the best machine learning algorithm to use in a given domain.

Neural networks have a relatively weak inductive bias. As a result, generally, the danger with deep learning is that the neural network model will overfit, rather than underfit, the data. It is because neural networks pay so much attention to the data that they are best suited to contexts where there are very large datasets. The larger the dataset, the more information the data provides, and therefore it becomes more sensible to pay more attention to the data. Indeed, one of the most important factors driving the emergence of deep learning over the last decade has been the emergence of Big Data. The massive datasets that have become available through online social platforms and the proliferation of sensors have combined to provide the data necessary to train neural network models to support new applications in a range of domains. To give a sense of the scale of the big data used in deep learning research, Facebook’s face recognition software, DeepFace, was trained on a dataset of four million facial images belonging to more than four thousand identities (Taigman et al. 2014).

The Key Ingredients of Machine Learning

The above example of deciding which arithmetic operation best explains the relationship between inputs and outputs in a set of data illustrates the three key ingredients in machine learning:

1. Data (a set of historical examples).

2. A set of functions that the algorithm will search through to find the best match with the data.

3. Some measure of fitness that can be used to evaluate how well each candidate function matches the data.All three of these ingredients must be correct if a machine learning project is to succeed; below we describe each of these ingredients in more detail.

We have already introduced the concept of a dataset as a two-dimensional table (or n × m matrix),5 where each row contains the information for one example, and each column contains the information for one of the features in the domain. For example, table 1.2 illustrates how the sample inputs and outputs of the first unknown arithmetic function problem in the chapter can be represented as a dataset. This dataset contains four examples (also known as instances), and each example is represented using two input features and one output (or target) feature. Designing and selecting the features to represent the examples is a very important step in any machine learning project.

As is so often the case in computer science, and machine learning, there is a tradeoff in feature selection. If we choose to include only a minimal number of features in the dataset, then it is likely that a very informative feature will be excluded from the data, and the function returned by the machine learning algorithm will not work well. Conversely, if we choose to include as many features as possible in the domain, then it is likely that irrelevant or redundant features will be included, and this will also likely result in the function not working well. One reason for this is that the more redundant or irrelevant features that are included, the greater the probability for the machine learning algorithm to extract patterns that are based on spurious correlations between these features. In these cases, the algorithm gets confused between the real patterns in the data and the spurious patterns that only appear in the data due to the particular sample of examples that have been included in the dataset.

Finding the correct set of features to include in a dataset involves engaging with experts who understand the domain, using statistical analysis of the distribution of individual features and also the correlations between pairs of features, and a trial-and-error process of building models and checking the performance of the models when particular features are included or excluded. This process of dataset design is a labor-intensive task that often takes up a significant portion of the time and effort expended on a machine learning project. It is, however, a critical task if the project is to succeed. Indeed, identifying which features are informative for a given task is frequently where the real value of machine learning projects emerge.

The second ingredient in a machine learning project is the set of candidate functions that the algorithm will consider as the potential explanation of the patterns in the data. In the unknown arithmetic function scenario previously given, the set of considered functions was explicitly specified and restricted to four: addition, subtraction, multiplication, or division. More generally, the set of functions is implicitly defined through the inductive bias of the machine learning algorithm and the function representation (or model) that is being used. For example, a neural network model is a very flexible function representation.

Table 1.2. A simple tabular dataset

Input 1 Input 2 Target 5 5 25 2 6 12 4 4 16 2 2 04 The third and final ingredient to machine learning is the measure of fitness. The measure of fitness is a function that takes the outputs from a candidate function, generated when the machine learning algorithm applies the candidate function to the data, and compares these outputs with the data, in some way. The result of this comparison is a value that describes the fitness of the candidate function relative to the data. A fitness function that would work for our unknown arithmetic function scenario is to count in how many of the examples a candidate function returns a value that exactly matches the target specified in the data. Multiplication would score four out of four on this fitness measure, addition would score one out of four, and division and subtraction would both score zero out of four. There are a large variety of fitness functions that can be used in machine learning, and the selection of the correct fitness function is crucial to the success of a machine learning project. The design of new fitness functions is a rich area of research in machine learning. Varying how the dataset is represented, and how the candidate functions and the fitness function are defined, results in three different categories of machine learning: supervised, unsupervised, and reinforcement learning.

Supervised, Unsupervised, and Reinforcement Learning

Supervised machine learning is the most common type of machine learning. In supervised machine learning, each example in the dataset is labeled with the expected output (or target) value. For example, if we were using the dataset in table 1.1 to learn a function that maps from the inputs of annual income and debt to a credit solvency score, the credit solvency feature in the dataset would be the target feature. In order to use supervised machine learning, our dataset must list the value of the target feature for every example in the dataset. These target feature values can sometimes be very difficult, and expensive, to collect. In some cases, we must pay human experts to label each example in a dataset with the correct target value. However, the benefit of having these target values in the dataset is that the machine learning algorithm can use these values to help the learning process. It does this by comparing the outputs a function produces with the target outputs specified in the dataset, and using the difference (or error) to evaluate the fitness of the candidate function, and use the fitness evaluation to guide the search for the best function. It is because of this feedback from the target labels in the dataset to the algorithm that this type of machine learning is considered supervised. This is the type of machine learning that was demonstrated by the example of choosing between different arithmetic functions to explain the behavior of an unknown function.

Unsupervised machine learning is generally used for clustering data. For example, this type of data analysis is useful for customer segmentation, where a company wishes to segment its customer base into coherent groups so that it can target marketing campaigns and/or product designs to each group. In unsupervised machine learning, there are no target values in the dataset. Consequently, the algorithm cannot directly evaluate the fitness of a candidate function against the target values in the dataset. Instead, the machine learning algorithm tries to identify functions that map similar examples into clusters, such that the examples in a cluster are more similar to the other examples in the same cluster than they are to examples in other clusters. Note that the clusters are not prespecified, or at most they are initially very underspecified. For example, the data scientist might provide the algorithm with a target number of clusters, based on some intuition about the domain, without providing explicit information on relative sizes of the clusters or regarding the characteristics of examples that belong in each cluster. Unsupervised machine learning algorithms often begin by guessing an initial clustering of the examples and then iteratively adjusting the clusters (by dropping instances from one cluster and adding them to another) so as to improve the fitness of the cluster set. The fitness functions used in unsupervised machine learning generally reward candidate functions that result in higher similarity within individual clusters and, also, high diversity between clusters.

Reinforcement learning is most relevant for online control tasks, such as robot control and game playing. In these scenarios, an agent needs to learn a policy for how it should act in an environment in order to be rewarded. In reinforcement learning, the goal of the agent is to learn a mapping from its current observation of the environment and its own internal state (its memory) to what action it should take: for instance, should the robot move forward or backward or should the computer program move the pawn or take the queen. The output of this policy (function) is the action that the agent should take next, given the current context. In these types of scenarios, it is difficult to create historic datasets, and so reinforcement learning is often carried out in situ: an agent is released into an environment where it experiments with different policies (starting with a potentially random policy) and over time updates its policy in response to the rewards it receives from the environment. If an action results in a positive reward, the mapping from the relevant observations and state to that action is reinforced in the policy, whereas if an action results in a negative reward, the mapping is weakened. Unlike in supervised and unsupervised machine learning, in reinforcement learning, the fact that learning is done in situ means that the training and inference stages are interleaved and ongoing. The agent infers what action it should do next and uses the feedback from the environment to learn how to update its policy. A distinctive aspect of reinforcement learning is that the target output of the learned function (the agent’s actions) is decoupled from the reward mechanism. The reward may be dependent on multiple actions and there may be no reward feedback, either positive or negative, available directly after an action has been performed. For example, in a chess scenario, the reward may be +1 if the agent wins the game and -1 if the agent loses. However, this reward feedback will not be available until the last move of the game has been completed. So, one of the challenges in reinforcement learning is designing training mechanisms that can distribute the reward appropriately back through a sequence of actions so that the policy can be updated appropriately. Google’s DeepMind Technologies generated a lot of interest by demonstrating how reinforcement learning could be used to train a deep learning model to learn control policies for seven different Atari computer games (Mnih et al. 2013). The input to the system was the raw pixel values from the screen, and the control policies specified what joystick action the agent should take at each point in the game. Computer game environments are particularly suited to reinforcement learning as the agent can be allowed to play many thousands of games against the computer game system in order to learn a successful policy, without incurring the cost of creating and labeling a large dataset of example situations with correct joystick actions. The DeepMind system got so good at the games that it outperformed all previous computer systems on six of the seven games, and outperformed human experts on three of the games.

Deep learning can be applied to all three machine learning scenarios: supervised, unsupervised, and reinforcement. Supervised machine learning is, however, the most common type of machine learning. Consequently, the majority of this book will focus on deep learning in a supervised learning context. However, most of the deep learning concerns and principles introduced in the supervised learning context also apply to unsupervised and reinforcement learning.

Why Is Deep Learning So Successful?

In any data-driven process the primary determinant of success is knowing what to measure and how to measure it. This is why the processes of feature selection and feature design are so important to machine learning. As discussed above, these tasks can require domain expertise, statistical analysis of the data, and iterations of experiments building models with different feature sets. Consequently, dataset design and preparation can consume a significant portion of time and resources expended in the project, in some cases approaching up to 80% of the total budget of a project (Kelleher and Tierney 2018). Feature design is one task in which deep learning can have a significant advantage over traditional machine learning. In traditional machine learning, the design of features often requires a large amount of human effort. Deep learning takes a different approach to feature design, by attempting to automatically learn the features that are most useful for the task from the raw data.

In any data-driven process the primary determinant of success is knowing what to measure and how to measure it.

To give an example of feature design, a person’s body mass index (BMI) is the ratio of a person’s weight (in kilograms) divided by their height (in meters squared). In a medical setting, BMI is used to categorize people as underweight, normal, overweight, or obese. Categorizing people in this way can be useful in predicting the likelihood of a person developing a weight-related medical condition, such as diabetes. BMI is used for this categorization because it enables doctors to categorize people in a manner that is relevant to these weight-related medical conditions. Generally, as people get taller they also get heavier. However, most weight-related medical conditions (such as diabetes) are not affected by a person’s height but rather the amount they are overweight compared to other people of a similar stature. BMI is a useful feature to use for the medical categorization of a person’s weight because it takes the effect of height on weight into account. BMI is an example of a feature that is derived (or calculated) from raw features; in this case the raw features are weight and height. BMI is also an example of how a derived feature can be more useful in making a decision than the raw features that it is derived from. BMI is a hand-designed feature: Adolphe Quetelet designed it in the eighteenth century.

As mentioned above, during a machine learning project a lot of time and effort is spent on identifying, or designing, (derived) features that are useful for the task the project is trying to solve. The advantage of deep learning is that it can learn useful derived features from data automatically (we will discuss how it does this in later chapters). Indeed, given large enough datasets, deep learning has proven to be so effective in learning features that deep learning models are now more accurate than many of the other machine learning models that use hand-engineered features. This is also why deep learning is so effective in domains where examples are described with very large numbers of features. Technically datasets that contain large numbers of features are called high-dimensional. For example, a dataset of photos with a feature for each pixel in a photo would be high-dimensional. In complex high-dimensional domains, it is extremely difficult to hand-engineer features: consider the challenges of hand-engineering features for face recognition or machine translation. So, in these complex domains, adopting a strategy whereby the features are automatically learned from a large dataset makes sense. Related to this ability to automatically learn useful features, deep learning also has the ability to learn complex nonlinear mappings between inputs and outputs; we will explain the concept of a nonlinear mapping in chapter 3, and in chapter 6 we will explain how these mappings are learned from data.

Summary and the Road Ahead

This chapter has focused on positioning deep learning within the broader field of machine learning. Consequently, much of this chapter has been devoted to introducing machine learning. In particular, the concept of a function as a deterministic mapping from inputs to outputs was introduced, and the goal of machine learning was explained as finding a function that matches the mappings from input features to the output features that are observed in the examples in the dataset.

Within this machine learning context, deep learning was introduced as the subfield of machine learning that focuses on the design and evaluation of training algorithms and model architectures for modern neural networks. One of the distinctive aspects of deep learning within machine learning is the approach it takes to feature design. In most machine learning projects, feature design is a human-intensive task that can require deep domain expertise and consume a lot of time and project budget. Deep learning models, on the other hand, have the ability to learn useful features from low-level raw data, and complex nonlinear mappings from inputs to outputs. This ability is dependent on the availability of large datasets; however, when such datasets are available, deep learning can frequently outperform other machine learning approaches. Furthermore, this ability to learn useful features from large datasets is why deep learning can often generate highly accurate models for complex domains, be it in machine translation, speech processing, or image or video processing. In a sense, deep learning has unlocked the potential of big data. The most noticeable impact of this development has been the integration of deep learning models into consumer devices. However, the fact that deep learning can be used to analyze massive datasets also has implications for our individual privacy and civil liberty (Kelleher and Tierney 2018). This is why understanding what deep learning is, how it works, and what it can and can’t be used for, is so important. The road ahead is as follows:

• Chapter 2 introduces some of the foundational concepts of deep learning, including what a model is, how the parameters of a model can be set using data, and how we can create complex models by combining simple models.

• Chapter 3 explains what neural networks are, how they work, and what we mean by a deep neural network.

• Chapter 4 presents a history of deep learning. This history focuses on the major conceptual and technical breakthroughs that have contributed to the development of the field of machine learning. In particular, it provides a context and explanation for why deep learning has seen such rapid development in recent years.

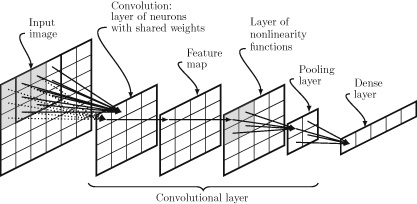

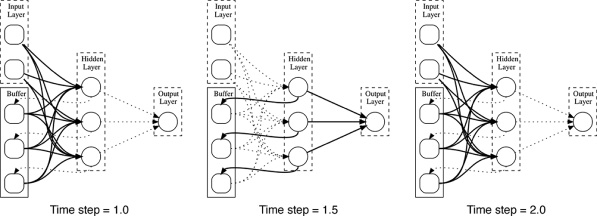

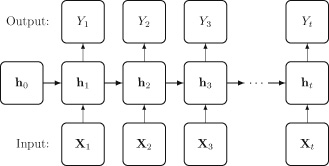

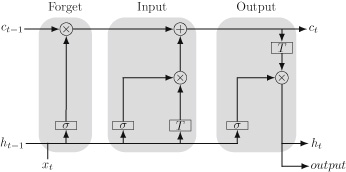

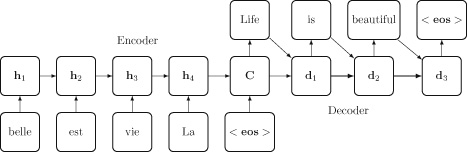

• Chapter 5 describes the current state of the field, by introducing the two deep neural architectures that are the most popular today: convolutional neural networks and recurrent neural networks. Convolutional neural networks are ideally suited to processing image and video data. Recurrent neural networks are ideally suited to processing sequential data such as speech, text, or time-series data. Understanding the differences and commonalities across these two architectures will give you an awareness of how a deep neural network can be tailored to the characteristics of a specific type of data, and also an appreciation of the breadth of the design space of possible network architectures.

• Chapter 6 explains how deep neural networks models are trained, using the gradient descent and backpropagation algorithms. Understanding these two algorithms will give you a real insight into the state of artificial intelligence. For example, it will help you to understand why, given enough data, it is currently possible to train a computer to do a specific task within a well-defined domain at a level beyond human capabilities, but also why a more general form of intelligence is still an open research challenge for artificial intelligence.

• Chapter 7 looks to the future in the field of deep learning. It reviews the major trends driving the development of deep learning at present, and how they are likely to contribute to the development of the field in the coming years. The chapter also discusses some of the challenges the field faces, in particular the challenge of understanding and interpreting how a deep neural network works.2 Conceptual Foundations

This chapter introduces some of the foundational concepts that underpin deep learning. The basis of this chapter is to decouple the initial presentation of these concepts from the technical terminology used in deep learning, which is introduced in subsequent chapters.

A deep learning network is a mathematical model that is (loosely) inspired by the structure of the brain. Consequently, in order to understand deep learning it is helpful to have an intuitive understanding of what a mathematical model is, how the parameters of a model can be set, how we can combine (or compose) models, and how we can use geometry to understand how a model processes information.

What Is a Mathematical Model?

In its simplest form, a mathematical model is an equation that describes how one or more input variables are related to an output variable. In this form a mathematical model is the same as a function: a mapping from inputs to outputs.

In any discussion relating to models, it is important to remember the statement by George Box that all models are wrong but some are useful! For a model to be useful it must have a correspondence with the real world. This correspondence is most obvious in terms of the meaning that can be associated with a variable. For example, in isolation a value such as 78,000 has no meaning because it has no correspondence with concepts in the real world. But yearly income=$78,000 tells us how the number describes an aspect of the real world. Once the variables in a model have a meaning, we can understand the model as describing a process through which different aspects of the world interact and cause new events. The new events are then described by the outputs of the model.

A very simple template for a model is the equation of a line:

In this equation

is the output variable,

is the input variable, and

and

are two parameters of the model that we can set to adjust the relationship the model defines between the input and the output.

Imagine we have a hypothesis that yearly income affects a person’s happiness and we wish to describe the relationship between these two variables.1 Using the equation of a line, we could define a model to describe this relationship as follows:

This model has a meaning because the variables in the model (as distinct from the parameters of the model) have a correspondence with concepts from the real world. To complete our model, we have to set the values of the model’s parameters:

and

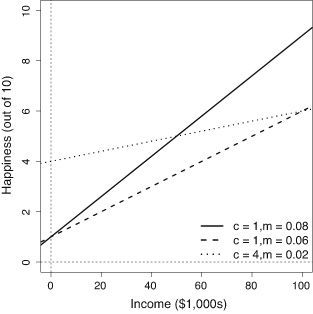

. Figure 2.1 illustrates how varying the values of each of these parameters changes the relationship defined by the model between income and happiness.

One important thing to notice in this figure is that no matter what values we set the model parameters to, the relationship defined by the model between the input and the output variable can be plotted as a line. This is not surprising because we used the equation of a line as the template to define our model, and this is why mathematical models that are based on the equation of a line are known as linear models. The other important thing to notice in the figure is how changing the parameters of the model changes the relationship between income and happiness.

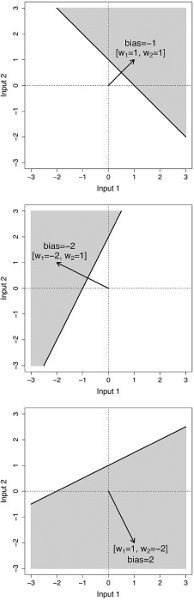

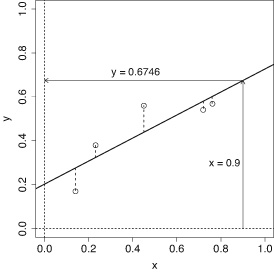

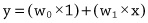

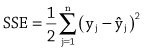

Figure 2.1 Three different linear models of how income affects happiness. The solid steep line, with parameters

, is a model of the world in which people with zero income have a happiness level of 1, and increases in income have a significant effect on people’s happiness. The dashed line, with parameters

, is a model in which people with zero income have a happiness level of 1 and increased income increases happiness, but at the slower rate compared to the world modeled by the solid line. Finally, the dotted line, parameters

, is a model of the world where no one is particularly unhappy—even people with zero income have a happiness of 4 out of 10—and although increases in income do affect happiness, the effect is moderate. This third model assumes that income has a relatively weak effect on happiness.

More generally, the differences between the three models in figure 2.1 show how making changes to the parameters of a linear model changes the model. Changing

causes the line to move up and done. This is most clearly seen if we focus on the y-axis: notice that the line defined by a model always crosses (or intercepts) the y-axis at the value that

is set to. This is why the

parameter in a linear model is known as the intercept. The intercept can be understood as specifying the value of the output variable when the input variable is zero. Changing the

parameter changes the angle (or slope) of the line. The slope parameter controls how quickly changes in income effect changes in happiness. In a sense, the slope value is a measure of how important income is to happiness. If income is very important (i.e., if small changes in income result in big changes in happiness), then the slope parameter of our model should be set to a large value. Another way of understanding this is to think of a slope parameter of a linear model as describing the importance, or weight, of the input variable in determining the value of the output.

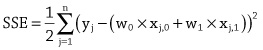

Linear Models with Multiple Inputs

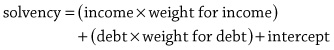

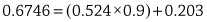

The equation of a line can be used as a template for mathematical models that have more than one input variable. For example, imagine yourself in a scenario where you have been hired by a financial institution to act as a loan officer and your job involves deciding whether or not a loan application should be granted. From interviewing domain experts you come up with a hypothesis that a useful way to model a person’s credit solvency is to consider both their yearly income and their current debts. If we assume that there is a linear relationship between these two input variables and a person’s credit solvency, then the appropriate mathematical model, written out in English would be:

Notice that in this model the

m

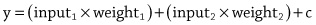

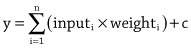

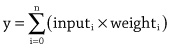

parameter has been replaced by a separate weight for each input variable, with each weight representing the importance of its associated input in determining the output. In mathematical notation this model would be written as:

where

represents the credit solvency output,

represents the income variable,

represents the debt variable, and

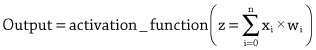

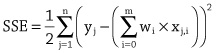

represents the intercept. Using the idea of adding a new weight for each new input to the model allows us to scale the equation of a line to as many inputs as we like. All the models defined in this way are still linear within the dimensions defined by the number of inputs and the output. What this means is that a linear model with two inputs and one output defines a flat plane rather than a line because that is what a two-dimensional line that has been extruded to three dimensions looks like.It can become tedious to write out a mathematical model that has a lot of inputs, so mathematicians like to write things in as compact a form as possible. With this in mind, the above equation is sometimes written in the short form:

This notation tells us that to calculate the output variable

y

we must first go through all

inputs and multiple each input by its corresponding weight, then we should sum together the results of these

multiplications, and finally we add the

intercept parameter to the result of the summation. The

symbol tells us that we use addition to combine the results of the multiplications, and the index

tells us that we multiply each input by the weight with the same index. We can make our notation even more compact by treating the intercept as a weight. One way to do this is to assume an

that is always equal to 1 and to treat the intercept as the weight on this input, that is,

. Doing this allows us to write out the model as follows:

Notice that the index now starts at 0, rather than 1, because we are now assuming an extra input,

input0=1

, and we have relabeled the intercept

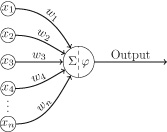

weight0.Although we can write down a linear model in a number of different ways, the core of a linear model is that the output is calculated as the sum of the n input values multiplied by their corresponding weights. Consequently, this type of model defines a calculation known as a weighted sum, because we weight each input and sum the results. Although a weighted sum is easy to calculate, it turns out to be very useful in many situations, and it is the basic calculation used in every neuron in a neural network.

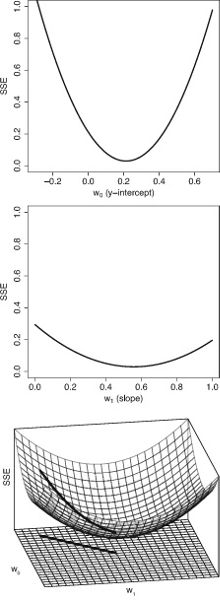

Setting the Parameters of a Linear Model

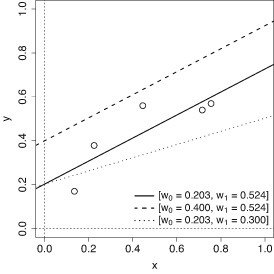

Let us return to our working scenario where we wish to create a model that enables us to calculate the credit solvency of individuals who have applied for a financial loan. For simplicity in presentation we will ignore the intercept parameter in this discussion as it is treated the same as the other parameters (i.e., the weights on the inputs). So, dropping the intercept parameter, we have the following linear model (or weighted sum) of the relationship between a person’s income and debt to their credit solvency:

The multiplication of inputs by weights, followed by a summation, is known as a weighted sum.

In order to complete our model, we need to specify the parameters of the model; that is, we need to specify the value of the weight for each input. One way to do this would be to use our domain expertise to come up with values for each of the parameters.

For example, if we assume that an increase in a person’s income has a bigger impact on their credit solvency than a similar increase in their debt, we should set the weighting for income to be larger than that of the debt. The following model encodes this assumption; in particular this model specifies that income is three times as important as debt in determining a person’s credit solvency:

The drawback with using domain knowledge to set the parameters of a model is that experts often disagree. For example, you may think that weighting income as three times as important as debt is not realistic; in that case the model can be adjusted by, for example, setting both income and debt to have an equal weighting, which would be equivalent to assuming that income and debt are equally important in determining credit solvency. One way to avoid arguments between experts is to use data to set the parameters. This is where machine learning helps. The learning done by machine learning is finding the parameters (or weights) of a model using a dataset.

Learning Model Parameters from Data

Later in the book we will describe the standard algorithm used to learn the weights for a linear model, known as the gradient descent algorithm. However, we can give a brief preview of the algorithm here. We start with a dataset containing a set of examples for which we have both the input values (income and debt) and the output value (credit solvency). Table 2.1 illustrates such a dataset from our credit solvency scenario.2

The learning done by machine learning is finding the parameters (or weights) of a model using a dataset.

We then begin the process of learning the weights by guessing initial values for each weight. It is very likely that this initial, guessed, model will be a very bad model. This is not a problem, however, because we will use the dataset to iteratively update the weights so that the model gets better and better, in terms of how well it matches the data. For the purpose of the example, we will use the model described above as our initial (guessed) model:

Table 2.1. A dataset of loan applications and known credit solvency rating of the applicant

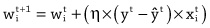

ID Annual income Current debt Credit solvency 1 $150 -$100 100 2 $250 -$300 -50 3 $450 -$250 400 4 $200 -$350 -300 The general process for improving the weights of the model is to select an example from the dataset and feed the input values from the example into the model. This allows us to calculate an estimate of the output value for the example. Once we have this estimated output, we can calculate the error of the model on the example by subtracting the estimated output from the correct output for the example listed in the dataset. Using the error of the model on the example, we can improve how well the model fits the data by updating the weights in the model using the following strategy, or learning rule:

• If the error is 0, then we should not change the weights of the model.

• If the error is positive, then the output of the model was too low, so we should increase the output of the model for this example by increasing the weights for all the inputs that had positive values for the example and decreasing the weights for all the inputs that had negative values for the example.

• If the error is negative, then the output of the model was too high, so we should decrease the output of the model for this example by decreasing the weights for all the inputs that had positive values for the example and increasing the weights for all the inputs that had negative values for the example.To illustrate the weight update process we will use example 1 from table 2.1 (income = 150, debt = -100, and solvency = 100) to test the accuracy of our guessed model and update the weights according to the resulting error.

When the input values for the example are passed into the model, the credit solvency estimate returned by the model is 350. This is larger than the credit solvency listed for this example in the dataset, which is 100. As a result, the error of the model is negative (100 – 350 = –250); therefore, following the learning rule described above, we should decrease the output of the model for this example by decreasing the weights for positive inputs and increasing the weights for negative inputs. For this example, the income input had a positive value and the debt input had a negative value. If we decrease the weight for income by 1 and increase the weight for debt by 1, we end up with the following model:

We can test if this weight update has improved the model by checking if the new model generates a better estimate for the example than the old model. The following illustrates pushing the same example through the new model:

This time the credit solvency estimate generated by the model matches the value in the dataset, showing that the updated model fits the data more closely than the original model. In fact, this new model generates the correct output for all the examples in the dataset.

In this example, we only needed to update the weights once in order to find a set of weights that made the behavior of the model consistent with all the examples in the dataset. Typically, however, it takes many iterations of presenting examples and updating weights to get a good model. Also, in this example, we have, for the sake of simplicity, assumed that the weights are updated by either adding or subtracting 1 from them. Generally, in machine learning, the calculation of how much to update each weight by is more complicated than this. However, these differences aside, the general process outlined here for updating the weights (or parameters) of a model in order to fit the model to a dataset is the learning process at the core of deep learning.

Combining Models

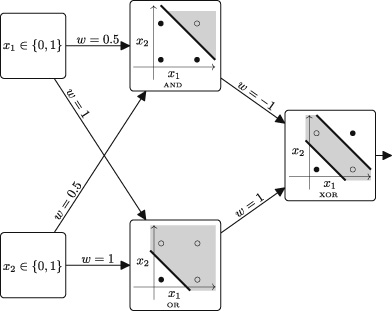

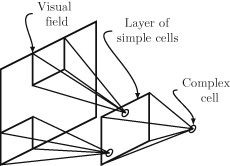

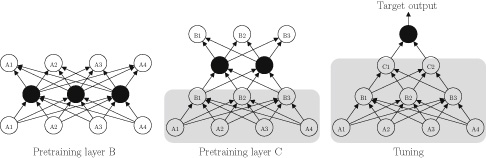

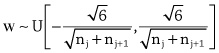

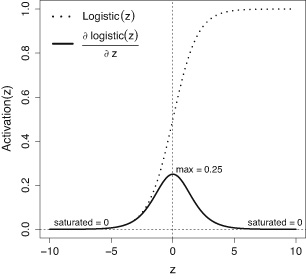

We now understand how we can specify a linear model to estimate an applicant’s credit solvency, and how we can modify the parameters of the model in order to fit the model to a dataset. However, as a loan officer our job is not simply to calculate an applicant’s credit solvency; we have to decide whether to grant the loan application or not. In other words, we need a rule that will take a credit solvency score as input and return a decision on the loan application. For example, we might use the decision rule that a person with a credit solvency above 200 will be granted a loan. This decision rule is also a model: it maps an input variable, in this case credit solvency, to an output variable, loan decision.